Don’t look now, but Brain Corp operates over 20,000 of its robots in factories, supermarkets, schools and warehouses, taking on time-consuming assignments like cleaning floors, taking inventory, restocking shelves, etc. And BrainOS®, the AI software platform that powers these autonomous mobile robots, doesn’t just run in the robots themselves — it runs in the cloud. Specifically, Google Cloud.

But that wasn’t always the case. Brain Corp recently partnered with Google Cloud to migrate its robotics platform from Amazon EKS to Google Kubernetes Engine (GKE) Autopilot. Running thousands of robots in production comes with tons of operational challenges, and Brain Corp needed a way to reduce the day-to-day ops and security maintenance overhead that building a platform on Kubernetes (k8s) usually entails.

Just by turning on Autopilot, they’ve offloaded all the work of keeping clusters highly available and patched with the latest security updates to Google Cloud Site Reliability Engineers (SREs) — a huge chunk of work. Brain Corp’s ops team can now focus on migrating additional robots to the new platform, not just “keeping the lights on” with their k8s clusters.

Making the switch to Google Cloud

When Brain Corp decided to migrate off EKS, they set out to find the cloud with the best technology, tools, and platform for integrating data and robotics. Brain Corp’s Cloud Development team began by building a proof-of-concept (POC) architecture to support their robots on both Google Cloud and another cloud provider. It became clear that Google Cloud was the right choice when the POC took only a week to get up and running there, whereas on the other cloud provider it took a month.

During the POC, Brain Corp realized benefits beyond ease of use. Google’s focus on simple integration between its data products contributed significantly to moving from POC to production. Brain Corp was able to offload Kubernetes operational tasks to Google SREs using GKE Autopilot, which allowed them to focus on migrating robots to their new platform on Google Cloud.

Making the switch to GKE Autopilot

Alex Gartner, Cloud Infrastructure Lead at Brain Corp, says his team is responsible for “empowering developers to develop and deploy stuff quickly without having to think too hard about it.” On EKS, Brain Corp had dedicated infrastructure engineers who did nothing but manage k8s. Gartner was expecting to have his engineers do the same on standard GKE, but once he got a whiff of Autopilot, he quickly changed course. Because GKE Autopilot clusters are secure out of the box and supported by Google SREs, Brain Corp was able to reduce their operations cost and provide a better and more secure experience for their customers.

Another reason for switching to Autopilot was that it provided more guardrails for developer environments. In the past, Brain Corp development environments might experience cluster outages because of a small misconfiguration. “With Autopilot, we don’t need to read every line of the docs on how to provision a k8s cluster with high availability and function in a degraded situation,” Gartner said. He noted that without Autopilot they would have to spend a whole month evaluating GKE failure scenarios to achieve the stability Autopilot provides by default. “Google SREs know their service better than we do so they’re able to think of failure scenarios we’ve never considered,” he said, much less ones the team could replicate. For example, Brain Corp engineers have no real way to simulate out-of-quota or out-of-capacity scenarios.

How has GKE Autopilot helped Brain Corp?

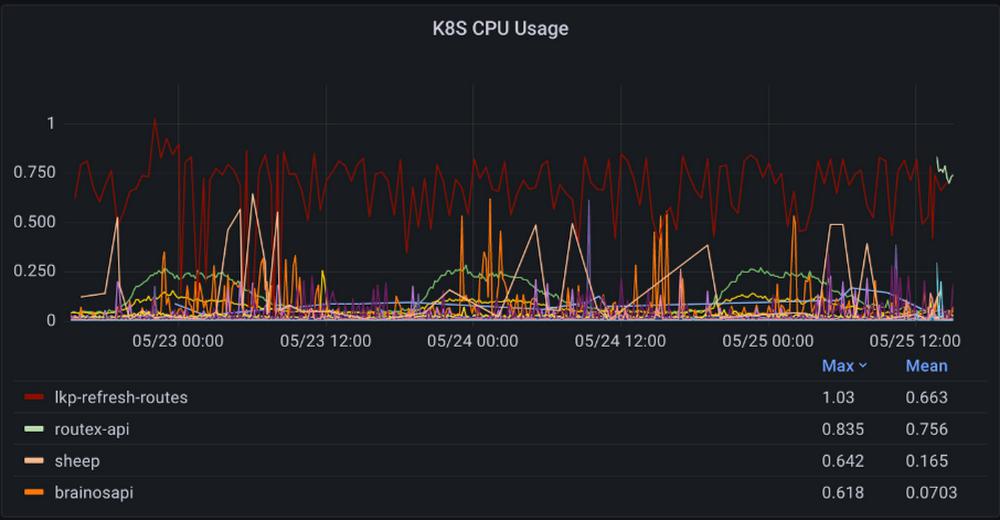

Since adopting Autopilot, the Cloud Infrastructure team at Brain Corp has received fewer pages in the middle of the night because of a cluster or service going down unexpectedly. The clusters are scaled and maintained by Google Cloud. By imposing high-level guardrails on the cluster that you can’t disable, Autopilot “provides a better blast shield by default,” Gartner said. It also makes collecting performance metrics and visualizing them in Grafana dashboards drastically easier, since it exports tons of k8s and performance metrics by default. “Now we don’t need to spend time gathering or thinking about how to collect that information,” he said.

Autopilot has also improved the developer experience for Brain Corp’s software engineers. They run a lot of background computing jobs and traditionally have not been able to easily fine-tune pod-level cost and compute requirements. Autopilot’s per-pod billing increases transparency, allowing devs to know exactly how much their jobs cost. They can also set compute requirements on the pods themselves. Billing at the app level instead of the cluster level makes chargeback easier than overprovisioning a cluster that five teams use and figuring out how to split the bill. “We don’t want to spend time optimizing k8s billing,” Gartner said. Cutting costs has been a huge advantage of switching to Autopilot. According to Gartner, there’s a “5-10% overhead you get billed for by just running a k8s node that we are not billed for anymore. We’re only paying for what our app actually uses.”
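To make the chargeback point concrete, here is a minimal Python sketch of how per-pod billing could be rolled up by team. It is an illustration only: the `PodUsage` type, the `chargeback_by_team` function, the team names, and the hourly rates are all hypothetical assumptions, not Brain Corp’s tooling or Google Cloud’s actual prices or APIs.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class PodUsage:
    """Resources one pod requested and how long it ran (hypothetical record)."""
    team: str
    vcpu_request: float        # vCPUs requested by the pod
    memory_gib_request: float  # GiB of memory requested by the pod
    hours: float               # hours the pod was running


def chargeback_by_team(pods, vcpu_hour_rate, gib_hour_rate):
    """Sum the cost of each pod's requested resources, grouped by team.

    The rates are placeholders supplied by the caller; real prices vary
    by region and over time.
    """
    totals = defaultdict(float)
    for pod in pods:
        hourly_cost = (pod.vcpu_request * vcpu_hour_rate
                       + pod.memory_gib_request * gib_hour_rate)
        totals[pod.team] += hourly_cost * pod.hours
    return dict(totals)


if __name__ == "__main__":
    # Made-up usage for two made-up teams sharing one cluster.
    usage = [
        PodUsage("nav", 2.0, 4.0, 10.0),
        PodUsage("nav", 1.0, 2.0, 5.0),
        PodUsage("fleet", 0.5, 1.0, 100.0),
    ]
    for team, cost in chargeback_by_team(usage, 0.05, 0.005).items():
        print(f"{team}: {cost:.2f}")
```

Because each pod carries its own cost, the split between teams falls out of a simple sum. With node-level billing, the same cluster would first need a rule for dividing idle node capacity among the teams.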

How can Autopilot improve?

GKE Autopilot launched last year, and isn’t at full feature parity with GKE Standard yet. For example, certain scientific workloads require or perform better using specific CPU instruction sets. “GPU support is something we would love to see,” Gartner said. Even so, the benefits of GKE Autopilot over EKS far outweighed the limitations, and in the interim, they can spin up GKE Standard clusters for specialized workloads.

With all the extra cycles that GKE Autopilot gives back to Brain Corp’s developers and engineers, they have lots of time to dream up new things that robots can do for us — watch this space.

Curious about GKE and GKE Autopilot? Check out Google Cloud’s KubeCon talks available on-demand.

By: Chris Willis (Customer Engineer)

Source: Google Cloud Blog
