Pub/Sub’s ingestion of data into BigQuery can be critical to making your latest business data immediately available for analysis. Until today, you had to create intermediate Dataflow jobs before your data could be ingested into BigQuery with the proper schema. Dataflow pipelines (including ones built with Dataflow Templates) get the job done well, but they can be more than you need when the use case simply requires exporting raw, untransformed data to BigQuery.

Starting today, you no longer have to write or run your own pipelines for data ingestion from Pub/Sub into BigQuery. We are introducing a new type of Pub/Sub subscription called a “BigQuery subscription” that writes directly from Cloud Pub/Sub to BigQuery. This new extract, load, and transform (ELT) path simplifies your event-driven architecture. For Pub/Sub messages that need advanced preload transformations or data processing before they land in BigQuery (such as masking PII), we still recommend going through Dataflow.

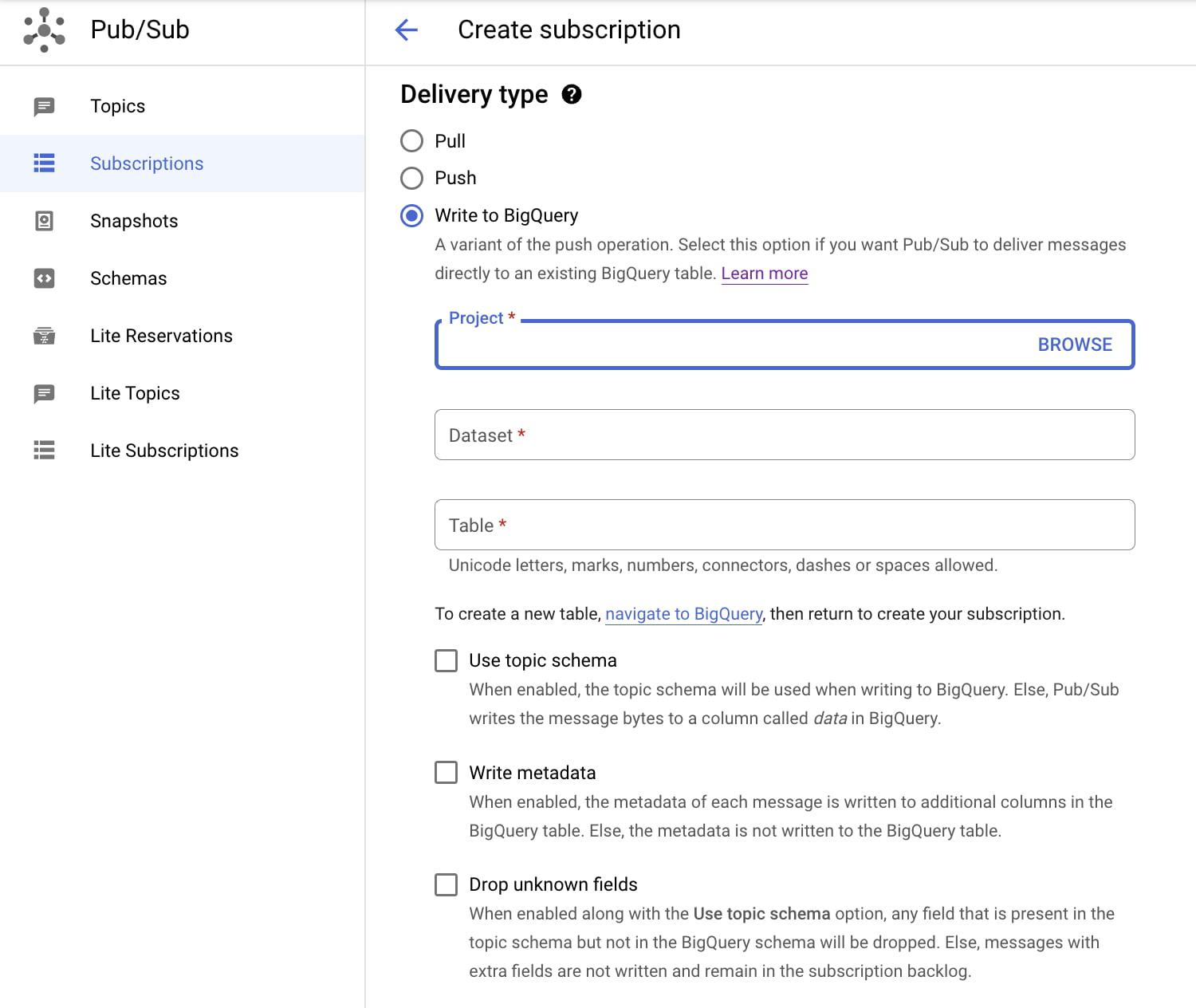

Get started by creating a new BigQuery subscription that is associated with a Pub/Sub topic. You will need to designate an existing BigQuery table for this subscription. Note that the table schema must adhere to certain compatibility requirements. By taking advantage of Pub/Sub topic schemas, you have the option of writing Pub/Sub messages to BigQuery tables with compatible schemas. If no schema is enabled for your topic, messages are written to BigQuery as bytes or strings. Once the BigQuery subscription is created, messages are ingested directly into BigQuery.
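As a rough illustration of the setup described above, here is a minimal Python sketch that builds a create-subscription request with a BigQuery configuration and passes it to the `google-cloud-pubsub` client. The project, topic, subscription, and table names are hypothetical placeholders, and the sketch assumes a client library version recent enough to support BigQuery subscriptions, an existing topic and table, and credentials with the required permissions.

```python
def build_bigquery_subscription_request(
    project_id, topic_id, subscription_id, table,
    use_topic_schema=False, write_metadata=False,
):
    """Build the request for a subscription that writes to a BigQuery table.

    `table` is assumed to be in "project.dataset.table" form and must
    already exist with a compatible schema.
    """
    return {
        "name": f"projects/{project_id}/subscriptions/{subscription_id}",
        "topic": f"projects/{project_id}/topics/{topic_id}",
        "bigquery_config": {
            "table": table,
            # True: map topic schema fields to table columns.
            # False: write the message payload as bytes or a string.
            "use_topic_schema": use_topic_schema,
            # True: also write message metadata columns.
            "write_metadata": write_metadata,
        },
    }


def create_bigquery_subscription(request):
    """Send the request to Pub/Sub (needs google-cloud-pubsub and credentials)."""
    from google.cloud import pubsub_v1  # imported lazily; third-party package

    subscriber = pubsub_v1.SubscriberClient()
    with subscriber:
        return subscriber.create_subscription(request=request)


# Hypothetical names, for illustration only.
request = build_bigquery_subscription_request(
    project_id="my-project",
    topic_id="events",
    subscription_id="events-to-bq",
    table="my-project.analytics.events_raw",
    use_topic_schema=True,
)
# create_bigquery_subscription(request)  # uncomment to call the real API
```

Keeping the request construction separate from the API call makes it easy to inspect the configuration before anything is created in your project.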

Better yet, there is no separate BigQuery data ingestion charge when you use this new direct method; you pay only for the Pub/Sub throughput you use. Ingestion through a BigQuery subscription costs $50/TiB, based on read (subscribe) throughput from the subscription. This is a simpler and cheaper billing experience than the alternative path through a Dataflow pipeline, where you would pay for the Pub/Sub read, the Dataflow job, and BigQuery data ingestion. See the pricing page for details.
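To make the billing model concrete, the following sketch estimates the charge from the $50/TiB rate quoted above. It assumes the charge scales linearly with bytes read from the subscription and ignores any free tier, minimum message size, or later price changes, so treat the result as a back-of-the-envelope figure rather than a quote. The function name is hypothetical.

```python
TIB = 2**40  # bytes in one tebibyte
RATE_USD_PER_TIB = 50.0  # rate quoted in this article; check the pricing page


def bigquery_subscription_cost_usd(bytes_read):
    """Estimate the Pub/Sub charge for bytes read by a BigQuery subscription."""
    return bytes_read / TIB * RATE_USD_PER_TIB


# Example: 2 TiB of subscribe throughput in a billing period.
estimate = bigquery_subscription_cost_usd(2 * TIB)
print(f"${estimate:.2f}")
```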

To get started, you can read more about Pub/Sub’s BigQuery subscription or simply create a new BigQuery subscription for a topic using Cloud Console or the gcloud CLI.

By: Qiqi Wu (Product Manager)

Source: Google Cloud Blog
