Over the years, several myths have emerged about performance on Android. While some myths can be entertaining or amusing, being sent in the wrong direction when looking to create performant Android apps is no fun.

In this blog post, in the spirit of MythBusters, we’re going to test these myths. For our myth-busting, we use real-world examples and tools that you can use yourself. We focus on dominant use patterns: the things that you, as developers, are probably doing in your app. There is one important caveat: always measure before adopting a coding practice for performance reasons. That said, let’s bust some myths.


Myth 1: Kotlin apps are bigger and slower than Java apps

The Google Drive team has converted their app from Java to Kotlin. This conversion involved more than 16,000 lines of code over 170 files covering more than 40 build targets. Among the metrics the team monitors, one of the first was startup time.

As you can see, the conversion to Kotlin had no material effect.

Indeed, across the entire benchmark suite, the team observed no performance difference. They did see a tiny increase in compile time and compiled code size, but at about 2% there was no significant impact.

On the benefit side, the team achieved a 25% reduction in lines of code. Their code is cleaner, clearer, and easier to maintain.

One thing to note about Kotlin is that you can and should be using code shrinking tools, such as R8, which even has specific optimizations for Kotlin.

Myth 2: Getters and setters are expensive

Some developers opt for public fields instead of using setters and getters for performance reasons. The usual code pattern looks like this, with getFoo as our getter:

    public class ToyClass {
        public int foo;
        public int getFoo() { return foo; }
    }
    ToyClass tc = new ToyClass();

We compared this with using a public field, tc.foo, where the code breaks object encapsulation to access the fields directly.

We benchmarked this using the Jetpack Benchmark library on a Pixel 3 with Android 10. The benchmark library provides a fantastic way to test your code easily. Among the library’s features is that it prewarms the code, so the results represent stable numbers.

So, what did the benchmarks show?

The getter version performs as well as the version going to the field directly. This result isn’t a surprise as the Android Runtime (ART) inlines all trivial access methods in your code. So the code that gets executed after JIT or AOT compilation is the same. Indeed, when you access a field in Kotlin — in this example, tc.foo — you’re accessing that value using a getter or a setter depending on the context. However, because we inline all accessors, ART has you covered here: there is no difference in performance.
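To make the comparison concrete, here is a minimal sketch of the two access paths. It runs on a desktop JVM rather than ART, and the timing loop is deliberately crude, so it is no substitute for the Jetpack Benchmark library on a real device. The class name `AccessorDemo` and the helpers `build`, `sumViaGetter`, and `sumViaField` are hypothetical names chosen for illustration.

```java
public class AccessorDemo {
    // Mirrors the article's ToyClass: a public field plus a trivial getter.
    static class ToyClass {
        public int foo;
        ToyClass(int foo) { this.foo = foo; }
        public int getFoo() { return foo; }
    }

    // Hypothetical helper: builds n objects whose foo values are 0..n-1.
    static ToyClass[] build(int n) {
        ToyClass[] items = new ToyClass[n];
        for (int i = 0; i < n; i++) {
            items[i] = new ToyClass(i);
        }
        return items;
    }

    // Reads every value through the getter.
    static long sumViaGetter(ToyClass[] items) {
        long sum = 0;
        for (ToyClass tc : items) {
            sum += tc.getFoo();
        }
        return sum;
    }

    // Reads every value straight from the public field.
    static long sumViaField(ToyClass[] items) {
        long sum = 0;
        for (ToyClass tc : items) {
            sum += tc.foo;
        }
        return sum;
    }

    public static void main(String[] args) {
        ToyClass[] items = build(1_000_000);

        // Crude warm-up so the JIT has a chance to compile both paths.
        for (int i = 0; i < 20; i++) {
            sumViaGetter(items);
            sumViaField(items);
        }

        long t0 = System.nanoTime();
        long viaGetter = sumViaGetter(items);
        long t1 = System.nanoTime();
        long viaField = sumViaField(items);
        long t2 = System.nanoTime();

        System.out.println("getter: sum=" + viaGetter + " ns=" + (t1 - t0));
        System.out.println("field:  sum=" + viaField + " ns=" + (t2 - t1));
    }
}
```

Both methods compute the same sum. Any timing difference printed by this sketch says little about ART, so treat it as a starting point for a proper on-device benchmark.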

If you’re not using Kotlin, unless you have a good reason to make fields public, you shouldn’t break the good encapsulation practices. It’s useful to hide the private data of your class, and you don’t need to expose it just for performance reasons. Stick with getters and setters.

Myth 3: Lambdas are slower than inner classes

Lambdas, especially with the introduction of streaming APIs, are a convenient language construct that enables very concise code.

Let’s take some code where we sum the value of some inner fields from an array of objects. First, using the streaming APIs with a map-reduce operation.

    ArrayList<ToyClass> array = build();
    int sum = array.stream().map(tc -> tc.foo).reduce(0, (a, b) -> a + b);

Here, the first lambda converts the object to an integer and the second one sums the two values that it produces.

This compares to defining equivalent classes for the lambda expressions.

    ToyClassToInteger toyClassToInteger = new ToyClassToInteger();
    SumOp sumOp = new SumOp();
    int sum = array.stream().map(toyClassToInteger).reduce(0, sumOp);

There are two nested classes: ToyClassToInteger, which converts each object to an integer, and SumOp, which performs the sum operation.
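The article does not show the bodies of the two nested classes, so the following is a sketch of how they might look, assuming they implement `java.util.function.Function` and `java.util.function.BinaryOperator`. The wrapper class `LambdaDemo`, the `build(int)` helper, and the two `sumWith...` methods are hypothetical names added for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.BinaryOperator;
import java.util.function.Function;

public class LambdaDemo {
    static class ToyClass {
        public int foo;
        ToyClass(int foo) { this.foo = foo; }
    }

    // Named-class equivalent of the lambda tc -> tc.foo (assumed shape).
    static class ToyClassToInteger implements Function<ToyClass, Integer> {
        @Override
        public Integer apply(ToyClass tc) {
            return tc.foo;
        }
    }

    // Named-class equivalent of the lambda (a, b) -> a + b (assumed shape).
    static class SumOp implements BinaryOperator<Integer> {
        @Override
        public Integer apply(Integer a, Integer b) {
            return a + b;
        }
    }

    // Hypothetical helper: builds n objects whose foo values are 0..n-1.
    static List<ToyClass> build(int n) {
        List<ToyClass> array = new ArrayList<>();
        for (int i = 0; i < n; i++) {
            array.add(new ToyClass(i));
        }
        return array;
    }

    static int sumWithLambdas(List<ToyClass> array) {
        return array.stream().map(tc -> tc.foo).reduce(0, (a, b) -> a + b);
    }

    static int sumWithClasses(List<ToyClass> array) {
        ToyClassToInteger toyClassToInteger = new ToyClassToInteger();
        SumOp sumOp = new SumOp();
        return array.stream().map(toyClassToInteger).reduce(0, sumOp);
    }

    public static void main(String[] args) {
        List<ToyClass> array = build(100);
        System.out.println("lambdas: " + sumWithLambdas(array));
        System.out.println("classes: " + sumWithClasses(array));
    }
}
```

Both variants feed the same stream pipeline. The only difference is how much code it takes to express the two operations.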

Clearly, the first example, the one with lambdas, is much more elegant: most code reviewers will likely say go with the first option.

However, what about performance differences? Again we used the Jetpack Benchmark library on a Pixel 3 with Android 10 and we found no performance difference.

You can see from the graph that we also define a top-level class, and there was no difference in performance there either.

The reason for this similarity in performance is that lambdas are translated to anonymous inner classes.

So, instead of writing inner classes, go for lambdas: they produce much more concise, cleaner code that your code reviewers will love.

Myth 4: Allocating objects is expensive, I should use pools

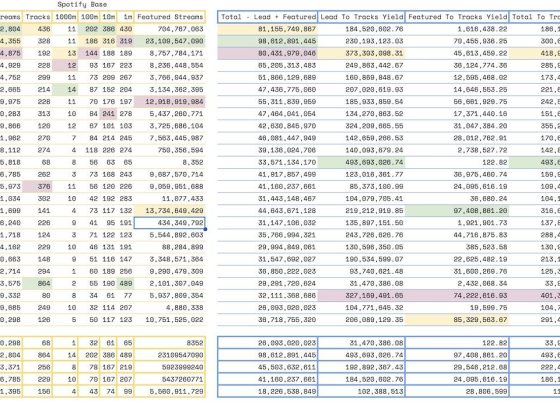

Android uses state-of-the-art memory allocation and garbage collection. Object allocation has improved in almost every release, as shown in the following graph.

Garbage collection has improved significantly from release to release as well. Today, garbage collection does not have an impact on jank or app smoothness. The following graph shows the improvement we made in Android 10 for the collection of short-lived objects with generational concurrent collection. These improvements are visible in the new release, Android 11, as well.

Throughput has increased substantially in GC benchmarks such as H2, by over 170%, and with real-world apps, such as Google Sheets, by 68%.

So how does that impact coding choices, such as whether to allocate objects using pools?

If you assume that garbage collection is inefficient and memory allocation is expensive, you would conclude that the less garbage you create, the less work garbage collection has to do. So, rather than creating new objects every time you need them, you maintain a pool of frequently used types and get the objects from there. You might therefore implement something like this:

    Pool<A> pool = new Pool<>(50);

    void foo() {
        A a = pool.acquire();
        …
        pool.release(a);
    }

There are some code details skipped here but you define a pool in your code, acquire an object from the pool, and eventually release it.
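For readers who want to see the skipped details, here is a minimal sketch of what such a pool could look like. It is not the implementation used in the benchmark. The `Pool` class, its `(capacity, factory)` constructor, and the `idleCount` helper are hypothetical, and the sketch is single-threaded with no synchronization.

```java
import java.util.ArrayDeque;
import java.util.function.Supplier;

public class PoolDemo {
    // Stand-in for the pooled type A from the article.
    static class A {
        int value;
    }

    // Hypothetical bounded pool; not thread-safe.
    static class Pool<T> {
        private final ArrayDeque<T> free = new ArrayDeque<>();
        private final Supplier<T> factory;
        private final int capacity;

        Pool(int capacity, Supplier<T> factory) {
            this.capacity = capacity;
            this.factory = factory;
        }

        // Reuses an idle object if one exists, otherwise allocates a new one.
        T acquire() {
            T item = free.pollFirst();
            return item != null ? item : factory.get();
        }

        // Keeps the object for reuse unless the pool is already full,
        // in which case it is dropped and left to the garbage collector.
        void release(T item) {
            if (free.size() < capacity) {
                free.addFirst(item);
            }
        }

        // Number of objects currently idle in the pool.
        int idleCount() {
            return free.size();
        }
    }

    public static void main(String[] args) {
        Pool<A> pool = new Pool<>(50, A::new);
        A a = pool.acquire();
        a.value = 42;      // use the object
        pool.release(a);   // hand it back for reuse
        System.out.println("idle objects: " + pool.idleCount());
    }
}
```

Note that every idle object stays reachable for as long as the pool itself does, which matters for the garbage collection results discussed next.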

To test this, we implemented a microbenchmark to measure two things: the overhead of a standard allocation compared with retrieving an object from a pool, and the CPU overhead, to determine whether garbage collection affects the performance of the app.

In this case, we used a Pixel 2 XL with Android 10 running the allocation code thousands of times in a very tight loop. We also simulated different object sizes by adding extra fields, because performance might be different for small or large objects.

First, the results for object allocation overhead:

Secondly, the results for the CPU overhead for garbage collection:

You can see that the difference between standard allocation and pooling the object is minimal. However, when it comes to garbage collection for larger objects the pool solution gets slightly worse.

This behavior is actually what we expect from garbage collection, because by pooling objects you increase the memory footprint of your app. Suddenly, you hold on to too much memory, and even if the number of garbage collection invocations is reduced because you are pooling objects, the cost of each garbage collection invocation is higher. This is because the garbage collector must traverse much more memory in order to decide what’s still alive and what should be collected.

So, is this myth busted? Not entirely. Whether object pools are more efficient depends on your app’s needs. First, do remember the disadvantages, aside from code complexity, of using pools:

- May have a higher memory footprint

- Risk keeping objects alive longer than needed

- Requires a very efficient pool implementation

However, the pool approach could be useful for large or expensive-to-allocate objects.

The key thing to remember is to test and measure before you choose your option.

Myth 5: Profiling my debuggable app is fine

Profiling your app while it’s debuggable would be really convenient. After all, you’re usually coding in debuggable mode. And even if profiling in debuggable mode is a little inaccurate, being able to iterate more quickly should compensate. Unfortunately, it doesn’t.

To test this myth we looked at some benchmarks for common activity-related workflows. The results are in the following graph.

On some of the tests, such as deserialization, there is no impact. However, for others, there is a 50% or more regression on the benchmark. We even found examples that were 100% slower. This is because the runtime does very little optimization to your code when it’s debuggable so the code users run on production devices is very different.

The outcome of profiling in debuggable is that you can be misdirected to hotspots in your app and might waste time optimizing something that doesn’t need optimizing.

Stranger things

We’re going to move away from myth-busting now and turn our attention to some stranger things. These aren’t really myths that we could bust. Rather, they are things that may not be immediately obvious or easy to analyze but where the results might turn your world upside down.

Strangeness 1: Multidex: Does it affect my app performance?

APKs are getting larger and larger. They haven’t fitted into the constraints of the traditional dex spec for a while. Multidex is the solution you should be using if your code exceeds the method count limitation.

The question is, how many methods are too many? And is there a performance impact if an app has a large number of dex files? You might have many dex files not because your app is too large, but simply because you want to split them by feature for easier development.

To explore any performance impact of multiple dex files we took the calculator app. By default, it is a single dex file app. We then split it into five dex files, based on its package boundary to simulate a split according to features.

We then tested several aspects of performance, beginning with startup time.

So splitting the dex file had no impact here. For other apps, there could be a slight overhead depending on several factors: how big the app is and how it’s been split. However, as long as you split the dex file reasonably and don’t add hundreds of them the impact on the startup time should be minimal.

What about APK size and memory?

As you can see there is a slight increase in both the APK size and in the app’s runtime memory footprint. This is because, when you split the app into multiple dex files, each dex file has some duplicated data for tables of symbols and caches.

However, you can minimize this increase by reducing the dependencies between the dex files. In our case, we made no effort to minimize it. If we had attempted to minimize the dependencies, we would have looked to the R8 and D8 tools. These tools automate the split of the dex files and help you avoid common pitfalls and minimize dependencies. For example, these tools won’t create more dex files than are needed and won’t put all the startup classes in the main file. However, if you do a custom split of the dex files, always measure whatever you’re breaking out.

Strangeness 2: Dead code

One of the benefits of using a runtime with a JIT compiler like ART is that the runtime can profile code and then optimize it. There is a theory that if code is not profiled by the interpreter/JIT system, it is probably not executed either. To test this theory, we examined the ART profiles produced by the Google app. We found that a significant portion of the app code does not get profiled by the ART interpreter/JIT system. This is an indication that a lot of code was actually never executed on devices.

There are several types of code that might not get profiled:

- error-handling code, which hopefully doesn’t get executed a lot.

- code for backward compatibility, which doesn’t get executed on all devices, especially not on devices running Android 5 or later.

- code for infrequently used features.

However, the skewed distribution we see is a strong indication that there might be a lot of unnecessary code in apps.

The quick, easy, and free way to remove unnecessary code is to minify with R8. Next, if you haven’t done so already, convert your app to use the Android App Bundle and Play Feature Delivery. They allow you to improve the user experience by installing only the features that get used.

Learnings

We’ve busted a number of myths about Android performance but also seen that, in some cases, things aren’t clear-cut. It’s therefore critical to benchmark and measure before opting for complex optimizations or even small optimizations that break good coding practices.

There are plenty of tools that can help you measure and decide on what works best for your app. For example, Android Studio has profilers for native and non-native code, and even for battery and network use. There are tools that can dig deeper, such as Perfetto and Systrace. These tools can provide a very detailed view of what happens, for example, during app startup or a segment of your execution.

The Jetpack Benchmark library takes away all the complexity around measuring and benchmarking. We strongly encourage you to use it in your continuous integration to track performance and to see how your apps behave as you add more features to them. And last, but not least, do not profile in debug mode.

Java is a registered trademark of Oracle and/or its affiliates.
