Google is dedicated to building infrastructure that lets you modernize and run your workloads, and connect with more users, no matter where they are in the world. Part of this infrastructure is our extensive global network, which provides best-in-class connectivity to Google Cloud customers, and our edge network, which lets you connect with ISPs and end customers.

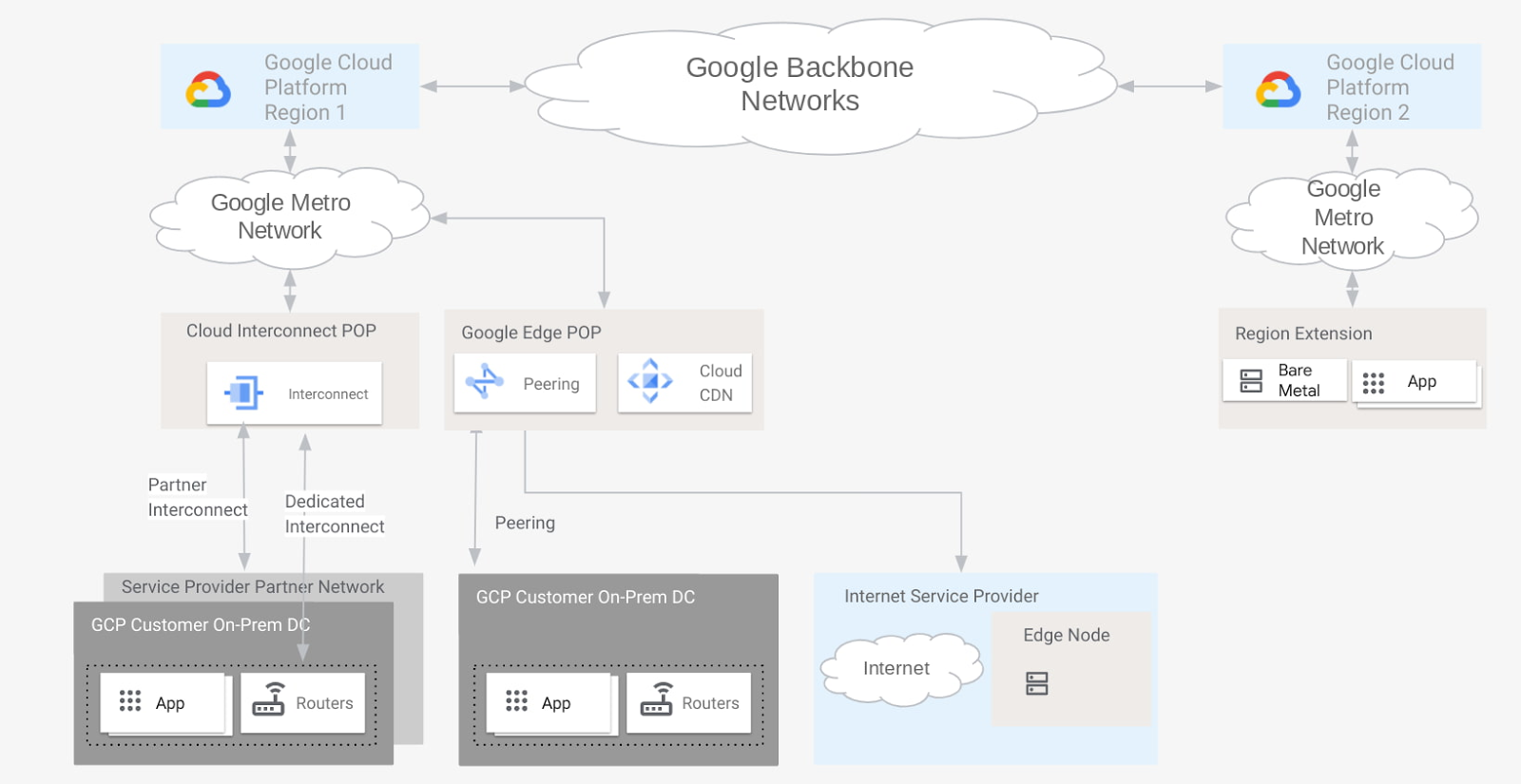

When it comes to choosing how you connect to Google Cloud, we provide a variety of flexible options that optimize performance and cost. But when it comes to the Google network edge, what constitutes an edge point? Depending on your requirements and connectivity preferences, your organization may view different demarcation points in our network as the “edge,” each of which performs traffic handoffs in its own way. For example, a telco customer might consider the edge to be where Google Global Caches (GGC) are located, rather than an edge point of presence (POP) where peering occurs.

In this blog post, we describe the various network points of presence within our edge, how they connect to Google Cloud, and how traffic handoffs occur. Armed with this information, you can make a more informed decision about how best to connect to Google Cloud.

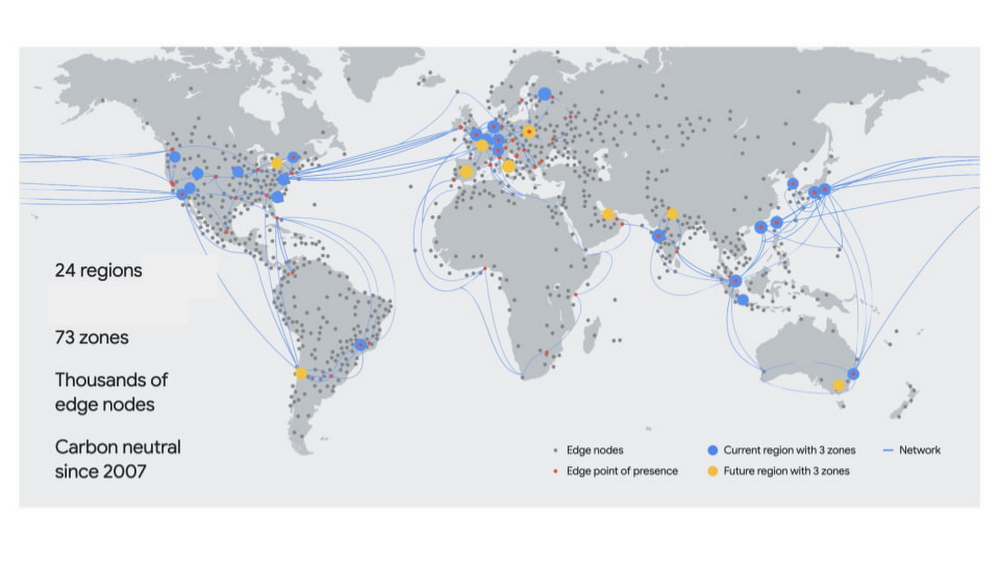

GCP regions and zones

The first thing to think about when considering your edge options is where your workloads run in Google Cloud. Google Cloud hosts compute resources in multiple locations worldwide, which comprise different regions and zones. A region includes data centers in a specific geographical location where you can host your resources. Regions have three or more zones. For example, the us-west1 region denotes a region on the west coast of the United States that has three zones: us-west1-a, us-west1-b, and us-west1-c.
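As a small illustration of the naming in the example above, the sketch below derives a region from a zone name and groups zones by region. It assumes that a zone name is always the region name followed by a hyphen and a short suffix, which is inferred from the us-west1 example rather than stated as a rule here. The helper names `region_of` and `group_by_region` are hypothetical and not part of any Google Cloud library.

```python
def region_of(zone: str) -> str:
    """Return the region part of a zone name such as 'us-west1-a'.

    Assumes the '<region>-<suffix>' pattern seen in the us-west1 example.
    """
    region, sep, suffix = zone.rpartition("-")
    if not sep or not region or not suffix:
        raise ValueError(f"not a zone name: {zone!r}")
    return region


def group_by_region(zones):
    """Group zone names under the region each one belongs to."""
    grouped = {}
    for zone in zones:
        grouped.setdefault(region_of(zone), []).append(zone)
    return grouped


zones = ["us-west1-a", "us-west1-b", "us-west1-c"]
print(group_by_region(zones))
```

A lookup like this can be handy when you record where workloads run and want to reason about them per region rather than per zone.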

Edge POPs

Our edge POPs are where we connect Google’s network to the Internet via peering. We’re present on over 180 internet exchanges and at over 160 interconnection facilities around the world. Google operates a large, global meshed network that connects our edge POPs to our data centers. By operating an extensive global network of interconnection points, we can bring Google traffic closer to our peers, thereby reducing their costs and latency and providing end users with a better experience.

Google directly interconnects with all major Internet Service Providers (ISPs), and the vast majority of traffic from Google’s network to our customers is transmitted via direct interconnections with the client’s ISP.

Cloud CDN

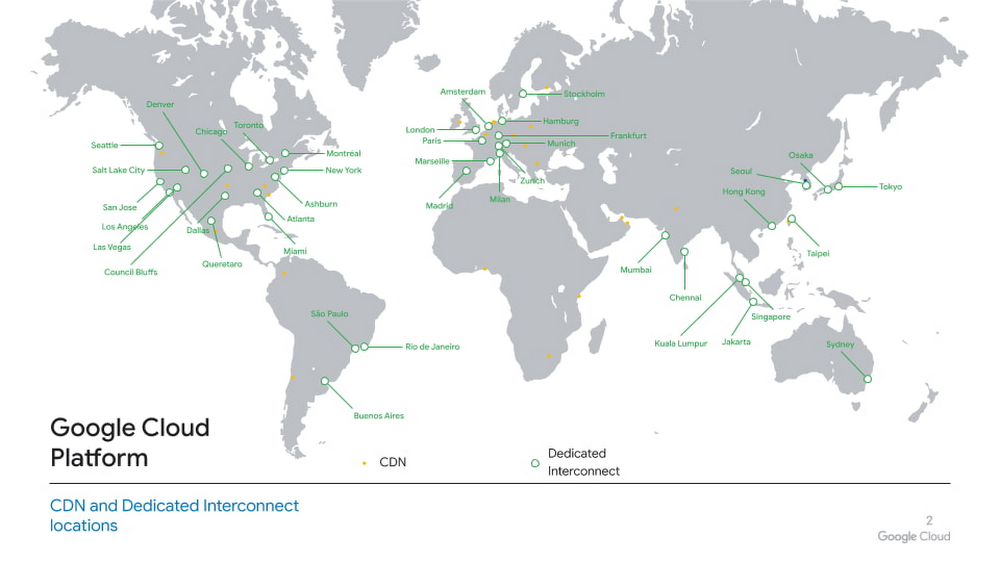

Cloud CDN (Content Delivery Network) uses Google’s globally distributed edge POPs to cache Cloud content close to end users. Cloud CDN relies on infrastructure at edge POPs that Google uses to cache content associated with its own web properties that serve billions of users. This approach brings Cloud content closer to customers and end users, and connects individual POPs into as many networks as possible. This reduces latency and ensures that we have capacity for large traffic spikes (for example, for streaming media events or holiday sales).

Cloud Interconnect POPs

Dedicated Interconnect provides direct physical connections between your on-premises network and Google’s network, which lets you efficiently transfer large amounts of data between the two. For Dedicated Interconnect, your network must physically meet Google’s network in a supported colocation facility, also known as an Interconnect connection location. This facility is where a vendor, the colocation facility provider, provisions a circuit between your network and a Google point of presence. You may also use Partner Interconnect to connect to Google through a supported service provider. Today, you can provision an Interconnect connection to Google Cloud in more than 95 locations.

Edge nodes, or Google Global Cache

Our edge nodes represent the tier of Google’s infrastructure closest to Google’s users, operating from over 1,300 cities in more than 200 countries and territories. With our edge nodes, network operators and ISPs host Google-supplied caches inside their network. Static content that’s popular with the host’s user base (such as YouTube and Google Play) is temporarily cached on these edge nodes, thus allowing users to retrieve this content from much closer to their location. This creates a better experience for users and reduces the host’s overall network capacity requirements.

Region extensions

For certain specialized workloads, such as Bare Metal Solution, Google hosts servers in colocation facilities close to GCP regions to provide low latency (typically <2ms) connectivity to workloads running on Google Cloud. These facilities are referred to as region extensions.
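To see why physical proximity matters for a latency figure like this, here is a rough back-of-the-envelope sketch. It assumes the 2 ms figure is a round-trip time, that the signal travels over optical fiber with a refractive index of about 1.47, and that switching and queuing delay are zero. None of these assumptions come from the post, so treat the result as an upper bound on fiber path length and not as a statement about where region extensions are actually located.

```python
# Speed of light in vacuum, expressed in kilometers per millisecond.
SPEED_OF_LIGHT_KM_PER_MS = 299_792.458 / 1000

# Assumed typical refractive index of silica fiber (not from the post).
FIBER_REFRACTIVE_INDEX = 1.47


def max_fiber_km(round_trip_ms: float) -> float:
    """Upper bound on one-way fiber length for a given round-trip time.

    Ignores equipment and queuing delay, so real paths must be shorter.
    """
    one_way_ms = round_trip_ms / 2
    return one_way_ms * SPEED_OF_LIGHT_KM_PER_MS / FIBER_REFRACTIVE_INDEX


print(round(max_fiber_km(2.0)))  # prints 204
```

Under these assumptions, a 2 ms round trip leaves room for roughly 200 km of fiber at most, and less once real equipment delay is counted.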

To the edge and back

An edge is in the eye of the beholder. Despite this already vast investment in infrastructure, network, and partnerships, we believe that the journey towards the edge has just begun. As Google Cloud expands in reach and capabilities, the landscape of applications is evolving again, with traits such as critical reliability, ultra-low latency, embedded AI, and tight integration and interoperability with 5G networks and beyond. We look forward to driving the future evolution of the network edge as well as edge cloud capabilities. Stay tuned as we continue to roll out new edge sites, capabilities, and services.

We hope this post clarifies Google’s network edge offerings, and how they help connect your applications running in Google Cloud to your end users. For more about Google Cloud’s networking capabilities, check out these Google Cloud networking tutorials and solutions.

By Shweta Jain, Product Manager, Google Cloud

Source: Google Cloud Blog
