Whether in the cloud or within their own data centers, enterprises have undergone a period of remarkable consolidation and centralization of their compute resources. But with the rise of ever more powerful mobile devices and increasingly capable cellular networks, application architects are starting to think beyond the confines of the data center and look out to the edge.

What exactly do we mean by edge? Think of the edge as distributed compute happening on a wide variety of non-traditional devices — mobile phones of course, but also equipment sensors in factories, industrial equipment, or even temperature and reaction monitoring in a remote lab. Edge devices are also connected devices, and can communicate back to the mothership over wireless or cellular networks.

Equipped with increasingly powerful processors, these edge devices are being called upon to perform tasks that have thus far been outside the scope of traditional IT. For enterprises, this could mean pre-processing incoming telemetry in a vehicle, collecting video in kiosks at a mall, gathering quality control data with cameras in a warehouse, or delivering interactive media to retail stores. Enterprises are also relying on edge to ingest data from outposts or devices that have even more intermittent connectivity, e.g., oil rigs or farm equipment, filtering that data to improve quality, reducing it to right-size information load, and processing it in the cloud. New data and models are then pushed back to the edge; in addition, we can also push configuration, software, and media updates and decentralize processing workload.

Edge isn’t all about enabling new use cases – it’s also about right-sizing environments and improving resource utilization. For example, adopting an edge model can also relieve load on existing data centers.

But while edge computing is full of promise for enterprises, there are many pieces that are still works in progress. Further, developing edge workloads is very different from developing traditional applications, which enjoy the benefits of persistent data connections and run on well-resourced hardware platforms. As such, cloud architects are still in the early days of figuring out how to use and implement edge for their organizations.

Fortunately, there are tools that can ease the transition to edge computing and that likely fit into your organization’s existing computing systems. These include Kubernetes, of course, but also higher-level management tools like Anthos, which provides a consistent control plane across cloud, private data center, and edge locations. Other parts of the Anthos family, Anthos Config Management and Anthos Service Mesh, go one step further and provide consistent, centralized management of your edge and cloud deployments. And there’s more to come.

For the remainder of this blog post, we’ll dive deeper into the past and current state of edge computing, and the benefits that architects and developers can expect to see from it. In the next post, we’ll take a deeper look at some of the challenges that designing for edge introduces, and some of the advantages the average enterprise has in adopting the edge model. Finally, we’ll look at the Google Cloud tools that are available today to help you build out your edge environment, and at some early customer examples that highlight what’s possible today and that will spark your imagination for what to do tomorrow.

The evolution of edge computing

The edge is not a new concept. In fact, it’s been around for the last two decades, spanning many use cases that are prevalent today. One of the first applications for edge was to use content delivery networks (CDNs) to cache and serve daily static website pages near clients; for example, financial data hosted on web servers in California data centers could be served to European customers from a cache close to them.

As connectivity has improved and software evolved, the edge has evolved too, and the focus has shifted towards using edge to distribute services. First, simple services expanded from static HTML to JavaScript libraries or image repositories. Common functions like image transformation, and support services such as credit and address validation, followed. Soon, organizations were deploying more complex cloudlet and clustered microservices installations, as well as distributed and replicated datasets. The term “endpoint” became ubiquitous, and APIs proliferated.

In parallel, there’s been an explosion of creativity in hardware, microcontrollers and dedicated edge devices. Fit-for-purpose products were deployed globally. Services like Google Cloud IoT Core extended our ability to manage and securely connect these dispersed devices, allowing platform managers to register tools and leverage managed services like Pub/Sub and Dataflow for data ingestion. And with Kubernetes, large remote clusters — mini private clouds in and of themselves — operate as self-healing, autoscaling services across the broader internet, opening the door to new models for applications and architectural patterns. In short, both distributed asynchronous systems and economies have blossomed.

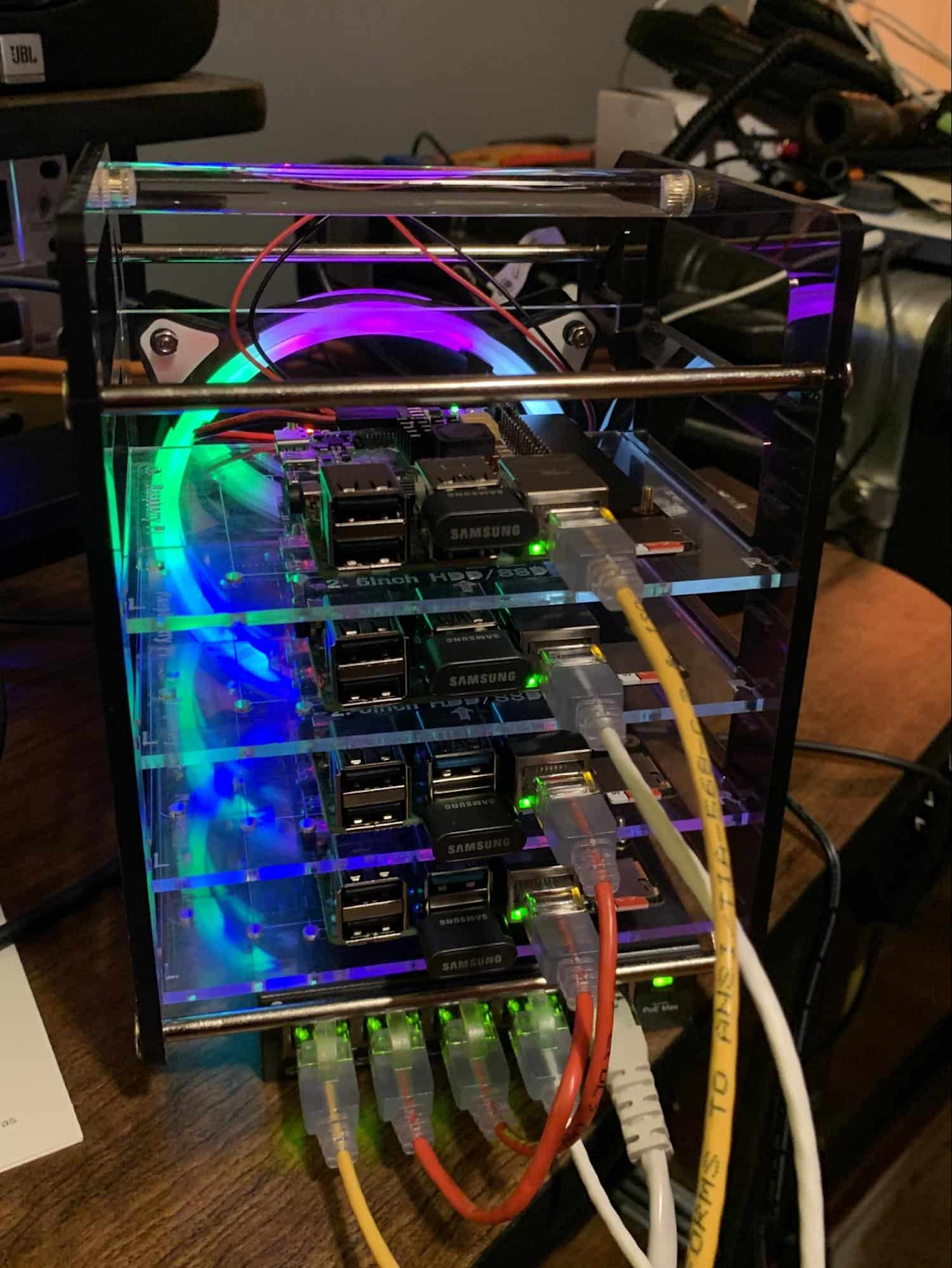

What does this mean for enterprises? For the purposes of this series, edge means you can now go beyond the corporate network, beyond cloud VPCs, and beyond hybrid. The modern edge is not sitting at a major remote data center, nor is it a CDN, cloud provider, or in a corporate data center rack — it’s just as likely to look like 100 of these attached to a thousand sensors.

Edge, in short, is about having hardware and devices installed at remote locations that can process and communicate back the information they collect and generate. The edge management challenge, meanwhile, is being able to push configuration and software/model/media updates to these remote locations when they are connected.
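One common way to cope with the "when they are connected" condition on the device side is a store-and-forward buffer: the device keeps recording locally and drains its backlog whenever the link comes back. The following is a minimal sketch of that idea, not a description of any particular product. The class name `StoreAndForward`, the `send` callback, and the `max_items` limit are all hypothetical names chosen for illustration.

```python
from collections import deque
from typing import Any, Callable


class StoreAndForward:
    """Buffer outbound items locally and deliver them when the link is up.

    `send` is a hypothetical callback that returns True if the item was
    delivered and False if the device is currently disconnected.
    """

    def __init__(self, send: Callable[[Any], bool], max_items: int = 1000):
        self._send = send
        # A bounded deque: once full, the oldest item is dropped so the
        # device never runs out of memory during a long outage.
        self._queue = deque(maxlen=max_items)

    def record(self, item: Any) -> None:
        """Queue an item for later delivery."""
        self._queue.append(item)

    def pending(self) -> int:
        """Number of items still waiting to be delivered."""
        return len(self._queue)

    def flush(self) -> int:
        """Try to deliver queued items in order; stop at the first failure."""
        sent = 0
        while self._queue:
            if not self._send(self._queue[0]):
                break  # still offline; keep the item for the next attempt
            self._queue.popleft()
            sent += 1
        return sent
```

A device would call `record` for every observation and call `flush` on a timer or whenever it detects connectivity. Dropping the oldest items first is only one possible policy; a workload that values early data more would need a different one.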

Enable new use cases

Today, we have reached a new threshold for edge computing — one where micro-data-processing centers are deployed as the edge of a fractal arm, as it were. Together, they form a broad, geographically distributed, always-on framework for streaming, collecting, processing and serving asynchronous data. This big, loosely coupled application system lives, breathes and grows. Always changing, always learning from the data it collects — and always pushing out updated models when the tendrils are connected.

Right now, the rise of 5G is pushing the limits of edge even further. Devices enabled with 5G can transmit using a mobile network — no ISP required — enabling connectivity anywhere within reach of a cell tower. Granted, these networks have lower bandwidth, but they are often more than adequate for certain types of data, for example fire sensors in forests bordering remote towns that emit temperature or carbon monoxide data periodically. Recently, Google Cloud partnered with AT&T to enhance business use of 5G edge technology but there is so much more that can be done.

Reduce data center investments

In addition to enabling the digitization of a broad range of new use cases, adopting edge can also benefit your existing data center.

Let’s face it: data centers are expensive to maintain. Moving some data center load to edge locations can reduce your data center infrastructure investment, as well as compute time spent there. Edge services tend to have much lower service level objectives (SLOs) than data center services, driving lower levels of hardware investment. Edge installations also tend to tolerate disconnectedness, and thus function perfectly well with lower SLOs — and lower costs.

Let’s look at an example of where edge can really reduce costs: big data. Back in the day, we used to build monolithic serial processors — state machines — that had to keep track of where they were in processing in case of failure. But time and again, we’ve seen that smaller, more distributed processing can break down big, expensive problems into smaller, more cost-effective chunks.

Starting with the explosion of MapReduce almost 20 years ago, big-data workloads were parallelized across clusters on a network, and state management was simplified with intermediate output to share, wait for, or restart processing from checkpoints. Those monolithic systems were replaced by cheaper, smarter, networked clusters and data repositories where parallel work could be executed and rendered into workable datasets.

Flash forward to today, and we are seeing those same concepts applied and distributed to edge data-collection points. In this evolution of big data processing, we are scaling up and out to the point where observation data is so massive that it must first be prefiltered, and then preprocessed down to a manageable size while remaining actionable. Only then should it be written back to the main data repositories for more resource-intensive processing and model building.

In short, data collection, cleanup, and potentially initial aggregation happen at the edge location, which reduces the amount of junk data sitting in costly data stores. This increases the performance of the core data warehouse, and reduces the size and cost of network transfers and storage!
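To make the prefilter-then-reduce idea concrete, here is a small sketch of what an edge node might do before sending anything upstream: discard implausible readings, then collapse the rest into one summary row per sensor per time window. Everything in it is an assumption made for illustration, including the `Reading` type, the plausibility bounds, and the 60-second window.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Iterable


@dataclass(frozen=True)
class Reading:
    sensor_id: str
    timestamp: float  # seconds since some epoch
    celsius: float


def is_plausible(r: Reading, low: float = -50.0, high: float = 150.0) -> bool:
    """Prefilter step: reject values outside an assumed physical range."""
    return low <= r.celsius <= high


def summarize(readings: Iterable[Reading], window_s: float = 60.0) -> list:
    """Reduce plausible readings to one summary per (sensor, time window)."""
    buckets = {}
    for r in readings:
        if not is_plausible(r):
            continue  # junk data never leaves the edge
        key = (r.sensor_id, int(r.timestamp // window_s))
        buckets.setdefault(key, []).append(r.celsius)
    return [
        {
            "sensor_id": sensor_id,
            "window_start": window * window_s,
            "count": len(values),
            "mean_c": mean(values),
            "max_c": max(values),
        }
        for (sensor_id, window), values in sorted(buckets.items())
    ]
```

Only the summary rows would be written back to the central repository, so the volume sent over the network scales with the number of sensors and windows rather than with the raw sampling rate.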

The edge is a huge opportunity for today’s enterprises. But designing environments that can make effective use of the edge isn’t without its challenges. Stick around for part two of this series, where we look at some of the architectural challenges typically encountered while designing for the edge and how we begin to address them.

By Joshua Landman, Customer Engineer, Application Modernization Specialist, Google | Praveen Rajagopalan, Customer Engineer, Application Modernization Specialist, Google

Source Google Cloud Blog
