In this blog post, I highlight how you can accelerate your application modernization journey by taking advantage of Anthos on bare metal’s support for running on OpenStack. I also introduce the recently published “Deploy Anthos on bare metal on OpenStack” and “Configure the OpenStack Cloud Provider for Kubernetes” guides, and point out different places to start in them depending on your expertise and your OpenStack environment.

What is OpenStack?

OpenStack is an open source platform that enables you to manage large pools of compute, storage and networking resources. It provides a uniform set of APIs and an interactive dashboard to manage and control your resources. If you have your own bare metal servers, you can install OpenStack on them and expose your hardware resources to others (other teams or external users) so they can provision VMs, load balancers and other compute services. You can think of OpenStack as the equivalent of the software that runs in Google’s data centers and lets our users provision compute resources and services through the Google Cloud Console.

Are you using OpenStack?

Many enterprises that invested early in their own hardware and networking equipment needed a uniform platform to manage their infrastructure. Whilst the computing resources were available, there had to be an easy way to manage them and expose them to higher-level teams in an easily consumable form. OpenStack was one of the very few platforms available to enterprises as early as 2010 to help manage their infrastructure, and it took care of the complexity of scheduling, networking and storage in the bare metal environment. As a result, many of our customers have long used OpenStack to manage their infrastructure. If your enterprise has already invested in its own bare metal servers, storage servers and networking equipment, it is quite likely that you are using OpenStack.

What is Anthos on Bare Metal?

Anthos is Google’s solution for managing your Kubernetes clusters wherever they run: on Google Cloud, on premises and on other cloud providers. Anthos enables you to modernize your applications faster and establish consistency across all your environments. Anthos on bare metal is the flavor of Anthos that lets you run Anthos on physical servers that you manage and maintain. This lets you leverage your existing investments in hardware, platform and networking infrastructure to host Kubernetes clusters, and take advantage of the benefits of Anthos, such as centralized management, increased flexibility and developer agility, even for your most demanding applications. Note that when we say “Anthos clusters”, we mean Kubernetes clusters that are managed by Anthos.

Can Anthos on Bare Metal make your OpenStack workloads cloud native?

The short answer is: yes, it can!

We have learnt from our customers that their investments in their own data centers and large fleets of bare metal servers are very important to them. These investments matter for various reasons, such as data residency requirements, greater control over resources, hardware expenses that are not easy to offset, and existing staff who are well versed in the current environment. One or more of these reasons, or others, may apply to you as well.

However, with the shift toward containerization and cloud native application development, we want to help you take advantage of this application modernization drive whilst continuing to run applications in your OpenStack deployments. We built Anthos to do just that. Anthos on bare metal brings as much of Google Cloud as possible closer to your OpenStack deployment. It lets you run your workloads on your well-tuned OpenStack infrastructure while continuously modernizing your application stack with key Google Cloud features such as service mesh, central configuration management, monitoring and alerting, and more. OpenStack manages your underlying compute resources, and Anthos on bare metal manages the Kubernetes clusters running on that existing infrastructure. To help you get started with Anthos on bare metal on OpenStack, we have recently published two guides.

Install Anthos on Bare Metal on OpenStack

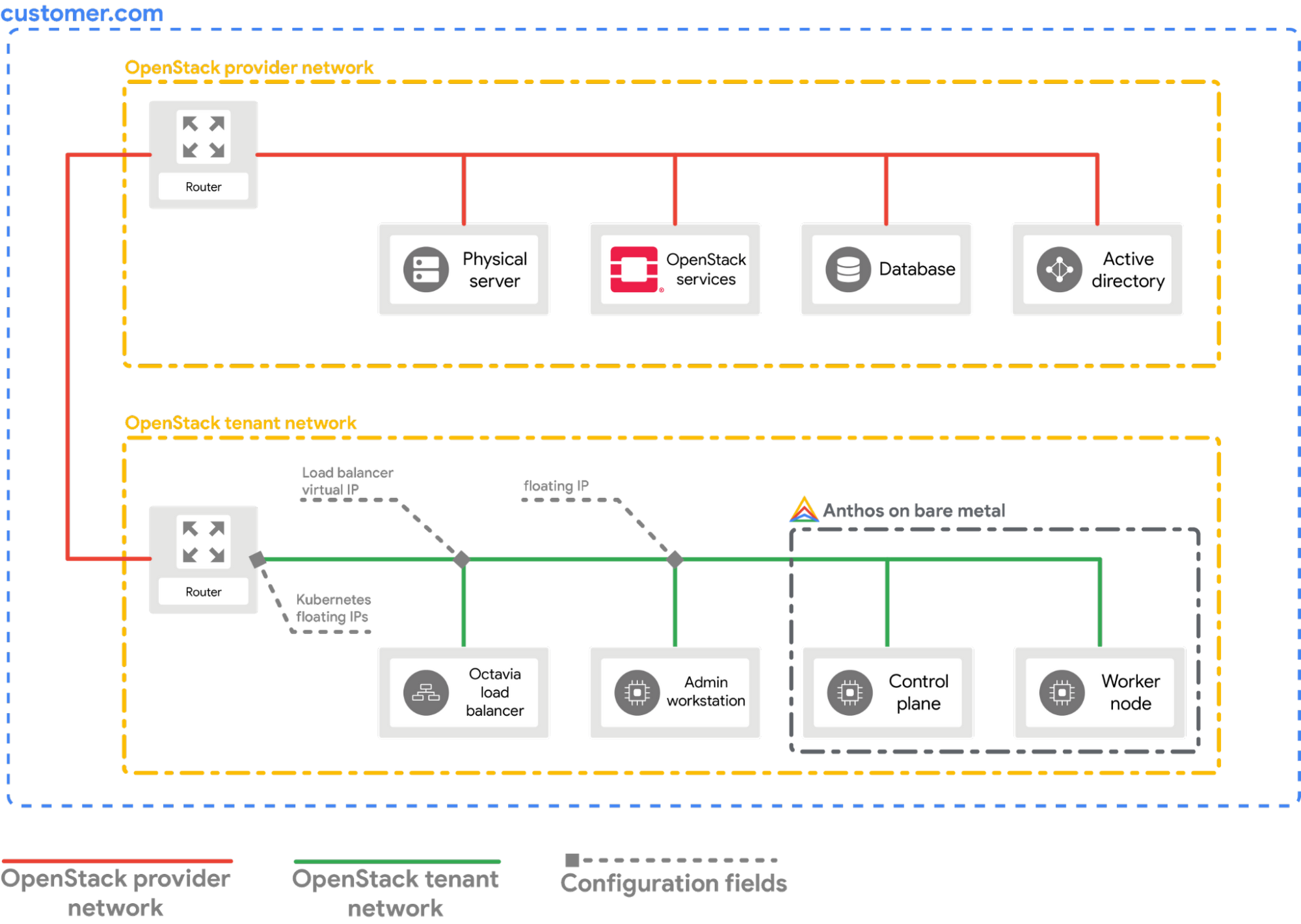

The recently published “Deploy Anthos on bare metal on OpenStack” guide walks you through installing Anthos on bare metal in an existing OpenStack environment. The guide assumes that you have an OpenStack environment similar to the one shown in the diagram above, and shows how to install one hybrid cluster on two OpenStack virtual machines.

Notice that an OpenStack tenant network has been created, which connects to a provider network of this OpenStack deployment. This tenant network serves as the layer 2 network connection between the virtual machines that we use to install Anthos on bare metal. The setup has three virtual machines:

- An admin workstation: this is the virtual machine from which we carry out the installation. As part of installing Anthos on bare metal, a bootstrap cluster is created; this cluster runs on the admin workstation and is deleted once the installation is complete.

- A control plane node: this is the virtual machine that will run the control plane components of the Anthos cluster.

- A worker node: this is the virtual machine that will run the application workloads.

Together, the control plane node and the worker node make up the Anthos on bare metal cluster.

We have also provisioned an OpenStack Octavia load balancer on the same tenant network that the virtual machines are attached to. The load balancer is also connected to the provider network through a router. This load balancer setup is important for the follow-up to this installation guide, where we show how to “Configure the OpenStack Cloud Provider for Kubernetes”. By configuring the OpenStack cloud provider for Kubernetes in our Anthos cluster, we can expose our Kubernetes services outside the tenant network. With the OpenStack cloud provider configured, whenever you create a Kubernetes Service of type LoadBalancer, the Octavia load balancer is used to assign an IP address to that Service, which makes the Service reachable from the external provider network.
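To make the LoadBalancer flow a little more concrete, here is a minimal Python sketch that builds a Kubernetes Service manifest of type LoadBalancer as a plain dictionary and prints it as JSON. The Service name `hello-web`, the `app: hello-web` selector and the port numbers are hypothetical placeholders, not values from the guides. The sketch assumes the OpenStack cloud provider is already configured in the cluster; the code itself only prints the manifest and does not contact any cluster.

```python
import json


def load_balancer_service(name: str, app_label: str, port: int, target_port: int) -> dict:
    """Build a Service manifest of type LoadBalancer (hypothetical example values).

    On a cluster with the OpenStack cloud provider configured, it is the
    `type: LoadBalancer` field that asks the provider for an external IP,
    which would be backed by the Octavia load balancer.
    """
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "LoadBalancer",
            # Route traffic to Pods carrying this label.
            "selector": {"app": app_label},
            # Port exposed by the load balancer -> port the container listens on.
            "ports": [{"protocol": "TCP", "port": port, "targetPort": target_port}],
        },
    }


if __name__ == "__main__":
    manifest = load_balancer_service("hello-web", "hello-web", 80, 8080)
    print(json.dumps(manifest, indent=2))
```

`kubectl apply -f` accepts JSON as well as YAML, so you could save this output to a file and apply it. On a cluster set up as in the guides, the assigned address should then appear in the EXTERNAL-IP column of `kubectl get service hello-web`.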

To make these two guides easy to follow, we have provided all the required building blocks in our public anthos-samples repository. Depending on how much of the setup you already have, you can start at any of the following four stages and continue through to the end:

- You have no OpenStack environment to experiment with: start with the “Deploy OpenStack Ussuri on GCE VM” guide to get an OpenStack environment running on a Google Compute Engine VM.

- You have your own OpenStack deployment but don’t have an environment configured as shown in the diagram: start with the “Provision the OpenStack VMs and network setup using Terraform” guide to configure a setup exactly as shown in the diagram. Because this guide automates the setup with Terraform, you can easily clean up your environment once you are done.

- You have your own OpenStack deployment and have a setup similar to the diagram already configured: you can directly start following the “Deploy Anthos on bare metal on OpenStack” guide.

- You have your own OpenStack deployment, a setup matching the diagram, and Anthos on bare metal installed: follow the “Configure the OpenStack Cloud Provider for Kubernetes” guide to expose Kubernetes services outside the tenant network using the OpenStack Octavia load balancer.
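The choice of starting point can be summarized as a small decision helper. This is a hypothetical Python sketch, not something from the anthos-samples repository: it takes three yes/no answers about your environment and returns the guides you would follow, in order, from your starting stage through to the end.

```python
from typing import List

# Hypothetical helper: the stage names mirror the four guides listed above,
# in the order they are meant to be followed.
STAGES = [
    "Deploy OpenStack Ussuri on GCE VM",
    "Provision the OpenStack VMs and network setup using Terraform",
    "Deploy Anthos on bare metal on OpenStack",
    "Configure the OpenStack Cloud Provider for Kubernetes",
]


def remaining_guides(has_openstack: bool, has_matching_setup: bool,
                     has_anthos_installed: bool) -> List[str]:
    """Return the guides to follow, from the first applicable stage to the last."""
    if not has_openstack:
        start = 0  # no OpenStack environment yet
    elif not has_matching_setup:
        start = 1  # OpenStack exists, but not the VMs/network from the diagram
    elif not has_anthos_installed:
        start = 2  # environment matches the diagram; Anthos not installed yet
    else:
        start = 3  # Anthos installed; only the cloud provider setup remains
    return STAGES[start:]


if __name__ == "__main__":
    # Example: an existing OpenStack deployment without the diagram's setup.
    for guide in remaining_guides(True, False, False):
        print(guide)
```

In the example run, the helper skips the OpenStack-on-GCE stage and lists the remaining three guides, starting with the Terraform provisioning guide.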

If you have to start from the beginning and go through all four stages, I recommend following the “Getting started” guide in the GitHub repository. The steps in the repository do not assume that you have an existing OpenStack environment, and they provide all the details you need to access your services running inside the Anthos cluster, inside the OpenStack virtual machine, inside OpenStack, inside the Google Compute Engine VM, inside Google Cloud. That’s a lot of layers! 🙂

By: Shabir Abdul Samadh (Developer Relations Engineer)

Source: Google Cloud Blog