Sometimes, you need to run a piece of code for hours, days, or even weeks. Cloud Functions and Cloud Run are my default choices to run code. However, they both have limitations on how long a function or container can run. This rules out the idea of executing long-running code in a serverless way.

Thanks to Workflows and Compute Engine, you can have an almost serverless experience with long-running code.

Here’s the idea:

- Containerize the long-running task, so it can run anywhere.

- Plan to run the container on a Compute Engine VM with no time limitations.

- Use Workflows to automate VM creation, running the container on the VM, and VM deletion.

With this approach, you simply execute the workflow and get back the result of the long-running task. The underlying lifecycle of the VM and running of the container are all abstracted away. This is almost serverless!
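The three steps above amount to a create, run, delete sequence. The following Python sketch illustrates only that lifecycle. The callables `create_vm`, `run_task`, and `delete_vm` are hypothetical placeholders supplied by the caller, not Google Cloud APIs. The `try`/`finally` cleanup is an assumption added for illustration; the workflow in this post deletes the VM only on the success path.

```python
from typing import Callable, TypeVar

T = TypeVar("T")


def run_on_temporary_vm(
    create_vm: Callable[[], str],
    run_task: Callable[[str], T],
    delete_vm: Callable[[str], None],
) -> T:
    """Create a VM, run the task against it, and always delete the VM."""
    vm_name = create_vm()
    try:
        return run_task(vm_name)
    finally:
        # Runs whether the task succeeded or raised, so no VM is left behind.
        delete_vm(vm_name)


# Usage with in-memory stand-ins instead of real cloud calls.
events = []
result = run_on_temporary_vm(
    create_vm=lambda: events.append("create") or "prime-generator-vm",
    run_task=lambda name: events.append("run on " + name) or 2836703,
    delete_vm=lambda name: events.append("delete " + name),
)
print(result, events)
```

The caller only sees the returned result, which is the "almost serverless" part: the VM exists just for the duration of the call.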

Let’s look at a concrete example.

Long-running task: Prime number generator

The long-running task for this example is a prime number generator. You can take a look at the source here.

The code implements a deliberately inefficient prime number generator and a simple web API defined in PrimeGenController.cs as follows:

- /start: Starts calculating the largest prime.

- /stop: Stops calculating the largest prime.

- /: Returns the largest prime calculated so far.
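To make the start/stop/read contract concrete, here is a small Python stand-in. It is not the C# code from PrimeGenController.cs; the class name `PrimeGenerator` and its methods are hypothetical and only mirror the three endpoints, with a background thread doing deliberately slow trial division.

```python
import threading
import time
from typing import Optional


def is_prime(n: int) -> bool:
    """Deliberately inefficient: trial division by every smaller integer."""
    if n < 2:
        return False
    for d in range(2, n):
        if n % d == 0:
            return False
    return True


class PrimeGenerator:
    def __init__(self) -> None:
        self._largest = 0
        self._lock = threading.Lock()
        self._stop = threading.Event()
        self._thread: Optional[threading.Thread] = None

    def start(self) -> None:
        """Counterpart of /start: begin searching in the background."""
        if self._thread is not None and self._thread.is_alive():
            return  # already running
        self._stop.clear()
        self._thread = threading.Thread(target=self._work, daemon=True)
        self._thread.start()

    def stop(self) -> None:
        """Counterpart of /stop: signal the worker and wait for it to exit."""
        self._stop.set()
        if self._thread is not None:
            self._thread.join()

    def largest(self) -> int:
        """Counterpart of /: the largest prime found so far (0 if none)."""
        with self._lock:
            return self._largest

    def _work(self) -> None:
        candidate = self.largest() + 1
        while not self._stop.is_set():
            if is_prime(candidate):
                with self._lock:
                    self._largest = candidate
            candidate += 1


generator = PrimeGenerator()
generator.start()
while generator.largest() < 100:  # let it run until it has passed 100
    time.sleep(0.01)
generator.stop()
print(generator.largest())
```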

There’s also a Dockerfile to run it as a container.

You can use gcloud to build and push the container image:

gcloud builds submit --tag gcr.io/$PROJECT_ID/primegen-service

The container's web API is served over HTTP on port 80. Add a firewall rule to your project that allows that traffic:

gcloud compute firewall-rules create default-allow-http --allow tcp:80

Build the workflow

Let’s build the workflow to automate running the container on a Compute Engine VM. The full source is in prime-generator.yaml.

First, read in some arguments, such as the name of the VM to create and the number of seconds to run the VM. The workSeconds argument determines how long to execute the long-running container:

    main:
      params: [args]
      steps:
        - init:
            assign:
              - projectId: ${sys.get_env("GOOGLE_CLOUD_PROJECT_ID")}
              - projectNumber: ${sys.get_env("GOOGLE_CLOUD_PROJECT_NUMBER")}
              - zone: "us-central1-a"
              - machineType: "c2-standard-4"
              - instanceName: ${args.instanceName}
              - workSeconds: ${args.workSeconds}

Next, create a container-optimized VM with an external IP and the right scopes to be able to run the container. Also specify the actual container image to run.

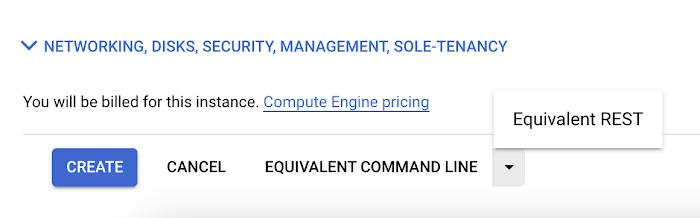

This is the trickiest part of the workflow. You need to figure out the exact parameters of the REST call that creates the Compute Engine VM. One trick is to create the VM manually from the Google Cloud console and then click Equivalent REST to get the REST command with the right parameters.

You can then convert that REST command into YAML for the Workflows Compute Engine connector. You end up with something like this:

        - create_and_start_vm:
            call: googleapis.compute.v1.instances.insert
            args:
              project: ${projectId}
              zone: ${zone}
              body:
                tags:
                  items:
                    - http-server
                name: ${instanceName}
                machineType: ${"zones/" + zone + "/machineTypes/" + machineType}
                disks:
                  - initializeParams:
                      sourceImage: "projects/cos-cloud/global/images/cos-stable-93-16623-39-40"
                    boot: true
                    autoDelete: true
                # Needed to make sure the VM has an external IP
                networkInterfaces:
                  - accessConfigs:
                      - name: "External NAT"
                        networkTier: "PREMIUM"
                # The container to run
                metadata:
                  items:
                    - key: "gce-container-declaration"
                      value: '${"spec:\n  containers:\n  - name: primegen-service\n    image: gcr.io/" + projectId + "/primegen-service\n    stdin: false\n    tty: false\n  restartPolicy: Always\n"}'
                # Needed to be able to pull down and run the container
                serviceAccounts:
                  - email: ${projectNumber + "-compute@developer.gserviceaccount.com"}
                    scopes:
                      - https://www.googleapis.com/auth/devstorage.read_only
                      - https://www.googleapis.com/auth/logging.write
                      - https://www.googleapis.com/auth/monitoring.write
                      - https://www.googleapis.com/auth/servicecontrol
                      - https://www.googleapis.com/auth/service.management.readonly
                      - https://www.googleapis.com/auth/trace.append
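The `gce-container-declaration` metadata value is the hardest line to read in this step, because it is a small YAML document squeezed into one escaped string. As an illustration only, the Python helper below (the name `container_declaration` is hypothetical) builds the unescaped text, assuming the conventional two-space indentation of a container declaration.

```python
def container_declaration(project_id: str, service: str = "primegen-service") -> str:
    """Return the container declaration as plain multi-line text."""
    lines = [
        "spec:",
        "  containers:",
        f"  - name: {service}",
        f"    image: gcr.io/{project_id}/{service}",
        "    stdin: false",
        "    tty: false",
        "  restartPolicy: Always",
    ]
    # The declaration ends with a trailing newline, like the escaped string.
    return "\n".join(lines) + "\n"


declaration = container_declaration("my-project")  # "my-project" is a placeholder
print(declaration)
```

Seeing the declaration laid out this way makes it easier to spot indentation mistakes before escaping it back into the workflow expression.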

Once the VM is created and running, you need to get the external IP of the service and build the start/stop/get URLs for the web API:

        - get_instance:
            call: googleapis.compute.v1.instances.get
            args:
              instance: ${instanceName}
              project: ${projectId}
              zone: ${zone}
            result: instance
        - extract_external_ip_and_construct_urls:
            assign:
              - external_ip: ${instance.networkInterfaces[0].accessConfigs[0].natIP}
              - base_url: ${"http://" + external_ip + "/"}
              - start_url: ${base_url + "start"}
              - stop_url: ${base_url + "stop"}
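The same extraction can be traced in a few lines of Python, which may help when checking the path into the instance resource. The helper name `build_urls` and the sample instance (with an IP from the documentation range) are assumptions for illustration; a real response contains many more fields.

```python
def build_urls(instance: dict) -> dict:
    """Derive the web API URLs from a Compute Engine instance resource."""
    # Same path the workflow uses: first network interface, first access config.
    external_ip = instance["networkInterfaces"][0]["accessConfigs"][0]["natIP"]
    base_url = "http://" + external_ip + "/"
    return {
        "base": base_url,
        "start": base_url + "start",
        "stop": base_url + "stop",
    }


# Trimmed-down sample of what instances.get returns.
sample_instance = {
    "networkInterfaces": [
        {"accessConfigs": [{"name": "External NAT", "natIP": "203.0.113.10"}]}
    ]
}
urls = build_urls(sample_instance)
print(urls)
```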

You can then start the prime number generation and wait for the end condition. In this case, Workflows simply waits for the specified number of seconds. However, the end condition could be based on polling an API in the container or a callback from the container.

        - start_work:
            call: http.get
            args:
              url: ${start_url}
        - wait_for_work:
            call: sys.sleep
            args:
              seconds: ${int(workSeconds)}
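The polling alternative mentioned above can be sketched as follows. This is not part of the workflow in this post: the function `wait_for_prime_at_least`, the target value, and the poll interval are all assumptions. In practice `fetch_largest` would issue an HTTP GET against the container's root endpoint; here it is injected so the logic can be run without a VM.

```python
import time
from typing import Callable


def wait_for_prime_at_least(
    fetch_largest: Callable[[], int],
    target: int,
    poll_seconds: float = 60.0,
    max_polls: int = 1000,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Poll until the reported prime reaches the target, then return it."""
    for _ in range(max_polls):
        largest = fetch_largest()
        if largest >= target:
            return largest
        sleep(poll_seconds)
    raise TimeoutError(f"target {target} not reached after {max_polls} polls")


# Usage with canned readings and a fake sleep that only records its argument.
readings = iter([101, 5003, 2836703])
naps = []
final = wait_for_prime_at_least(
    lambda: next(readings), target=1_000_000, sleep=naps.append
)
print(final, naps)
```

Bounding the number of polls keeps the VM from running indefinitely if the target is never reached.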

When the sleep is done, stop the prime number generation and get the largest calculated prime:

        - stop_work:
            call: http.get
            args:
              url: ${stop_url}
        - get_result:
            call: http.get
            args:
              url: ${base_url}
            result: final_result

Finally, delete the VM and return the result:

        - delete_vm:
            call: googleapis.compute.v1.instances.delete
            args:
              instance: ${instanceName}
              project: ${projectId}
              zone: ${zone}
        - return_result:
            return: ${final_result.body}

Deploy and execute the workflow

Once you’ve built the workflow, you’re ready to deploy it:

WORKFLOW_NAME=prime-generator

gcloud workflows deploy $WORKFLOW_NAME --source=prime-generator.yaml

And then execute the workflow for one hour:

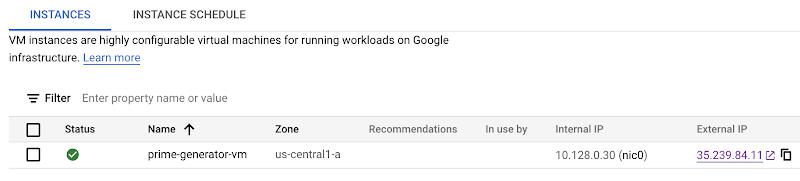

gcloud workflows run $WORKFLOW_NAME --data='{"instanceName":"prime-generator-vm", "workSeconds":"3600"}'

This creates a VM and starts the container.

When the time is up, the VM will be deleted and you will see the results of the calculation:

    result: '"2836703"'
    startTime: '2022-01-24T14:02:34.857760501Z'
    state: SUCCEEDED
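Note the nested quotes in `result`: the value shown is a JSON-encoded string, so it needs one round of JSON decoding before it can be used as a number. A minimal Python sketch, assuming the result always holds a single integer (the helper name `parse_prime_result` is hypothetical):

```python
import json


def parse_prime_result(result_field: str) -> int:
    """Decode the execution's result field into an integer."""
    body = json.loads(result_field)  # '"2836703"' becomes the string "2836703"
    return int(body)


prime = parse_prime_result('"2836703"')
print(prime)
```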

Though it’s not possible today to execute long-running code on Cloud Functions or Cloud Run, you can use Workflows to orchestrate a Compute Engine VM and have code running with no time limits. It’s almost serverless! As always, feel free to reach out to me on Twitter @meteatamel with questions or feedback.

By: Mete Atamel (Developer Advocate)

Source: Google Cloud Blog
