A retailer needs to predict product demand or sales, a call center manager wants to predict call volume in order to hire more representatives, a hotel chain requires occupancy predictions for next season, and a hospital needs to forecast bed occupancy. Vertex Forecast provides accurate forecasts for these and many other business forecasting use cases.

Univariate vs multivariate datasets

Forecasting datasets come in many shapes and sizes.

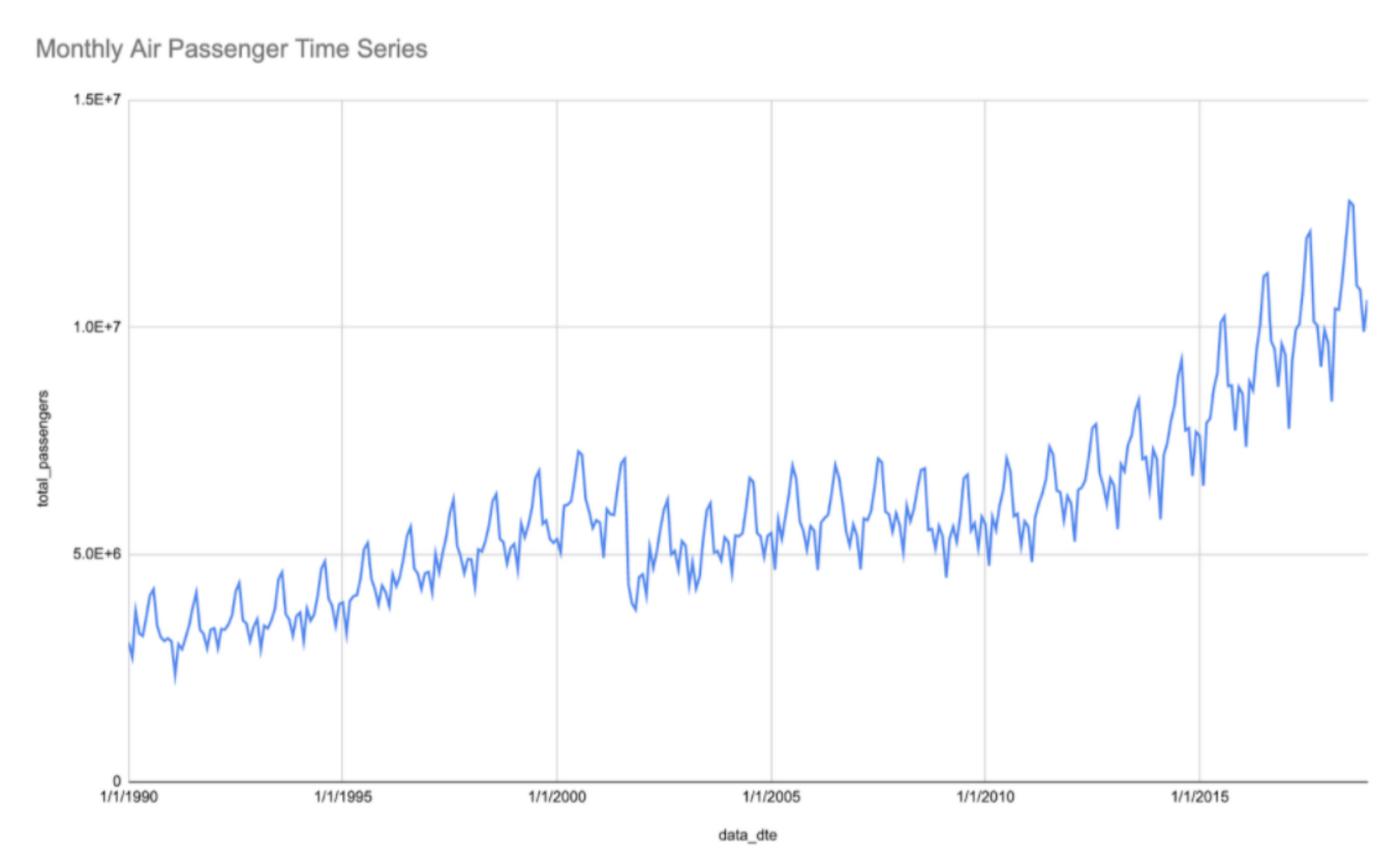

In univariate datasets, a single variable is observed over a period of time. For example, the famous Airline Passenger dataset (Box and Jenkins (1976): Time Series Analysis: Forecasting and Control, p. 531) is a canonical example of a univariate time series dataset. The accompanying graph shows an updated version of this time series, with clear trend variations and seasonal patterns (source: US Department of Transportation).
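To make "trend" and "seasonal pattern" concrete, here is a minimal Python sketch on a small synthetic series (not the airline data). The function name `seasonal_difference` and all the numbers are assumptions chosen for illustration. Subtracting the value from one full season earlier cancels the repeating pattern and leaves only the growth accumulated over that season.

```python
def seasonal_difference(series, period):
    """Subtract the value one full season earlier from each observation."""
    if period <= 0 or period >= len(series):
        raise ValueError("period must be positive and shorter than the series")
    return [series[t] - series[t - period] for t in range(period, len(series))]


# Synthetic series (assumed for illustration): an upward trend of 2 per step
# plus a seasonal pattern that repeats every 4 steps.
seasonal_pattern = [0, 5, -3, 1]
series = [100 + 2 * t + seasonal_pattern[t % 4] for t in range(12)]

# The seasonal pattern cancels out; what remains is the trend gained
# over one season (2 per step * 4 steps = 8).
deseasonalized = seasonal_difference(series, period=4)
print(deseasonalized)  # [8, 8, 8, 8, 8, 8, 8, 8]
```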

More often, business forecasters are faced with the challenge of forecasting large groups of related time series at scale using multivariate datasets. A typical retail or supply chain demand planning team has to forecast demand for thousands of products across hundreds of locations or zip codes, leading to millions of individual forecasts. Infrastructure SRE teams have to forecast consumption or traffic for hundreds or thousands of compute instances and load balancing nodes. Similarly, financial planning teams often need to forecast revenue and cash flow from hundreds or thousands of individual customers and lines of business.
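One common way to hold many related series in a single table is a "long" layout, where each row carries an identifier for the series it belongs to. The sketch below shows that layout under assumed names: the column names `series_id`, `date`, and `sales`, the product and store labels, and the placeholder values are all hypothetical.

```python
from collections import defaultdict
from itertools import product

# Hypothetical catalog: 3 products sold in 2 stores, observed on 2 days.
products = ["sku_a", "sku_b", "sku_c"]
stores = ["store_1", "store_2"]
dates = ["2023-01-01", "2023-01-02"]


def build_long_table(products, stores, dates):
    """Return one row per (product, store, date) with a series identifier."""
    rows = []
    for sku, store in product(products, stores):
        for day in dates:
            # Sales are a placeholder value; real data would go here.
            rows.append({"series_id": f"{sku}|{store}", "date": day, "sales": 0})
    return rows


def group_by_series(rows):
    """Collect the (date, sales) observations belonging to each series."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row["series_id"]].append((row["date"], row["sales"]))
    return dict(grouped)


rows = build_long_table(products, stores, dates)
series_by_id = group_by_series(rows)

# Every product/store combination is its own time series.
print(len(series_by_id), "series,", len(rows), "rows")
```

The number of series grows as products times locations, which is why even a modest catalog quickly turns into a very large forecasting problem.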

Forecasting algorithms

The most popular forecasting methods today are statistical models. Auto-Regressive Integrated Moving Average (ARIMA) models, for example, are widely used as a classical forecasting method, and BigQuery ML offers an advanced ARIMA+ model for forecasting use cases.

BigQuery ML ARIMA+ is well suited to univariate forecasting use cases; see this great tutorial on how to forecast a single time series from the Google Analytics public dataset.
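To illustrate what "auto-regressive" and "integrated" mean, here is a deliberately stripped-down Python sketch of an ARIMA(1,1,0)-style forecast: difference the series once, fit a one-lag regression to the differences by least squares, then add the forecast differences back up. This is not how BigQuery ML's ARIMA+ is implemented; the function names and the sample data are assumptions made for illustration, and the moving-average part is left out entirely.

```python
def difference(series):
    """First difference: the 'integrated' step removes a changing level."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


def fit_ar1(values):
    """Least-squares fit of values[t] = c + phi * values[t - 1]."""
    x, y = values[:-1], values[1:]
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    var_x = sum((xi - mean_x) ** 2 for xi in x)
    if var_x == 0:
        raise ValueError("lagged values are constant; cannot fit a slope")
    cov_xy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    phi = cov_xy / var_x
    c = mean_y - phi * mean_x
    return c, phi


def forecast_arima_110(series, horizon):
    """Forecast by modeling the differences with AR(1), then re-integrating."""
    diffs = difference(series)
    c, phi = fit_ar1(diffs)
    level, last_diff = series[-1], diffs[-1]
    forecasts = []
    for _ in range(horizon):
        last_diff = c + phi * last_diff  # auto-regressive step on the differences
        level += last_diff               # undo the differencing
        forecasts.append(level)
    return forecasts


# Illustrative data whose differences follow d[t] = 1 + 0.5 * d[t - 1].
history = [10, 10, 11, 12.5, 14.25, 16.125]
print(forecast_arima_110(history, horizon=3))
```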

More recently, deep learning models have been gaining a lot of popularity for forecasting applications. For example, the winners of the last M5 competition all used neural networks and ensembles. There is ongoing debate about when to apply which methods, but it’s becoming increasingly clear that neural networks are here to stay for forecasting applications.

Why use deep learning models for forecasting?

Deep learning models owe their recent success in forecasting to the fact that they are Global Forecasting Models (GFMs). Unlike univariate (i.e., local) forecasting models, for which a separate model is trained for each individual time series in a dataset, a deep learning time series forecasting model can be trained simultaneously across a large dataset of hundreds or thousands of unique time series. This allows the model to learn from correlations and metadata across related time series, such as demand for groups of related products or traffic to related websites or apps. While many types of ML models can be used as GFMs, deep learning architectures, such as the ones used for Vertex Forecast, are also able to ingest different types of features, such as text data, categorical features, and covariates that are not known in the future. These capabilities make Vertex Forecast ideal for situations where there are very large and varying numbers of time series, and for use cases like short-lifecycle and cold-start forecasts.
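The difference between local and global models can be sketched without any deep learning at all. The toy Python example below swaps in a one-parameter linear model (each value is a multiple of the previous one) in place of a neural network, purely to show the training setup: local models fit one parameter per series, while the global model pools every series into a single fit. The series names, the data, and the helper functions are assumptions for illustration only.

```python
def lagged_pairs(series):
    """Pair each observation with the one that follows it."""
    return list(zip(series, series[1:]))


def fit_growth_factor(pairs):
    """Least-squares fit of y = phi * x (no intercept) over (x, y) pairs."""
    denominator = sum(x * x for x, _ in pairs)
    if denominator == 0:
        raise ValueError("need at least one pair with a nonzero predictor")
    return sum(x * y for x, y in pairs) / denominator


# Hypothetical demand histories; "new_product" has a single observation.
history = {
    "product_a": [1, 2, 4, 8],
    "product_b": [3, 6, 12],
    "new_product": [5],
}

# Local approach: one model per series. A series with a single observation
# yields no training pairs, so it gets no model at all.
local_models = {
    name: fit_growth_factor(lagged_pairs(values))
    for name, values in history.items()
    if len(values) >= 2
}

# Global approach: pool the training pairs from every series into one fit.
all_pairs = [pair for values in history.values() for pair in lagged_pairs(values)]
global_phi = fit_growth_factor(all_pairs)

# The global model can still forecast the cold-start series.
cold_start_forecast = global_phi * history["new_product"][-1]
print(sorted(local_models), global_phi, cold_start_forecast)
```

The pooled fit is what lets the brand-new series borrow the pattern learned from its neighbors, which is the intuition behind the cold-start use case mentioned above.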

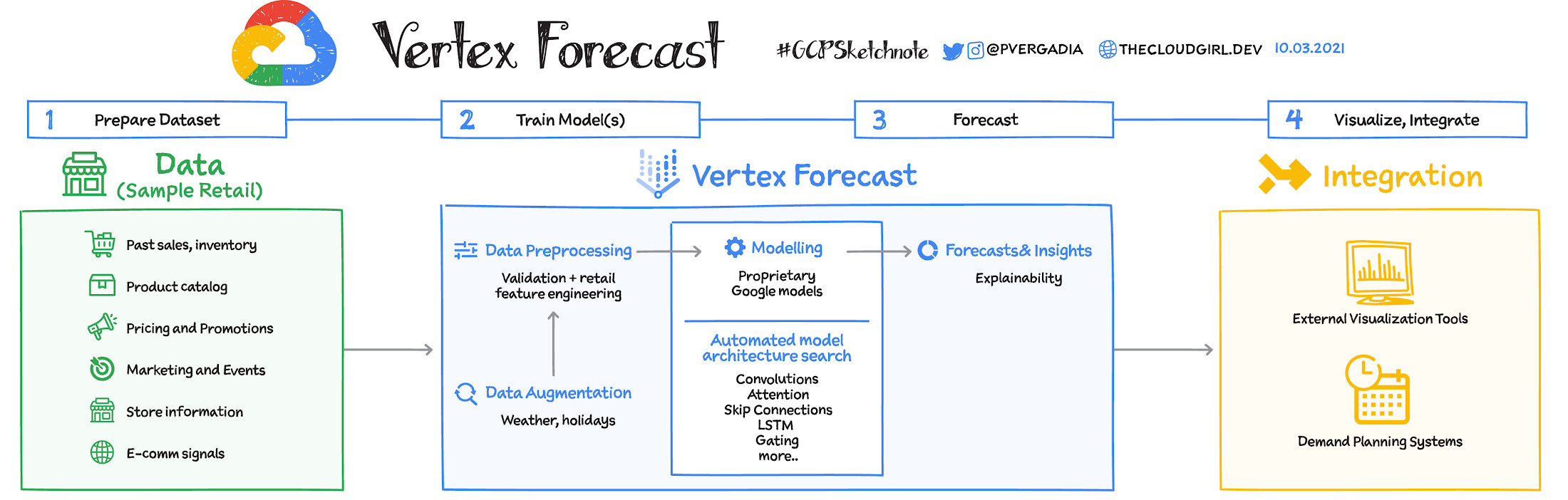

What is Vertex Forecast?

You can build forecasting models in Vertex Forecast using advanced AutoML algorithms for neural network architecture search. Vertex Forecast offers automated preprocessing of your time series data, so instead of fumbling with data types and transformations, you can just load your dataset into BigQuery or Vertex, and AutoML will automatically apply common transformations and even engineer the features required for modeling.

Most importantly, it searches through a space of multiple deep learning layers and components, such as attention, dilated convolution, gating, and skip connections. It then evaluates hundreds of models in parallel to find the right architecture, or ensemble of architectures, for your particular dataset, using time-series-specific cross-validation and hyperparameter tuning techniques. Generic AutoML tools are not suitable for time series model search and tuning, because they induce leakage into the model selection process, leading to significant overfitting.
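The leakage problem comes from validation schemes that shuffle rows, which lets a model train on observations that come after the ones it is evaluated on. One common time-series-aware alternative is rolling-origin evaluation, sketched below in Python. The source does not say which scheme Vertex Forecast uses internally, so treat this as a generic illustration; the function name and parameters are assumptions.

```python
def rolling_origin_splits(n_points, n_folds, horizon):
    """Return (train_indices, test_indices) pairs where training data
    always ends before the test window begins."""
    first_cutoff = n_points - n_folds * horizon
    if first_cutoff < 1:
        raise ValueError("not enough points for the requested folds and horizon")
    splits = []
    for fold in range(n_folds):
        cutoff = first_cutoff + fold * horizon
        train = list(range(cutoff))                    # everything before the cutoff
        test = list(range(cutoff, cutoff + horizon))   # the next `horizon` points
        splits.append((train, test))
    return splits


# 10 time-ordered observations, 3 folds, forecasting 2 steps ahead.
for train, test in rolling_origin_splits(n_points=10, n_folds=3, horizon=2):
    print("train up to index", train[-1], "-> test", test)
```

Because every test index is later than every training index in the same fold, model selection never gets to peek at the future.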

This process requires lots of computational resources, but the trials are run in parallel, dramatically reducing the total time needed to find the model architecture for your specific dataset. In fact, it typically takes less time than setting up traditional methods.

Best of all, by integrating Vertex Forecast with Vertex Workbench and Vertex Pipelines, you can significantly speed up experimentation with and deployment of global forecasting models, reducing the time required from months to just a few weeks. You can also quickly extend your forecasting capabilities from basic time series inputs to complex unstructured and multimodal signals.

For a more in-depth look into Vertex Forecast check out this video.

For more #GCPSketchnote, follow the GitHub repo. For similar cloud content, follow me on Twitter @pvergadia and keep an eye on thecloudgirl.dev.

By: Priyanka Vergadia (Developer Advocate, Google)

Source: Google Cloud Blog
