We recently supported an organization that wanted to expose its Google Kubernetes Engine (GKE) backend behind Apigee X. This is a common architecture that many users delivering modern web applications on Google Cloud build upon.

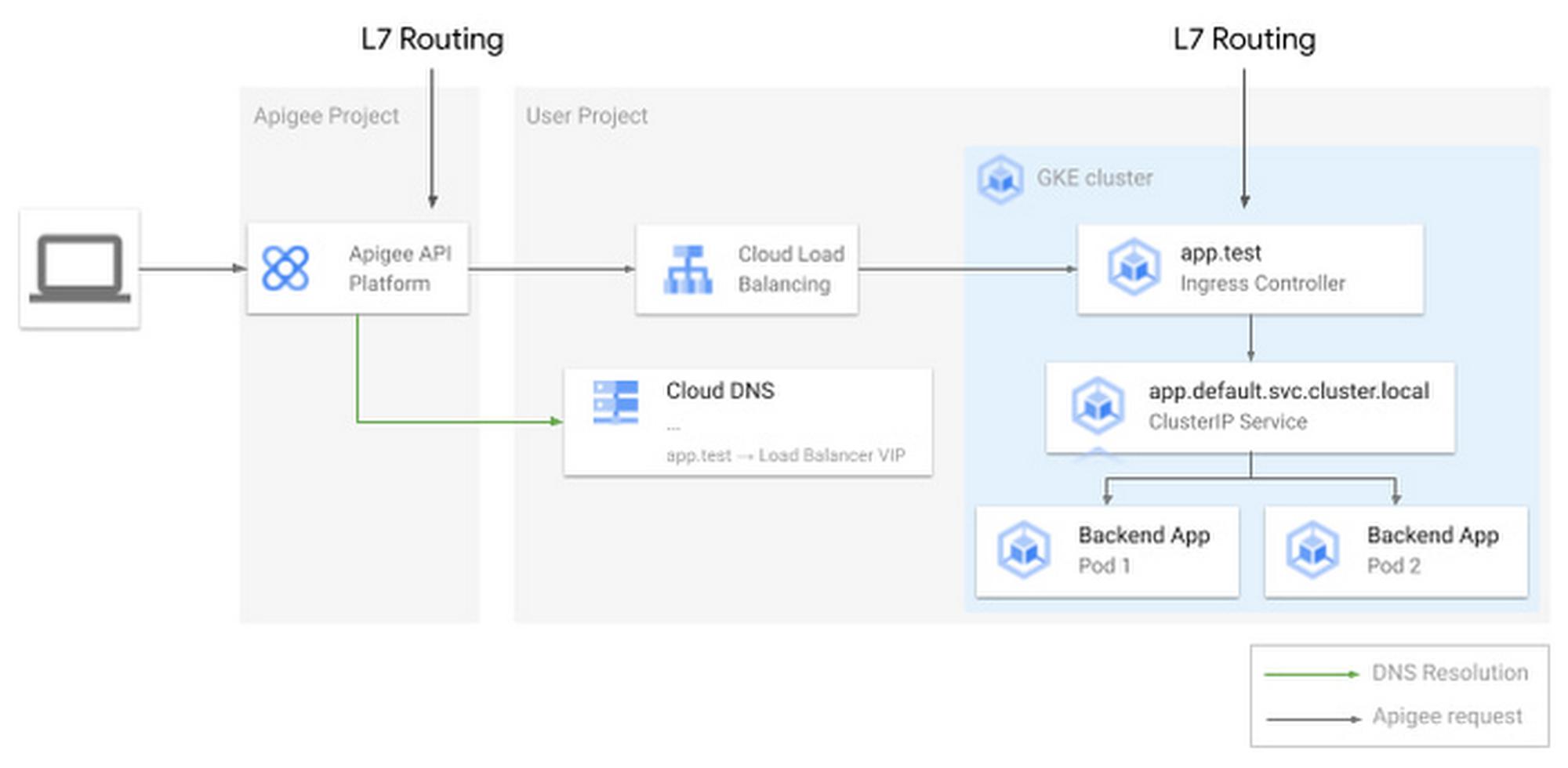

In this scenario, Google’s API gateway, Apigee, receives requests and performs L7 routing, sending each request to the correct backend application, which runs as one or more pods on GKE.

Performing L7 routing in Apigee is not just advantageous, it’s necessary. It is the job of the API gateway to route requests based on a combination of hostnames, URIs (and more), and to apply authentication and authorization mechanisms through native policies.

When the organization asked how to expose GKE applications internally to Apigee, it was natural to recommend using Kubernetes ingress or gateways. These objects allow sharing the same GCP load balancer between multiple applications and perform L7 routing, so the requests are sent to the right Kubernetes pod.

Isn’t this fantastic? We no longer need one load balancer per service, so companies spend less and avoid hitting limits as they scale the infrastructure.

On the other hand, the system is performing L7 routing twice: once in Apigee and once in Kubernetes. This may increase latency and add management overhead, because you need to configure the mapping between hostnames, URIs, and backends twice: once in Apigee and once in GKE.

Is there a way to avoid this? It turns out that a combination of recently released Google Cloud features provides what is needed to do the job.

What we describe in this article is currently only a proof of concept, so it should be carefully evaluated. Before describing the end-to-end solution, let’s look at each building block and its benefits.

VPC-native GKE clusters

Google Cloud recently introduced VPC-native GKE clusters. One of the interesting features of VPC-native GKE clusters is that they use VPC alias IP ranges for pods and cluster IP services. While cluster IPs remain routable within the cluster only, pod IPs also become reachable from the other resources in your VPC (and from the interconnected infrastructure, like other VPCs or on-premises). Even though this is possible, clients shouldn’t reference pod IPs directly, as they are intrinsically dynamic. Kubernetes services are a much better alternative, as the Kubernetes DNS registers a well-known, structured record every time you create one.

Kubernetes headless services

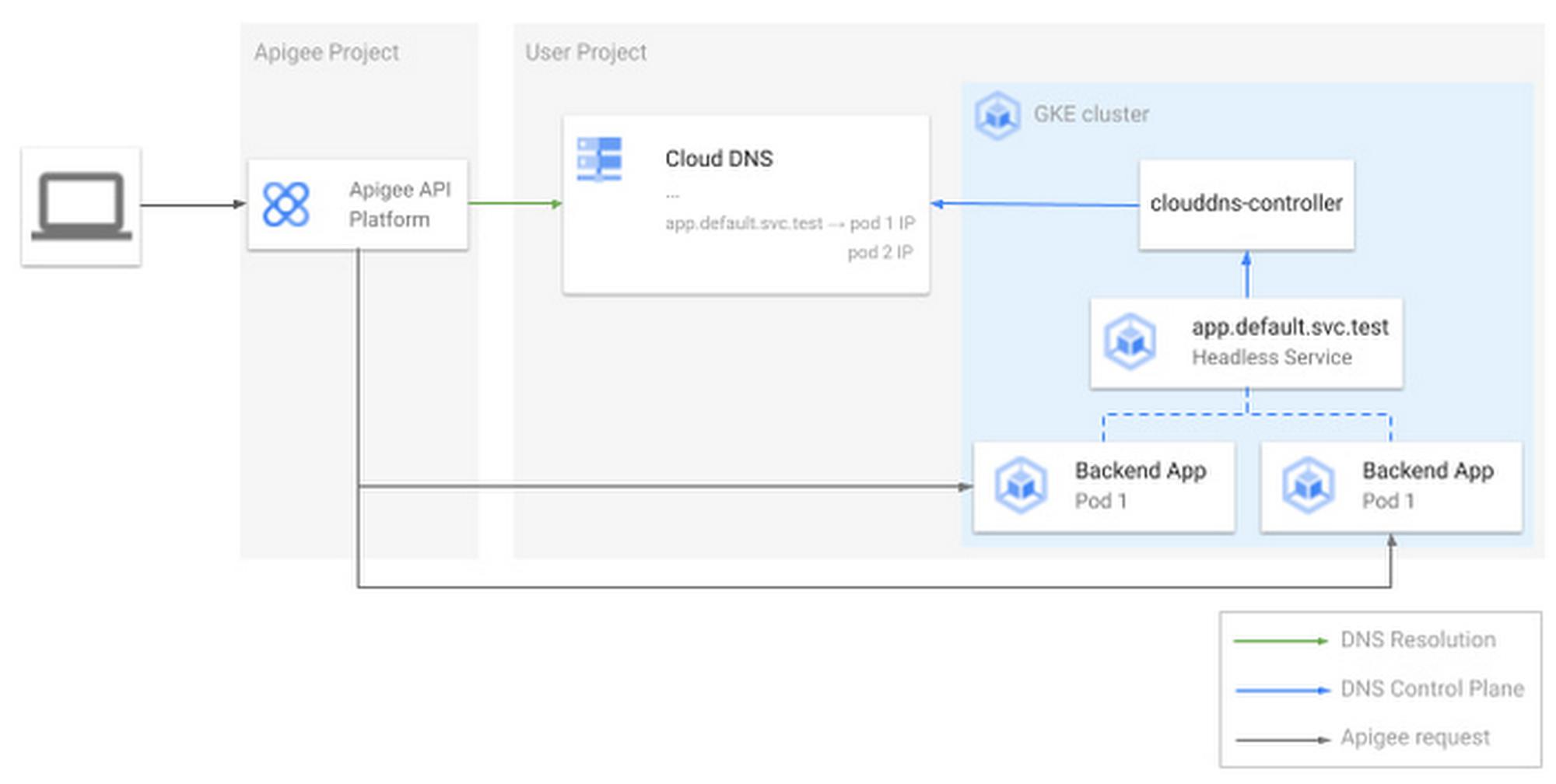

As introduced earlier, we need to create Kubernetes services (and therefore DNS entries) that directly reference the pod IPs. This is exactly what Kubernetes headless services do. A headless service selects pods just as any other Kubernetes service does, but the cluster DNS binds the service DNS record to the pod IPs instead of to a dedicated service IP (as it does for ClusterIP services). Now the question is how to make the internal Kubernetes DNS available to external clients as well, so that they can query the headless service record and always get the right pod IPs, even as the pods scale in and out.
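To make the idea concrete, here is a minimal sketch of a headless service manifest, built as a Python dictionary and printed as JSON. The service name `backend`, the namespace `apis`, the `app` label, and port 8080 are hypothetical values chosen for illustration and are not taken from the demo repository. The only field that makes the service headless is `clusterIP: "None"`.

```python
import json


def headless_service(name: str, namespace: str, app_label: str, port: int) -> dict:
    """Build a headless Service manifest for the pods labelled app=<app_label>."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # "None" tells Kubernetes not to allocate a service IP, so the
            # service DNS record points at the pod IPs instead.
            "clusterIP": "None",
            "selector": {"app": app_label},
            "ports": [{"name": "http", "port": port, "targetPort": port}],
        },
    }


# Hypothetical names, used only for illustration.
manifest = headless_service("backend", "apis", "backend", 8080)
print(json.dumps(manifest, indent=2))
```

Since `kubectl apply -f -` accepts JSON as well as YAML, the printed output could be piped straight into it.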

GKE and Cloud DNS integration

GKE uses kube-dns as the default cluster Domain Name Service, but you can optionally integrate GKE with Cloud DNS. While this is normally done to circumvent kube-dns scaling limitations, it also turns out to be very useful for our use case. Configuring Cloud DNS for GKE with VPC scope allows clients outside the cluster to directly query the entries registered by the cluster in Cloud DNS.

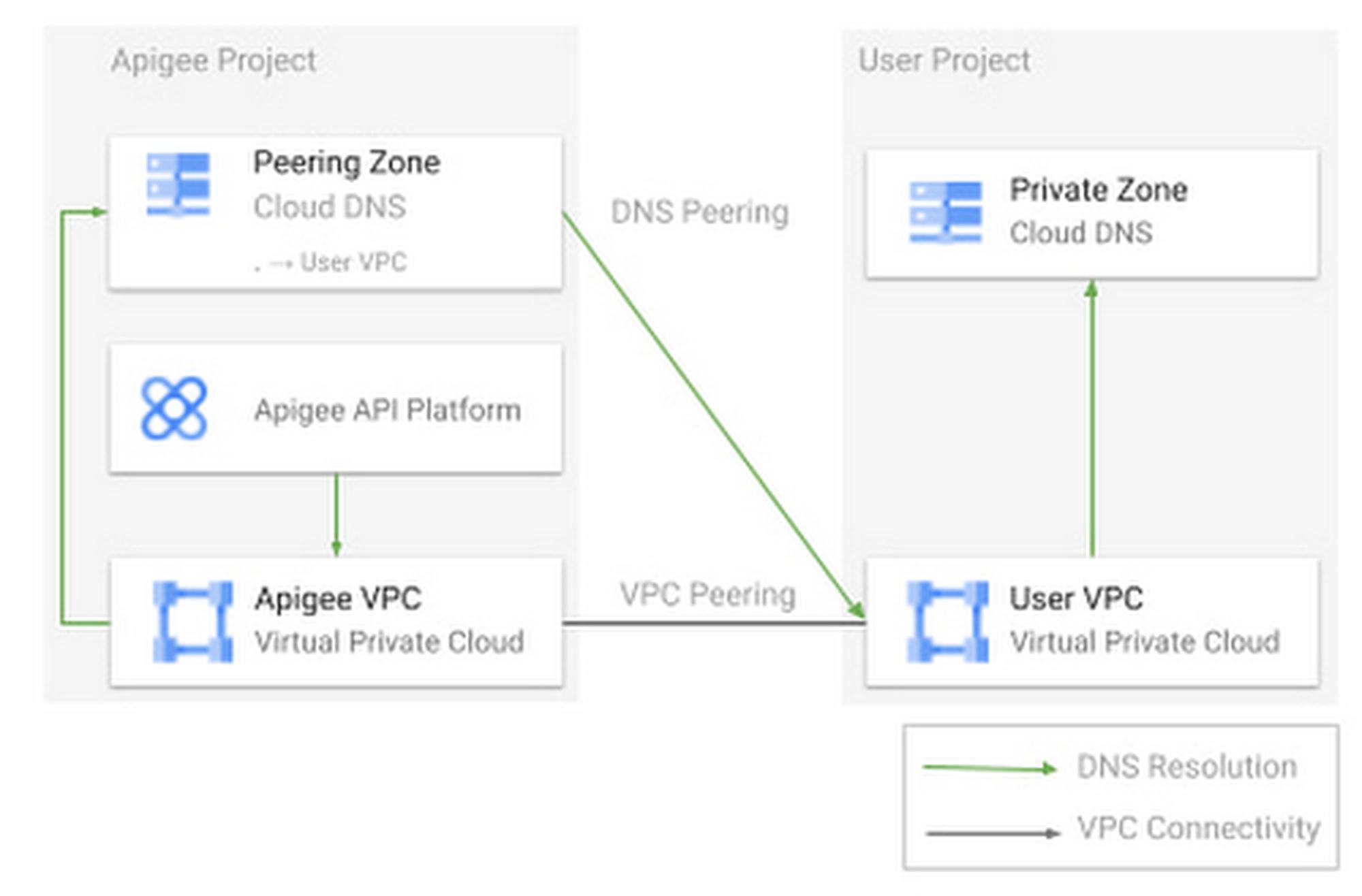
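As a rough illustration of what a client outside the cluster would do, the sketch below builds the name of a headless service and defines a helper that looks it up through the system resolver. It assumes records follow the usual `<service>.<namespace>.svc.<cluster-domain>` layout. The cluster domain `cluster.example`, the service name, and the namespace are hypothetical placeholders.

```python
import socket


def headless_service_fqdn(service: str, namespace: str, cluster_domain: str) -> str:
    """Compose the DNS name of a service (assumed <svc>.<ns>.svc.<domain> layout)."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


def resolve_pod_ips(fqdn: str, port: int) -> list[str]:
    """Return the distinct IPv4 addresses behind fqdn, or [] if it does not resolve."""
    try:
        infos = socket.getaddrinfo(
            fqdn, port, family=socket.AF_INET, type=socket.SOCK_STREAM
        )
    except socket.gaierror:
        return []
    # Each entry is (family, type, proto, canonname, (address, port)).
    return sorted({info[4][0] for info in infos})


# Hypothetical names, used only for illustration.
fqdn = headless_service_fqdn("backend", "apis", "cluster.example")
print(fqdn)
```

Called from a VM in the same VPC as the cluster, `resolve_pod_ips(fqdn, 8080)` would be expected to return one address per ready pod. From anywhere else the name does not resolve and the helper returns an empty list.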

Apigee DNS peering

Apigee is the client that needs to communicate with the backend applications running on GKE. This means that, in the model we discussed above, it also needs to query the DNS entry to get in touch with the right pod. Living in a dedicated Google-managed project and VPC, Apigee needs to have DNS peering in place between its project and the user VPC. This way, it will gain visibility of the same DNS zones your VPC has visibility of, including the one managed by GKE. All of this can be easily achieved with a dedicated command.
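The text above does not name the command. The sketch below assumes it is `gcloud services peered-dns-domains create` with its `--network` and `--dns-suffix` flags, so please check the gcloud reference before relying on it. The peering name, the network name, and the DNS suffix are hypothetical. The code only assembles and prints the command line; it does not execute anything.

```python
import shlex


def dns_peering_command(name: str, network: str, dns_suffix: str) -> list[str]:
    """Assemble (but do not run) the assumed gcloud DNS peering invocation."""
    return [
        "gcloud", "services", "peered-dns-domains", "create", name,
        f"--network={network}",        # the user VPC that Apigee is peered with
        f"--dns-suffix={dns_suffix}",  # the domain whose queries are forwarded
    ]


# Hypothetical values, used only for illustration.
cmd = dns_peering_command("gke-cluster-zone", "my-vpc", "cluster.example.")
print(shlex.join(cmd))
```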

Putting pieces together

Let’s summarize what we have:

- A VPC-native GKE cluster using Cloud DNS as its DNS service (configured in VPC scope)

- A backend application running on GKE (in the form of one or more pods)

- A headless service pointing to the pod(s)

- Apigee configured to direct DNS queries to the user VPC

When a request comes in, Apigee reads the Target Endpoint value and queries Cloud DNS to get the IP of the application pod. Apigee reaches the pod directly, with no need for additional routing to be configured on the K8s cluster.
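On the Apigee side, the Target Endpoint simply points at the headless service name. The sketch below generates a minimal `TargetEndpoint` definition with Python's standard XML library. The backend URL reuses hypothetical names (`backend`, `apis`, `cluster.example`, port 8080), and a real proxy would normally carry more configuration than this.

```python
import xml.etree.ElementTree as ET


def target_endpoint_xml(name: str, backend_url: str) -> str:
    """Render a minimal Apigee TargetEndpoint that forwards to backend_url."""
    endpoint = ET.Element("TargetEndpoint", {"name": name})
    connection = ET.SubElement(endpoint, "HTTPTargetConnection")
    ET.SubElement(connection, "URL").text = backend_url
    return ET.tostring(endpoint, encoding="unicode")


# Hypothetical backend URL: the DNS name of the headless service.
xml_doc = target_endpoint_xml(
    "default", "http://backend.apis.svc.cluster.example:8080"
)
print(xml_doc)
```

Note that the URL contains no IP address and no ingress hostname. The mapping from name to pod is left entirely to DNS.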

If you’re not interested in exposing secure backends to Apigee (using SSL/TLS certificates), you can stop reading here and go through the repository to give it a try.

Exposing secure backends

You may also want to encrypt the communication end-to-end, not only from the client to Apigee, but all the way to the GKE pod. This means that the corresponding backends need to present certificates to Apigee.

SSL/TLS offloading is one of the main tasks of ingress and gateway objects, but it comes at the extra cost of maintaining an additional layer of software and defining L7 routing configurations in the cluster. That is exactly what we wanted to avoid, and the reason why we came up with this proof of concept.

Fortunately, other well-established Kubernetes APIs and tools can help you to achieve this goal.

Cert-manager is a popular open source tool used to automate the certificate lifecycle. Users can either create certificates from an internal Certificate Authority (CA) or request certificates from another CA outside the cluster. Through certificate objects and issuers, users can request TLS key pairs for pods running in the cluster and manage their renewal.
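As an illustration, a cert-manager `Certificate` object for a backend could look like the sketch below, again expressed as a Python dictionary printed as JSON. The certificate name, the namespace, the DNS name, and the `ClusterIssuer` called `internal-ca` are hypothetical. cert-manager would store the resulting key pair in the Kubernetes secret named by `secretName`.

```python
import json


def certificate(name: str, namespace: str, dns_name: str, issuer: str) -> dict:
    """Build a cert-manager Certificate requesting a key pair for dns_name."""
    return {
        "apiVersion": "cert-manager.io/v1",
        "kind": "Certificate",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # Secret that will hold tls.crt and tls.key once issued.
            "secretName": f"{name}-tls",
            "dnsNames": [dns_name],
            "issuerRef": {"name": issuer, "kind": "ClusterIssuer"},
        },
    }


# Hypothetical names, used only for illustration.
cert = certificate(
    "backend", "apis", "backend.apis.svc.cluster.example", "internal-ca"
)
print(json.dumps(cert, indent=2))
```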

While using cert-manager alone would be sufficient to make pods expose SSL certificates, it would require you to attach certificates manually to the pods. This is just a repetitive action that can certainly be automated using MutatingAdmissionWebhooks.

To further demonstrate the viability of the solution, the second part of our exercise consisted of writing and deploying a Kubernetes mutating webhook. When you create a pod, the webhook automatically adds a sidecar container running a reverse proxy that presents the application’s TLS certificates (previously generated through cert-manager and mounted in the sidecar container as Kubernetes volumes).
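The core of such a webhook can be sketched in a few lines. This is not the code from our demo, only a simplified illustration of the mechanism: given an `AdmissionReview` request for a pod, it answers with a JSON Patch that appends a sidecar container and a volume backed by the certificate secret. The sidecar name, the `nginx:stable` image, the port, the mount path, and the secret name are all hypothetical, and the HTTPS server that a real webhook needs is left out.

```python
import base64
import json


def sidecar_patch(pod: dict, secret_name: str) -> list[dict]:
    """Return JSON Patch operations adding a TLS proxy sidecar and its volume."""
    volume = {"name": "tls", "secret": {"secretName": secret_name}}
    sidecar = {
        "name": "tls-proxy",      # hypothetical container name
        "image": "nginx:stable",  # hypothetical reverse proxy image
        "ports": [{"containerPort": 8443}],
        "volumeMounts": [{"name": "tls", "mountPath": "/etc/tls", "readOnly": True}],
    }
    ops = [{"op": "add", "path": "/spec/containers/-", "value": sidecar}]
    if pod.get("spec", {}).get("volumes"):
        # Append to the existing list of volumes.
        ops.append({"op": "add", "path": "/spec/volumes/-", "value": volume})
    else:
        # No volumes yet: create the list, since "-" needs an existing array.
        ops.append({"op": "add", "path": "/spec/volumes", "value": [volume]})
    return ops


def admission_response(review: dict, secret_name: str) -> dict:
    """Wrap the patch in an AdmissionReview response for the API server."""
    request = review["request"]
    patch = json.dumps(sidecar_patch(request["object"], secret_name)).encode()
    return {
        "apiVersion": "admission.k8s.io/v1",
        "kind": "AdmissionReview",
        "response": {
            "uid": request["uid"],  # must echo the request uid
            "allowed": True,
            "patchType": "JSONPatch",
            "patch": base64.b64encode(patch).decode(),
        },
    }


# A trimmed-down, hypothetical admission request for a single-container pod.
review = {"request": {"uid": "1234", "object": {"spec": {"containers": [{"name": "app"}]}}}}
response = admission_response(review, "backend-tls")
print(json.dumps(json.loads(base64.b64decode(response["response"]["patch"])), indent=2))
```

The API server applies the patch before the pod is persisted, so the application manifest itself never has to mention the proxy or the certificate.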

Conclusions, limitations and next steps

In this article, we proposed a new way to connect Apigee and GKE backends, so that you won’t have to perform L7 routing in both components. We think this will likely help you save time (by managing far fewer configurations) and achieve better performance.

Collaborations are welcome. We really value your feedback and new ideas that may bring useful inputs to the project, so please give it a try. We released our demo as open source. You’ll learn more about GKE, Apigee, and all the tools and configurations we talked about above.

We’re definitely aware of some limitations and conscious of some good work that the community may benefit from, moving forward:

- When Cloud DNS is integrated with GKE, it sets a default Time To Live (TTL) of 10 seconds for all records. If you try to change this value manually, the Cloud DNS GKE controller will periodically override it, putting the default value back. High TTL values may prevent clients from reaching pods that Kubernetes recently scaled. We’re working with the product team to understand whether this value can be made configurable.

- On the other hand, using very low TTLs may significantly increase the number of Cloud DNS queries, and therefore costs.

- We definitely look forward to adding support for other reverse proxies, such as Envoy or Apache HTTP Server. Contributions are always very welcome. If you’d like to contribute but don’t know where to start, don’t hesitate to contact us or to open an issue directly in the repository.
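The TTL trade-off in the list above can be put into rough numbers. The sketch below assumes a resolver that caches each record for exactly its TTL and refreshes it as soon as it expires, which gives an upper bound on queries per day for each resolver and record. Real traffic depends on how clients and intermediate resolvers cache, so treat these figures as an order of magnitude only.

```python
def max_queries_per_day(ttl_seconds: int, resolvers: int = 1, records: int = 1) -> int:
    """Upper bound on daily lookups if every cache entry is refreshed once per TTL."""
    seconds_per_day = 24 * 60 * 60
    return (seconds_per_day // ttl_seconds) * resolvers * records


for ttl in (10, 60, 300):
    print(f"TTL {ttl:>3}s -> at most {max_queries_per_day(ttl)} queries/day per resolver and record")
```

With the 10-second default, one resolver may look up a single record up to 8640 times a day. At 60 seconds that drops to 1440, and at 300 seconds to 288, at the price of slower convergence when pods scale.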

We believe this use case is not uncommon, so we decided to jump on it and give it a spin. We don’t know how far this journey will take us, but it has definitely been instructive and fun, and we hope it will be for you too.

By: Federico Preli (Cloud Consultant, Google Cloud) and Luca Prete (Cloud Consultant, Google Cloud)

Source: Google Cloud Blog
