Security is a top priority for Google Cloud, and we protect our customers through how we design our infrastructure and services and how we work. Googlers created some of the fundamental components of containers, like cgroups, and we were an early adopter of containers for our internal systems. We realized we needed a way to increase the security of this technology. This led to the development of gVisor, a container security sandbox that we have since open sourced and integrated into multiple Google Cloud products. When a recent Linux kernel vulnerability was disclosed, users of these products were not affected because they were protected by gVisor.

The latest container escape

While auditing the 5.7 kernel release, an employee of Palo Alto Networks recently discovered a Linux kernel vulnerability that could be used for “container escapes.” Containers share the same host kernel, which is one of the properties that allow them to be densely packed and highly portable. A container escape is a category of vulnerability in containerized systems where an unauthorized user, typically through privilege escalation, gains access to the host system. This gives the attacker an entry point for whatever they’d like to do next, such as data exfiltration or cryptomining. (You can learn more container security fundamentals in this ebook, “Why Container Security Matters to Your Business.”)

This vulnerability, CVE-2020-14386, can be exploited by a process that holds the Linux CAP_NET_RAW capability to corrupt kernel memory, allowing an attacker to gain root access they should not have. In Docker, the most commonly used container format with Kubernetes, the CAP_NET_RAW capability is enabled by default. This means that “out of the box,” your Kubernetes deployment, or the infrastructure of your serverless applications, could be compromised by this vulnerability. Even if your security team has told you to disable some of these default capabilities, CAP_NET_RAW is commonly used by networking tools such as ping and tcpdump, and may have been re-enabled for troubleshooting purposes!
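To check whether a given container actually holds CAP_NET_RAW, one option is to read the effective capability mask that Linux exposes in /proc/self/status. The Go program below is a minimal sketch of that check, not an official tool: the helper names parseCapEff and hasCapability are made up for illustration, and the only kernel facts it relies on are the CapEff line format and CAP_NET_RAW being capability number 13.

```go
package main

import (
	"bufio"
	"fmt"
	"os"
	"strconv"
	"strings"
)

// capNetRaw is the bit index of CAP_NET_RAW in a Linux capability mask.
const capNetRaw = 13

// hasCapability reports whether bit capIndex is set in mask.
// (Hypothetical helper, named for this example only.)
func hasCapability(mask uint64, capIndex uint) bool {
	return mask&(uint64(1)<<capIndex) != 0
}

// parseCapEff extracts the effective capability mask from text in the
// format of /proc/<pid>/status, where the mask appears as a hex number
// on a line starting with "CapEff:".
func parseCapEff(status string) (uint64, error) {
	scanner := bufio.NewScanner(strings.NewReader(status))
	for scanner.Scan() {
		line := scanner.Text()
		if strings.HasPrefix(line, "CapEff:") {
			hex := strings.TrimSpace(strings.TrimPrefix(line, "CapEff:"))
			return strconv.ParseUint(hex, 16, 64)
		}
	}
	if err := scanner.Err(); err != nil {
		return 0, err
	}
	return 0, fmt.Errorf("CapEff line not found")
}

func main() {
	data, err := os.ReadFile("/proc/self/status")
	if err != nil {
		fmt.Println("cannot read /proc/self/status (is this Linux?):", err)
		return
	}
	mask, err := parseCapEff(string(data))
	if err != nil {
		fmt.Println("cannot parse capabilities:", err)
		return
	}
	fmt.Printf("CapEff=%#x, CAP_NET_RAW present: %v\n",
		mask, hasCapability(mask, capNetRaw))
}
```

Run inside a container, this reports whether the current process could open raw sockets at all. If it prints true for a workload that never needs ping or tcpdump, the capability is a candidate for removal.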

Mitigating CVE-2020-14386 with gVisor

If you saw the Google Kubernetes Engine security bulletin, you may have noticed a line you hadn’t seen before: “Pods running in GKE Sandbox are not able to leverage this vulnerability.” If you’re a user of Cloud Run, Cloud Functions or App Engine standard environment, you are protected from this vulnerability as well, and will not have experienced any service disruptions or been issued patching instructions. All of these platforms use gVisor to securely “sandbox” workloads, which protected users from this vulnerability.

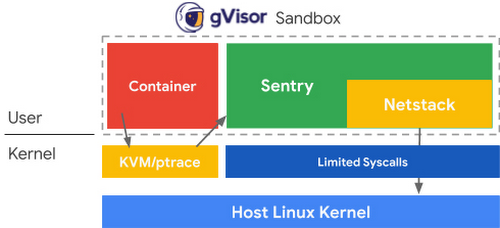

gVisor takes inspiration from a common principle in security: you should have multiple distinct layers of protection, and those layers should not be susceptible to the same kinds of compromises. Containers rely on namespaces and cgroups as their primary layer of isolation; gVisor then introduces a second layer by handling syscalls through the Sentry (a kernel written in Go) that emulates Linux in userspace. This significantly reduces the number of syscalls allowed to reach the host kernel, and thereby reduces the attack surface. In addition to the isolation provided by the Sentry, gVisor uses its own TCP/IP stack, Netstack, for yet another layer of protection.

In this case, the vulnerability is first hindered by having CAP_NET_RAW disabled by default. However, even if enabled, the vulnerability does not exist for gVisor: the problematic C code in Linux is not used in the gVisor networking stack. More importantly, this kind of attack—the exploitation of out-of-bounds array writes—is much less likely in the Sentry and its networking stack, thanks to the use of Go. You can read a technical deep dive on how gVisor mitigates this vulnerability here.

Making security a priority

Taking a step back, Linux is a fundamentally complex and evolving system, and security is thus an ongoing challenge. As a professor at UC Berkeley in 1996, I first worked on intercepting syscalls to improve Linux security and it remains an important approach. The Dune system later showed how to use virtualization hardware to intercept syscalls, leading essentially to a “virtual process” rather than a “virtual machine.” However, as with the earlier work, it then forwarded calls to the normal Linux kernel, and attackers could thus still reach the underlying kernel.

In contrast, gVisor actually implements the Linux syscalls directly in Go. Although it still makes some use of the underlying kernel, gVisor is never a direct passthrough of adversary-controlled data. In some sense gVisor is really a safe (small) version of Linux. Because Go is type- and memory-safe, huge classes of classic Linux problems, such as buffer overflows and out-of-bounds array writes, just disappear. The implementation is also orders of magnitude smaller, which further improves security.
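The memory-safety point can be made concrete with a small Go sketch. It is a toy illustration, not gVisor code: the writeAt helper and the buffer layout are invented for this example. It shows that an out-of-range index in ordinary Go (code that does not use the unsafe package) triggers a runtime panic instead of silently overwriting adjacent memory, which is the failure mode an out-of-bounds write has in C.

```go
package main

import "fmt"

// writeAt stores v at buf[i]. If i is out of range, the Go runtime
// panics before any memory is touched; the deferred function turns
// that panic into an ordinary error. (Hypothetical helper, named for
// this example only.)
func writeAt(buf []byte, i int, v byte) (err error) {
	defer func() {
		if r := recover(); r != nil {
			err = fmt.Errorf("rejected write at index %d: %v", i, r)
		}
	}()
	buf[i] = v
	return nil
}

func main() {
	// One backing array: the first 4 bytes stand in for a packet
	// buffer, the rest for unrelated neighbouring data.
	backing := make([]byte, 8)
	packet := backing[:4:4] // length and capacity both limited to 4

	// In-range write succeeds.
	fmt.Println("in-range write error:", writeAt(packet, 2, 0xff))

	// Index 6 exists in the backing array, but not in the slice the
	// caller was given, so the runtime refuses the write.
	fmt.Println("out-of-range write error:", writeAt(packet, 6, 0xff))

	// The neighbouring byte was never modified.
	fmt.Println("neighbouring byte:", backing[6])
}
```

A bug of this shape still exists in the program, but it surfaces as a contained failure in the sandbox process rather than as corrupted kernel memory that an attacker can steer.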

However, the gVisor approach introduces tradeoffs, and there are currently downsides to picking this more secure path. The first downside is that gVisor will always have semantic differences from “real” Linux, although it is close enough to execute the vast majority of applications in practice. The rise of containers helps on this front, as it has led to less interest in distro specifics and more demand for portability. And Linux has done an incredible job on API stability, so the semantics are stable and well defined.

The second downside is that intercepting syscalls has performance overhead for workloads that are I/O intensive (based more on the number of calls than the amount of data). This will of course improve over time, but it is a factor for some applications. Many applications should prefer stronger security, but clearly not all do.

My hope is that Linux and the security community can get to a place where the user doesn’t have to sacrifice performance for security. To make this a reality, open-source communities are going to have to prioritize security in upstream design in the kernel and other core open-source projects. Efforts like the Open Source Security Foundation make me hopeful that we can solve this together.

Protecting your cloud-native applications

In the meantime, we’re committed to making the “secure” thing to do the easy thing to do. At Google Cloud, we offer you the ability to use gVisor for your Google Kubernetes Engine (GKE) cluster with GKE Sandbox, and have built gVisor into the infrastructure that runs our serverless services App Engine, Cloud Run and Cloud Functions. In the case of GKE, added layers of defense are only clicks away, and for Cloud Run and App Engine, users get these added layers of protection without having to do anything!

If you’re running on GKE Sandbox, your pods are not affected by this vulnerability. However, as part of your security best practices, you should still upgrade to protect system containers that run on all nodes. If you are not a GKE Sandbox user, your first step is to upgrade your control plane and nodes to one of the versions listed in the GKE security bulletin, and then follow the recommendations for removing CAP_NET_RAW through Policy Controller, Gatekeeper, or PodSecurityPolicy.

Your next step is to enable GKE Sandbox. As a managed service, GKE Sandbox handles the internals of running open-source gVisor for you; there are no changes needed to your applications, and adding defense-in-depth to your pods is just a matter of a few clicks.

Whether your applications run in containers or serverlessly, get started with GKE or Google Cloud’s serverless solutions to get the security benefits of gVisor.
