Isaac Newton said, “If I have seen farther than others it is because I have stood on the shoulders of giants.” He meant that in order to explain the Law of Gravity, he used the work of major thinkers who came before him in order to make intellectual progress (as-in giving credit). In the 1640’s (yes, the time of the English Civil War and the start of the mini Ice Age), we can say the Age of Reason began and the word “data” was also (re)born in a way.

Why? Hard to assert a specific reason, it could be apropos of all those events of that decade, and somewhere amidst all that, the scientists and Churchmen (rather Churchman, Henry Hammond who really coined the term data) started to pen down their credit in books and there was a continued proliferation of data to reason their finding (and most times to reason why their work was better than that of the others). And thus comes to us the word datum from the Latin verb dare (it means “to give”, not the English dare).

From our partners:

Dare to Recap History?

Data is history captured through language (it has become the future as well, but that is for another day). Now we all like history (well, most of us). But it is highly likely the context gets lost in the complexity and style of definition. One way to mitigate that risk is to have a clear set of definitions (language), sustained hold of events (history), a clean process of capture (extract) and a scalable process for translation and aggregation (transform). If we want our data to be successful and rise to the occasion, then we need to keep these ways to mitigate the risk of complexity in mind.

And this is exactly what we discussed in the Part 1 of this blog series, Data Modeling Basics—the various business attributes, technical aspects, design questions, and considerations for designing your database model.

In this blog…

We will look into the different databases and storage options in Google Cloud, a brief note on each one of them, when to choose one over the other, interesting alternatives, exceptions and if you make it to the end of the blog, a fun challenge to make sure we put this little tech nugget to an ACID test (see what I did there?). If you are a cloud enthusiast, a database practitioner, a data geek, or a general wonderer of life with computing, you may find this engaging…

Google Cloud Storage Options

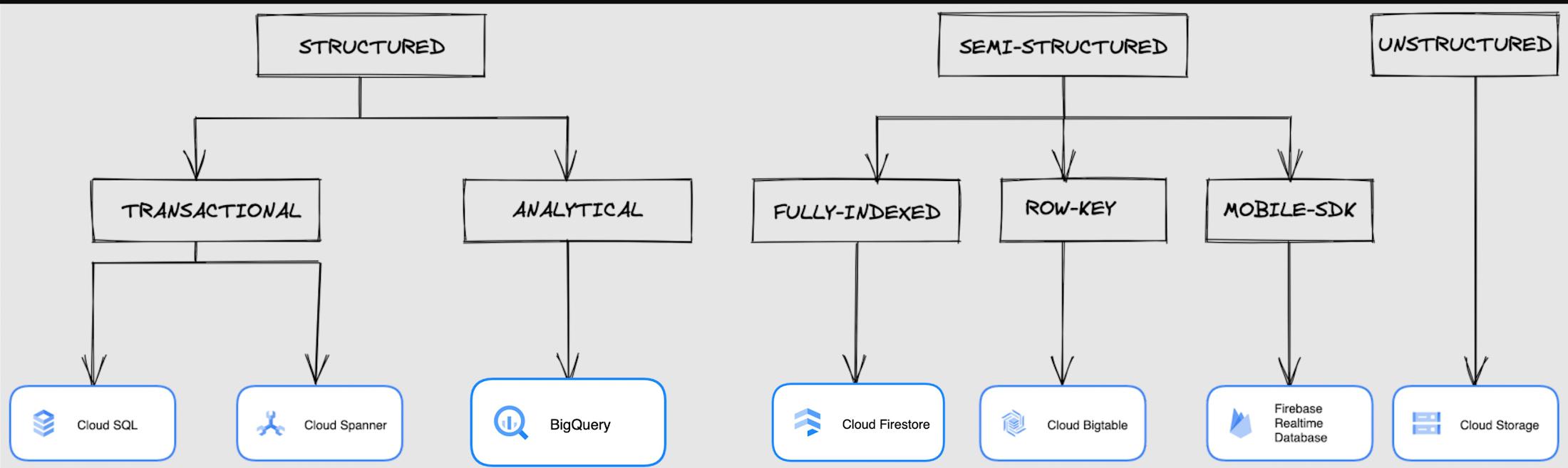

We at Google Cloud, have realized how hard it is to go through these laundry list assessment aspects and have made it simpler for you with a Decision Tree. (Of course, It ain’t Christmas if not for the tree):

If only the world was always “Structured”

In a structured world, you will know all the attributes on a first-name basis (I mean to say that you will have a well defined fixed set of attributes that can be modeled in a table of rows and columns), and the applications are transactional or analytical in orientation. Transactional Structured Data operate one row at a time generally and they need to adhere to ACID compliance. (Ah. Now you connect the dots, if not already.) ACID properties are Atomicity, Consistency, Isolation, and Durability. Cloud SQL and Cloud Spanner are our Google Cloud choices for Transactional Structured Data use cases.

Let’s look at the below aspects for each type and structure of data:

- Why that option? (highlights and key features)

- When to choose?

- When not to choose?

- Security aspects

Cloud SQL

- Fully Managed, cloud-native RDBMS (Relational DataBase Management System) that offers both MySQL, PostgreSQL, SQL Server engines

- Cloud SQL is accessible from apps running on App Engine, GKE, or Compute Engine

Note: A managed database is one that does not require as much administration and operational support (creating databases, performing backups, updating the operating system of database instances) as an unmanaged database.

When to use Cloud SQL?

- Typical online transaction processing (OLTP) workloads

- Lift and shift of on-premise SQL databases (or from anywhere else) to cloud

- Regional applications that do not need to store > 30 TB of data in a single instance

When not to use Cloud SQL?

Cloud SQL is not an appropriate storage system for online analytical processing (OLAP) workloads or data that requires dynamic schemas on a per-object basis.

Security

Data stored is encrypted both in transit and at rest. Have built-in support for access control, using network firewalls to manage database access.

Cloud Spanner

- Relational, horizontally scalable, global database with strong consistency

- Supports schemas, ACID transactions, and SQL queries (ANSI 2011)

- Scales horizontally in regions, but can also scale across regions for workloads that have more stringent availability requirements

When to use Cloud Spanner?

- For large amounts of data and when you require high transactional consistency

- When you require sharding for higher throughput, access and low latency

When not to use Cloud Spanner?

Cloud Spanner is not an appropriate storage system for online analytical processing (OLAP) workloads

Security

Security features in Spanner include data-layer encryption, audit logging, and Identity and Access Management (IAM) integration.

Analytical Structure is when we want the data to tell us an aggregated or enhanced story, for which we use limited columns and multiple rows and hence mostly use a Column-Oriented storage mechanism. Column-oriented storage is if we want to store the data in the tables by columns instead of by rows, and this column-oriented storage is done to efficiently access only a subset of columns for querying. BigQuery is the data warehouse option for analytics needs.

BigQuery

- BigQuery is a fully managed Data Warehouse for analytics with built-in data transfer service

- Peta-byte scale, low-cost warehouse that supports loading data through the web interface, command line tools, and REST API calls

- Incorporates features for machine learning, business intelligence, and geospatial analysis that are provided through BigQuery ML, BI Engine, and GIS.

Note: A data warehouse stores large quantities of data for query and analysis instead of transactional processing.

When to use BigQuery?

For use cases that cover process analytics and optimization, big data (Petabyte scale) processing and analytics, data warehouse modernization, machine learning-based behavioral analytics, and predictions

When not to use BigQuery?

BigQuery is not a Transactional database and is oriented on running analytical queries, not for simple CRUD operations and queries.

Security

BigQuery provides encryption at rest and in transit. Cloud Data Loss Prevention (Cloud DLP) can be used to scan the BigQuery tables and to protect sensitive data and meet compliance requirements. BigQuery supports access control of datasets and tables using Identity and Access Management (IAM).

And then we have the Semi-structured and the Unstructured world of data that we will address in the below sections.

Cloud Firestore (Cloud Datastore)

Firestore is the next major version of Datastore and a re-branding of the product. Taking the best of Datastore and the Firebase Realtime Database, Firestore is a NoSQL document database built for automatic scaling, high performance, and ease of application development.

- A fully managed, serverless NoSQL Google Cloud database designed for the development of serverless apps that stores JSON data

- Can be used to store, sync, and query data for web, mobile, and IoT applications

- Automatically handles sharding and replication making it highly available, durable, and scalable

- Provides ACID transactions, SQL-like queries, indexes, and more

- If a client does not have network connectivity, the Firestore API lets your app persist data to a local disk and synchronizes itself with the current server state once connectivity is reestablished

When to use?

For use cases of app development, live synchronization, offline support, multi-user collaborative applications, leader board, etc.

When not to use?

Not a relational database so not meant for relational structured data use cases.

Security

Firestore Security Rules support serverless authentication and authorization for the mobile and web client libraries. Identity and Access Management (IAM) manages database access.

Cloud Bigtable

- Bigtable is a wide-column, fully managed, high-performance NoSQL database service designed for terabyte- to petabyte-scale workloads

- Bigtable is battle tested on Google internal Bigtable database infrastructure that powers Google Search, Google Analytics, Google Maps, and Gmail

- Provides consistent, low-latency, and high-throughput storage for large-scale NoSQL data

When to use?

- For large amounts of single key data and is preferable for low-latency, high throughput workloads

- For real-time app serving workloads and large-scale analytical workloads

When not to use?

While Bigtable is considered an OLTP system, it doesn’t support multi-row transactions, SQL queries or joins. For those use cases, consider either Cloud SQL or Datastore.

Security

- All the data at rest in Cloud Bigtable is encrypted using Google’s default encryption, by default.

- Instead of Google managing the encryption keys that protect your data, your Bigtable instance can also be protected using a key that you manage (customer-managed encryption keys (CMEK)) in Cloud Key Management Service (Cloud KMS).

Cloud Storage

- Google Cloud Storage is an object storage system that is durable and highly available, persists unstructured data like images, videos, data files, videos, backup, and other data

- It is unstructured and so the files in the cloud storage are atomic that you read the entire file but you cannot access specific blocks in the files

- Cloud Storage is available in multiple classes, depending on the availability and performance required for apps and services

- Standard – Offers the highest levels of availability and is appropriate for storing data that requires low-latency access

- Nearline – Low-cost, highly durable, fast-access storage service for storing data that you access less than once per month

- Coldline – Very-low-cost, highly durable, fast-access storage service for storing data that you intend to access less than once per quarter

- Archive – Lowest-cost, highly durable, fast-access storage service for storing data that you intend to access less than once per year

Security

Files in Cloud Storage are organized by project into individual buckets. These buckets can support either custom access control lists (ACLs) or centralized identity and access management (IAM) controls.

Firebase Realtime Database

- Firebase is a realtime, NoSQL, Google Cloud database that is a part of the Firebase platform that allows you to store and sync data in real-time and includes caching capabilities for offline use

- Data is stored as JSON and synchronized in real-time to every connected client and remains available when app goes offline

When to use?

For mobile and web app development, development of apps that work across devices

When not to use?

Not in relational dataset use cases. The Realtime Database is a NoSQL database and as such has different optimizations and functionality compared to a relational database. The Realtime Database API is designed to only allow operations that can be executed quickly.

Security

The Realtime Database provides a flexible, expression-based rules language, called Firebase Realtime Database Security Rules, to define how your data should be structured and when data can be read from or written to. When integrated with Firebase Authentication, developers can define who has access to what data, and how they can access it.

That’s a rather packed read. But I hope you find this useful to understand comprehensively the basics of data, storage options and databases in Google Cloud Platform.

Next Steps, before I go…

In the blog part 1 of the series, I ended with an action item – “How would you model a NoSQL solution for an application that needs to query the lineage between individual entities that are represented in pairs?”.

Well, my answer is Firestore. As part of this episode, why don’t you take some time to go over the options and key aspects that attribute to this.

By: Abirami Sukumaran (Developer Relations Engineer)

Source: Google Cloud Blog

For enquiries, product placements, sponsorships, and collaborations, connect with us at [email protected]. We'd love to hear from you!

Our humans need coffee too! Your support is highly appreciated, thank you!