When you provision workloads in the cloud to serve an application, placing a load balancer (LB) in front of the application or service is strongly recommended. Load balancers direct user requests to backends (instance groups, network endpoint groups, Cloud Storage, etc.) that have the capacity to serve them.

Load balancing in Google Cloud is a fully scalable, distributed and redundant managed service offered in different flavors such as global external, regional external, and regional internal. To learn what cloud load balancing is, refer to this blog post.


Here, we’ll focus on global external HTTP(S) load balancing. There are two modes of this:

- Global external HTTP(S) load balancer. This managed service is built on Google Front Ends (GFEs). It is the latest version of the global external HTTP(S) load balancer and uses the open-source Envoy proxy to support advanced traffic management capabilities such as traffic mirroring, weight-based traffic splitting, and request/response-based header transformations. To learn more, refer to the External HTTP(S) LB with Advanced Traffic Management (Envoy) Codelab.

- Global external HTTP(S) load balancer (classic). This managed service is built on Google Front Ends (GFEs). It is global with the Premium network service tier and regional with the Standard network service tier (the difference between the Premium and Standard tiers is discussed further in this blog).

As mentioned above, the global external HTTP(S) load balancer is the newer version of the external HTTP(S) load balancer, with advanced traffic management. However, from a design point of view, it is recommended to identify the targeted use case and the required features before you decide which option to choose. For more information about the supported load balancing features, refer to the “Load balancer features” and “External HTTP(S) Load Balancing use cases” documents. This blog discusses these two modes of Google Cloud global external HTTP(S) load balancing.

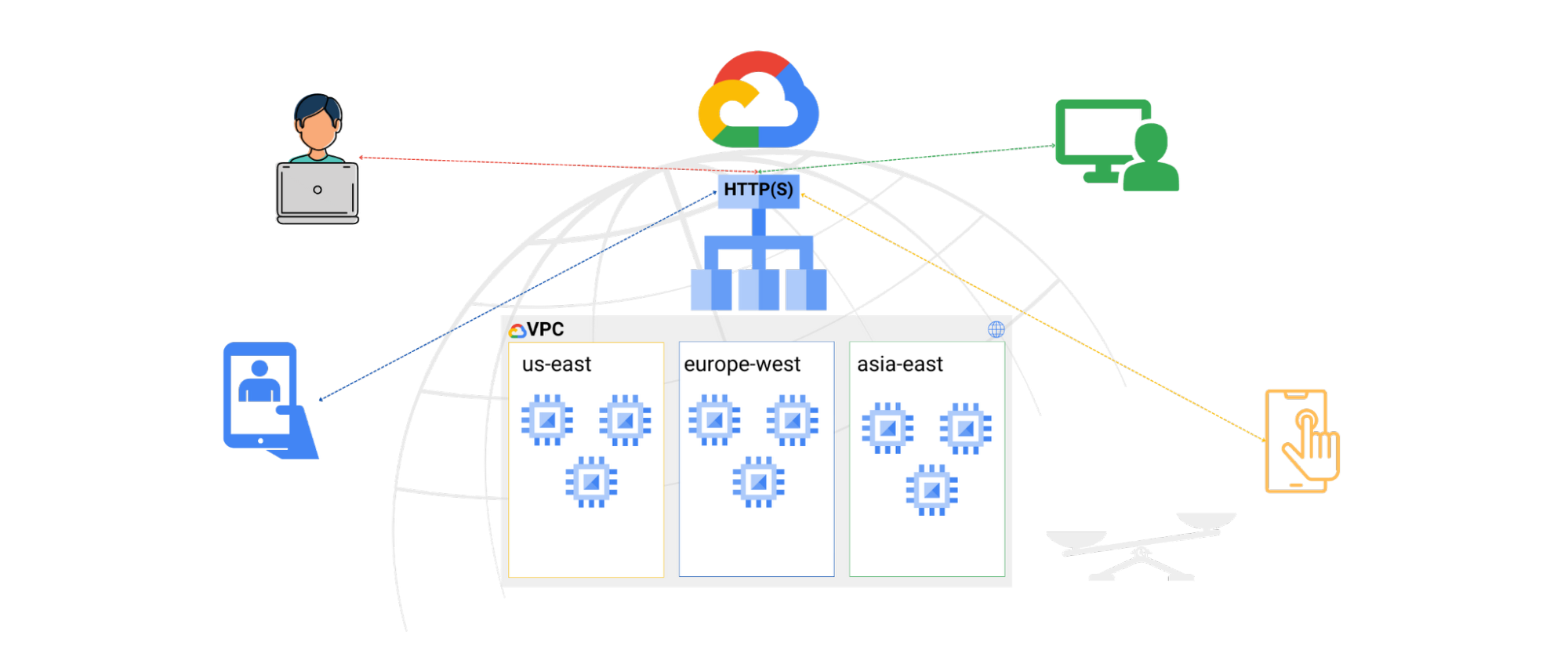

First, let’s analyze the following key drivers to consider from an architecture point of view. Figure 1 illustrates the high-level architecture of the global external HTTP(S) load balancer and the global external HTTP(S) load balancer (classic).

Note: this architecture also applies to the global external HTTP(S) load balancer (classic) when it’s deployed with the Premium network service tier; more details are covered later in this blog.

Enhanced Performance: With the Premium network service tier, traffic destined for applications behind the Google Cloud global load balancer enters Google’s reliable global network at the point closest to the client or end user, which helps reduce latency between the client and the backend servers. The load balancer also distributes load across backend instances based on predefined policies and health check metrics, directing traffic to instances that have the capacity to handle each request, which improves overall performance. Furthermore, enabling content delivery adds another performance optimization by caching static content such as images and videos at Google edge locations, so that it is served closer to the end user.

Optimized Security: The load balancer acts as the first entry point to the application or service and terminates the client connection at Google edge locations. Traffic is inspected for network-layer DDoS attacks and application-layer attacks before being forwarded to the backend, so such attacks are mitigated before they reach the backend systems. Additional security can be added by using Google Cloud Armor for application-layer security and Identity-Aware Proxy to establish a central authorization layer for applications accessed over HTTPS. These capabilities are key enablers of defense in depth for your cloud environment.

Resiliency: Autohealing relaunches instances that fail health checks, and traffic is redirected to backend instances (in the same or a different region) after a failure. Together, these typically increase the overall resiliency of the solution.

Flexibility: The load balancer supports a flexible hybrid architecture that extends cloud load balancing to backends residing on-premises or in other clouds. This is a key enabler for different hybrid strategies. Such architectures might be driven by a short-term migration from legacy (on-premises) solutions to a modern cloud-based solution, or could be a long-term architecture decision to enable certain capabilities or meet specific compliance requirements.

Operational Simplicity: Because it’s a managed service, you don’t need to build any infrastructure or scale it during peak times, which makes it a serverless capability you can use at a global level. Also, with the Google global external HTTP(S) load balancer (in Premium Tier), a single Anycast IP is used on the frontend and can be distributed globally. This eliminates the need to deploy a load balancer per region, or to use a layer of DNS and policies to redirect traffic at the global and regional levels.

The question that you might be asking yourself is: how does the Google Cloud global HTTP(S) load balancer provide such architectural benefits?

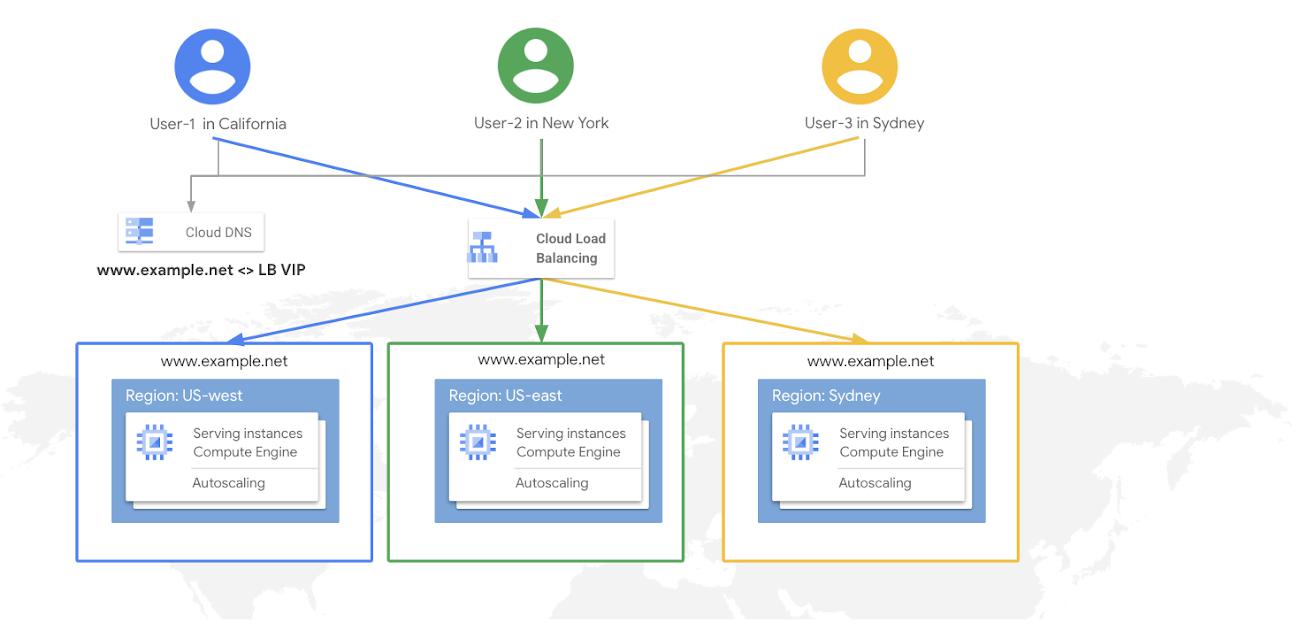

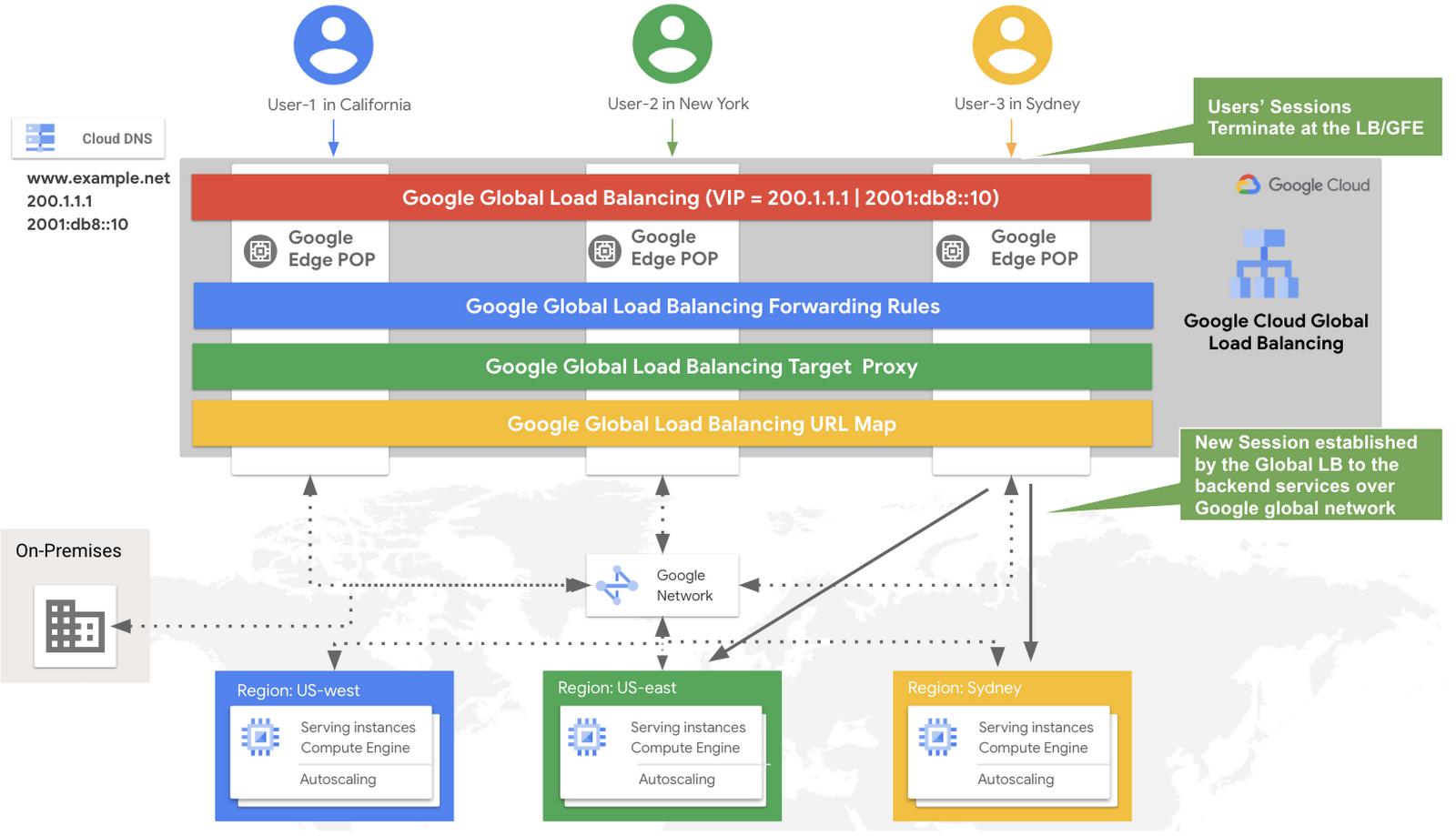

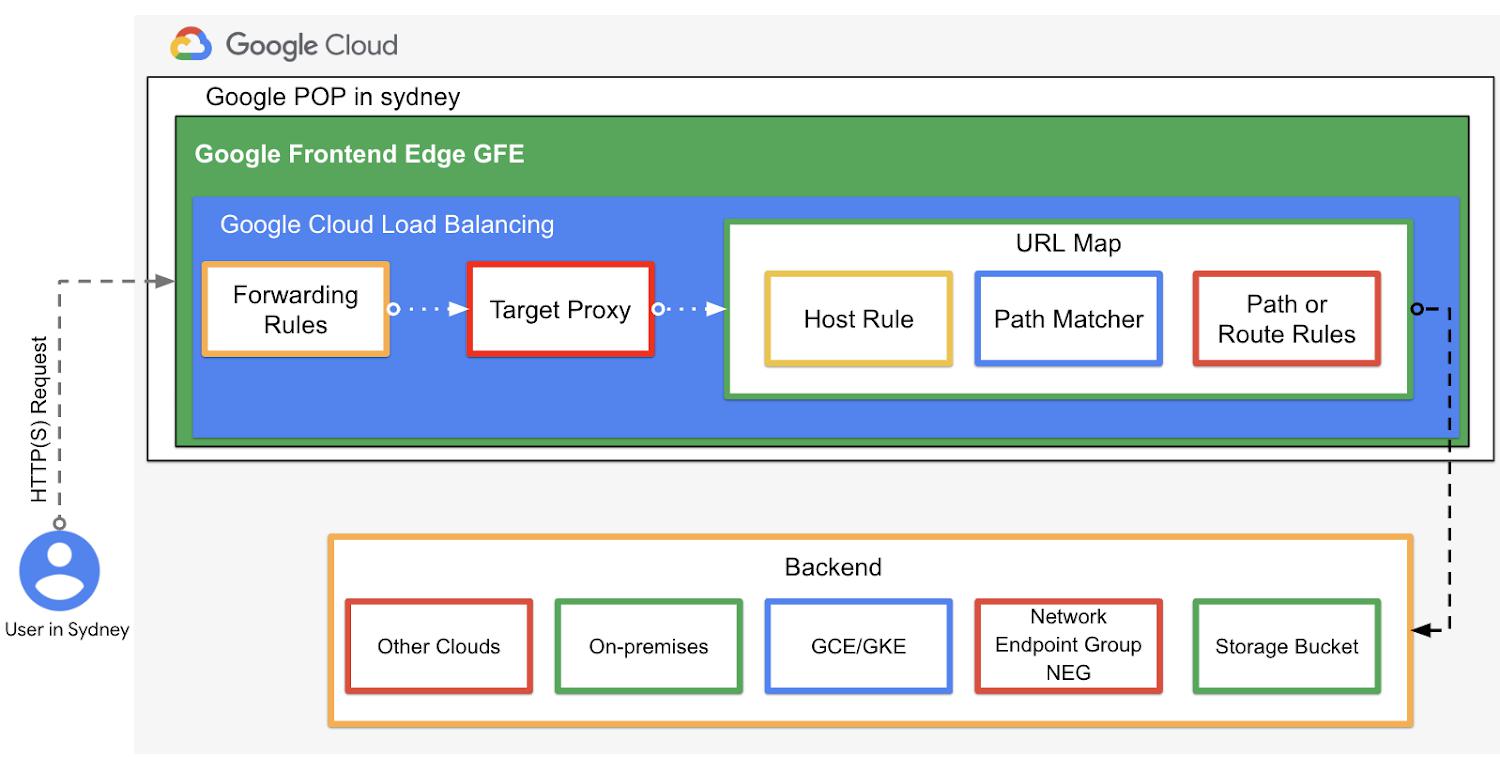

To simplify the answer, we need to analyze the architecture components of the Google Cloud global HTTP(S) load balancer, illustrated in figure 2 below. This high level architecture applies to both modes, except when standard tier is used with the Global external HTTP(S) load balancer (classic), which is discussed later in this blog.

- Software-defined load balancing: Google Cloud global load balancing is not hardware-based. Instead, it is a fully distributed, software-defined solution offered as a managed service. External load balancers reside on Google Front Ends (GFEs). GFEs are distributed globally and located in Google points of presence (PoPs). They perform global load balancing in conjunction with other systems and control planes. The GFE capabilities are key in this architecture because they ensure that all secure HTTP connections are terminated as close to the client as possible, with correct certificates and following best practices such as supporting perfect forward secrecy. The GFE also applies protections against DoS attacks at the edge layer (PoPs) of Google’s global network.

- Google global network: a highly provisioned, low-latency network. It’s the same network that powers highly scalable products like Gmail, Google Search, and YouTube. Google Cloud global load balancing is built on the same front-end serving infrastructure (GFEs). In addition, Google’s subsea cables play a key role in this global network, as they interconnect cloud infrastructure that includes more than 100 network edge locations (PoPs). The network can ingest user traffic into Google’s backbone as close as possible to the source of the request, which provides an enhanced user experience.

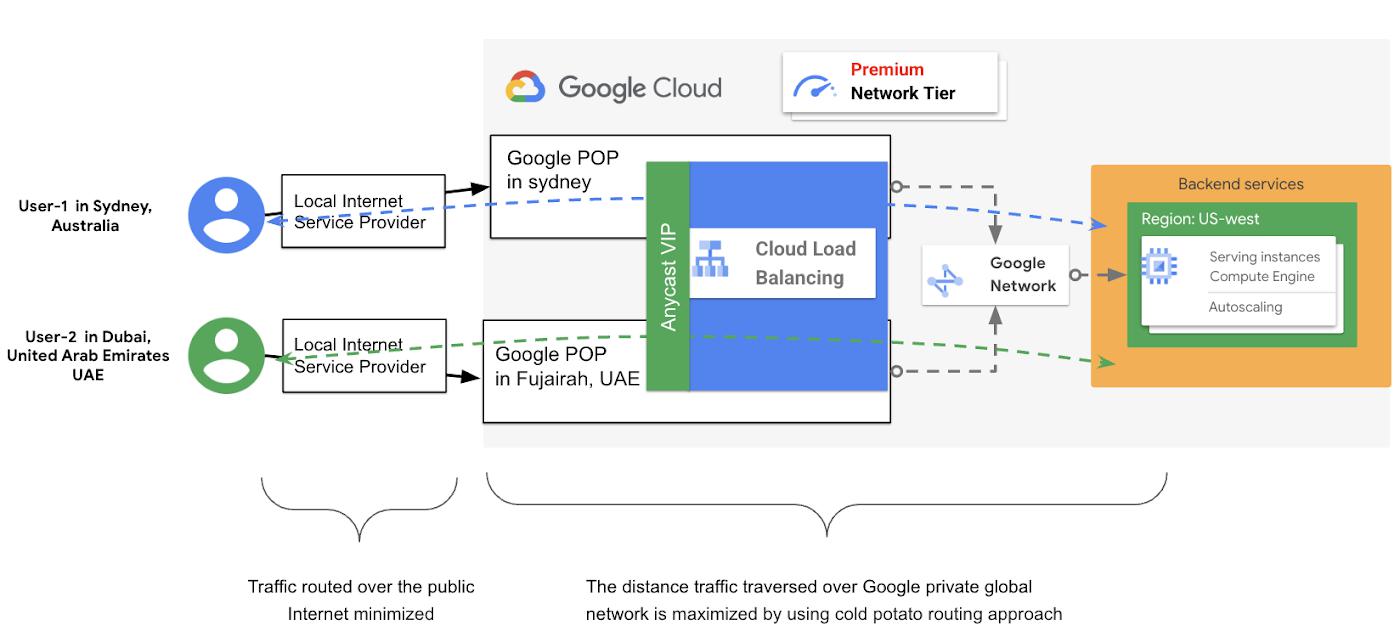

Such connectivity is referred to as the Premium Network Service Tier, which follows the ‘cold potato routing’ approach: it maximizes the distance traffic travels over Google’s fast and reliable private global network, as illustrated in figure 3. This is more efficient than routing traffic end to end over the public internet, where the local ISP typically hands traffic off to another ISP (traffic almost always crosses multiple ISPs to reach its destination). As a result, traffic that passes over multiple ISPs and network hops faces higher latency and bandwidth constraints along the path.

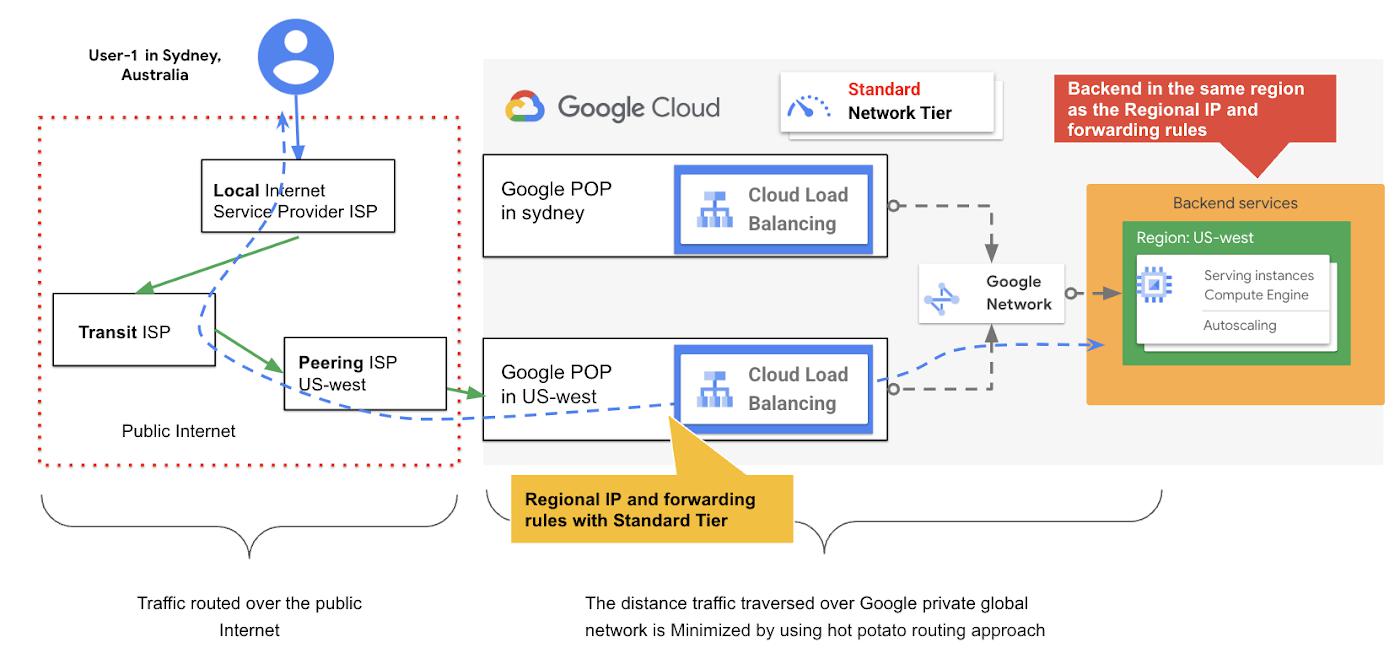

With the Google Cloud global external load balancer (classic), you can choose Premium Tier, which operates as described above with a single Anycast virtual IP (VIP), or Standard Tier, in which the load balancer operates at a regional level: there is an IP address and forwarding rule per region, and the backends must be in the same region as that regional IP and forwarding rule, as illustrated in figure 4.

In contrast to Premium Tier, Standard Tier traffic routing follows the hot potato routing approach, in which outbound traffic from backend instances exits Google’s network at the region’s internet peering point even if the destination is in another region, as illustrated in figure 4. With Standard Tier, traffic is routed over the internet, possibly across multiple ISPs, to reach a destination IP that might be in a different region. Therefore, it is priced lower than Premium Tier, and it suits use cases where latency is less of a concern or where all systems and expected users are located in the same region; for more info, refer to this blog. It is important to decide which tier to select, as the choice affects the overall architecture and its capabilities. For more info, refer to this network service tier decision tree.
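The tier trade-off described above can be sketched as a small helper. This is only an illustrative heuristic built from the criteria in this section, not the official decision tree; the function name and its three boolean parameters are hypothetical, and it applies to the classic mode, which is the one that offers a choice of tier here.

```python
def suggest_network_tier(
    needs_global_frontend: bool,
    single_region: bool,
    latency_sensitive: bool,
) -> str:
    """Suggest a network service tier (hypothetical, simplified heuristic).

    needs_global_frontend: backends in several regions behind one Anycast IP.
    single_region: all systems and expected users are in the same region.
    latency_sensitive: end-user latency is a primary concern.
    """
    # A single global Anycast IP with cross-region backends is only
    # described for Premium Tier in this article.
    if needs_global_frontend:
        return "PREMIUM"
    # With everything in one region, hot potato routing costs little,
    # so the lower-priced tier is a reasonable starting point.
    if single_region:
        return "STANDARD"
    # Otherwise the choice comes down to how much latency matters.
    return "PREMIUM" if latency_sensitive else "STANDARD"
```

A real decision should also weigh pricing and the feature differences covered in the decision tree referenced above.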

- Global (external) forwarding rules: the global forwarding rules are distributed and applied at the Google Front Ends (GFEs). They provide a single global Anycast IP, which can be an IPv4 or IPv6 address, when using the global external HTTP(S) load balancer or the global external HTTP(S) load balancer (classic) in Premium Tier. This IP is registered at the GFEs and can be used in DNS records for your site, application, or backend bucket, so you don’t need an IP and DNS record per region for globally distributed solutions. However, when using the global external HTTP(S) load balancer (classic) in Standard Tier, the forwarding rules operate at a regional level, and the backend needs to reside in the same region as the forwarding rule, as shown in figure 4.

- URL map: after an HTTP(S) request destined to a specific Anycast or regional VIP lands at the Google edge frontend, the load balancer needs to decide where to route it (to a specific backend service or a backend bucket). It makes this decision based on the rules defined in the URL map, after the request has passed through the forwarding rule and the HTTP(S) proxy. With this approach, the global HTTP(S) load balancer can use a single URL map to route requests to different destinations based on preconfigured rules. Figure 5 illustrates the components of a URL map and where it fits in the overall global external HTTP(S) load balancer architecture. The URL map is also where advanced traffic management is configured, using additional match conditions.

With this approach you will have the flexibility to design your load balancing solution to behave and distribute the traffic based on different requirements, including but not limited to:

- Proximity-based routing, in which the load balancer at the GFE level directs traffic to the instance group that is closest to the traffic source and has the capacity to handle it (cross-region load balancing, available when the global external HTTP(S) load balancer, or the classic mode in Premium Tier, is used).

- Routing traffic based on URL content, such as requests for certain parts of the application. For example, multimedia requests can be directed to instance groups with higher capacity, while traffic destined to static content can be served from Cloud CDN to enhance user experience and lower latency. The URL map does this by using the hostname and path portions of each URL it processes. It can also offer header-based and parameter-based routing, in which the load balancer makes routing decisions based on HTTP headers and URL query parameters. This helps simplify your cloud architecture, as you don’t need to deploy additional tiers of proxies for this type of routing. As a result, you can use the Google Cloud global HTTP(S) load balancer in many different use cases, especially when advanced traffic management is used, including:

- A/B testing

- Redirecting users’ traffic to different sets of services running on backends

- Providing geolocation-related or device-type-based content, by delivering different pages and experiences based on the category of device or the geolocation from which requests originate
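To make the URL map behavior more concrete, here is a minimal Python sketch of host and path matching, plus a weighted split of the kind used for A/B testing. It is a simplified model, not the load balancer’s implementation: the class names, the backend names, and the choice to hash a per-user key (so the same user stays in the same variant) are all assumptions made for illustration.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class PathMatcher:
    """Maps path prefixes to backend names, with a default (illustrative)."""
    default_backend: str
    path_rules: dict = field(default_factory=dict)  # "/video" -> backend name

    def match(self, path: str) -> str:
        best = None
        for prefix in self.path_rules:
            # Match on whole path segments: "/video" matches "/video/x",
            # but not "/videos".
            if path == prefix or path.startswith(prefix + "/"):
                if best is None or len(prefix) > len(best):
                    best = prefix  # the longest matching prefix wins
        return self.path_rules[best] if best is not None else self.default_backend


@dataclass
class UrlMap:
    """Picks a path matcher by hostname, then a backend by path."""
    default_backend: str
    host_rules: dict = field(default_factory=dict)  # hostname -> PathMatcher

    def route(self, host: str, path: str) -> str:
        matcher = self.host_rules.get(host)
        if matcher is None:
            return self.default_backend
        return matcher.match(path)


def pick_weighted(weights: dict, key: str) -> str:
    """Pick a backend in proportion to integer weights, stable per key."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("at least one weight must be positive")
    # Hash the key to a stable bucket in [0, total).
    bucket = int(hashlib.sha256(key.encode()).hexdigest(), 16) % total
    for backend, weight in weights.items():
        if bucket < weight:
            return backend
        bucket -= weight
    raise AssertionError("unreachable for non-negative weights")


# Hypothetical configuration: names are placeholders, not real resources.
url_map = UrlMap(
    default_backend="web-backend",
    host_rules={
        "example.com": PathMatcher(
            default_backend="web-backend",
            path_rules={"/video": "video-backend", "/static": "static-bucket"},
        )
    },
)
```

With this configuration, `url_map.route("example.com", "/video/clip.mp4")` resolves to `video-backend`, while an unknown host falls back to `web-backend`. A call such as `pick_weighted({"v1": 90, "v2": 10}, "user-42")` then assigns a user to one variant of a service, which is the basic idea behind weight-based splitting for A/B tests.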

- Backend services: a backend service is a logical grouping of the actual application instances. Together with its associated health checks and balancing mode, it helps determine which instances are healthy or overutilized (by CPU utilization or requests per second per instance) and when to trigger autoscaling. From a configuration point of view, the load balancer needs to be configured to route requests to your backend service. For more information, refer to the backend services overview document.

- Backends: the endpoints that receive traffic from a Google Cloud load balancer. A backend can be an instance group of virtual machines, either a managed instance group (with or without autoscaling) or an unmanaged instance group. It can also be a network endpoint group (NEG), which powers multiple use cases: containerized applications with container-native load balancing, hybrid architectures that send traffic to on-premises environments and other clouds, and serverless applications using Cloud Run, App Engine, Cloud Functions, or API Gateway.
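The way proximity, health checks, and capacity combine when a backend is chosen can be sketched as follows. This is a deliberately simplified model under stated assumptions: the `Backend` fields, the per-region latency table, and the "closest healthy backend with spare capacity" rule are illustrative, and the real service uses more elaborate logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Backend:
    name: str
    region: str
    healthy: bool       # result of the most recent health check
    current_rps: float  # requests per second currently being served
    max_rps: float      # target capacity for a rate-based balancing mode

    def has_capacity(self) -> bool:
        # Only healthy backends below their target rate can take traffic.
        return self.healthy and self.current_rps < self.max_rps


def choose_backend(backends: list, latency_ms: dict) -> Optional[Backend]:
    """Return the lowest-latency backend that is healthy and under capacity.

    latency_ms maps a region name to an estimated latency from the point
    where the request entered the network. Returns None if no backend
    can take the request.
    """
    candidates = [b for b in backends if b.has_capacity()]
    if not candidates:
        return None
    return min(candidates, key=lambda b: latency_ms[b.region])


# Hypothetical fleet: the closest region is full, another is unhealthy.
fleet = [
    Backend("ig-eu", "europe-west1", healthy=True, current_rps=100, max_rps=100),
    Backend("ig-us", "us-central1", healthy=True, current_rps=40, max_rps=100),
    Backend("ig-asia", "asia-east1", healthy=False, current_rps=0, max_rps=100),
]
latency = {"europe-west1": 10, "us-central1": 90, "asia-east1": 200}
chosen = choose_backend(fleet, latency)
```

Here the request overflows to `ig-us` because the nearest group is at capacity and the other one is failing its health checks, which mirrors the cross-region failover behavior described earlier.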

Note: where applicable, you can enable HTTP/3 on your load balancer to improve web page load times and throughput on higher-latency connections.

Summary

Google Cloud offers several load balancing options to simplify the design of different use cases. Global external HTTP(S) load balancing comes in two modes. As an architect or designer, you first need to understand the targeted solution and application requirements so that you can make the optimal design decision about which type of load balancer to select. Google Cloud load balancing supports designs and use cases that range from simple to very advanced. As a general design recommendation, always start with a simple and specific use case, and then add more capabilities by defining advanced rules and policies.

By: Marwan Al shawi (Partner Customer Engineer, Google Cloud – Dubai) and Ammett Williams (Developer Relations Engineer)

Source: Google Cloud Blog
