It is my pleasure to announce a new open source project for the Swift Server ecosystem, Swift Cluster Membership. This library aims to help Swift grow in a new space of server applications: clustered multi-node distributed systems. With this library we provide reusable runtime-agnostic membership protocol implementations which can be adopted in various clustering use-cases.

Background

Cluster membership protocols are a crucial building block for distributed systems, such as computation-intensive clusters, schedulers, databases, key-value stores and more. With the announcement of this package, we aim to make building such systems simpler, as they no longer need to rely on external services to handle service membership for them. We would also like to invite the community to collaborate on and develop additional membership protocols.

At their core, membership protocols need to provide an answer for the question “Who are my (live) peers?”. This seemingly simple task turns out to be not so simple at all in a distributed system where delayed or lost messages, network partitions, and unresponsive but still “alive” nodes are the daily bread and butter. Providing a predictable, reliable answer to this question is what cluster membership protocols do.

There are various trade-offs one can make while implementing a membership protocol, and it continues to be an interesting area of research and refinement. As such, the cluster-membership package intends to focus not on a single implementation, but to serve as a collaboration space for various distributed algorithms in this area.

Today, along with the initial release of this package, we’re open sourcing an implementation of one such membership protocol: SWIM.

SWIMming with Swift

The first membership protocol we are open sourcing is an implementation of the Scalable Weakly-consistent Infection-style process group Membership protocol (or “SWIM”), along with a few notable protocol extensions as documented in the 2018 Lifeguard: Local Health Awareness for More Accurate Failure Detection paper.

SWIM is a gossip protocol in which peers periodically exchange bits of information about their observations of other nodes’ statuses, eventually spreading the information to all other members in a cluster. This category of distributed algorithms is very resilient against arbitrary message loss, network partitions and similar issues.

At a high level, SWIM works like this:

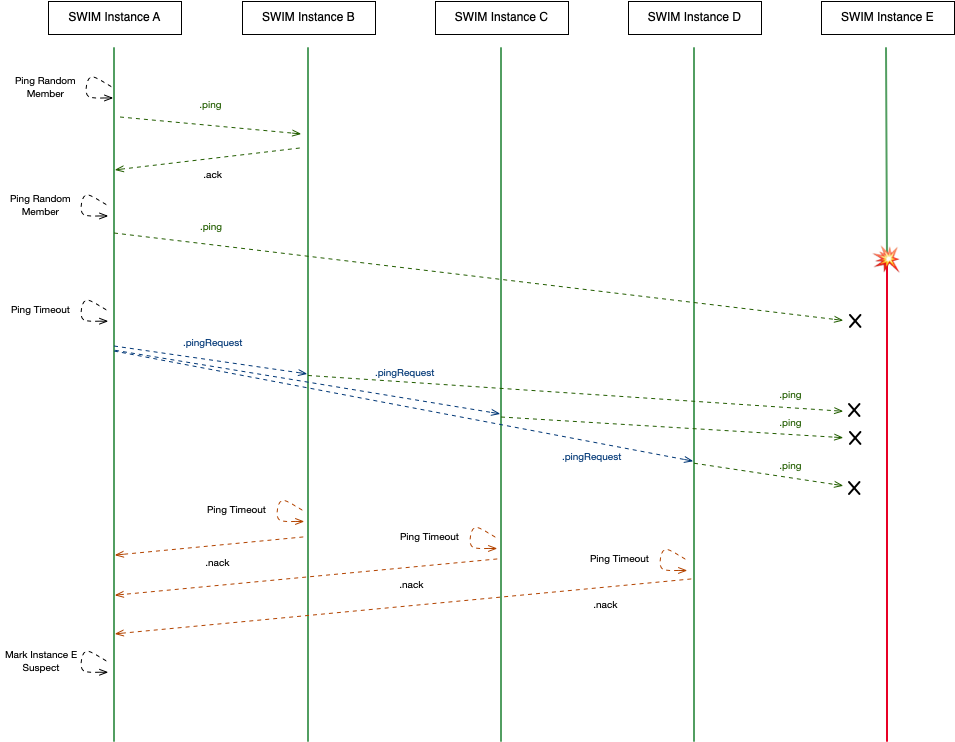

- A member periodically pings a randomly selected peer it is aware of. It does so by sending a

.pingmessage to that peer, expecting an.ackto be sent back. See howAprobesBinitially in the diagram below.- The exchanged messages also carry a gossip payload, which is (partial) information about what other peers the sender of the message is aware of, along with their membership status (

.alive,.suspect, etc.)

- The exchanged messages also carry a gossip payload, which is (partial) information about what other peers the sender of the message is aware of, along with their membership status (

- If it receives an

.ack, the peer is considered still.alive. Otherwise, the target peer might have terminated/crashed or is unresponsive for other reasons.- In order to double check if the peer really is down, the origin asks a few other peers about the state of the unresponsive peer by sending

.pingRequestmessages to a configured number of other peers, which then issue direct pings to that peer (probing peerEin the diagram below).

- In order to double check if the peer really is down, the origin asks a few other peers about the state of the unresponsive peer by sending

- If those pings fail, the origin peer would receive

.nack(“negative acknowledgement”) messages back, and due to lack of.acks resulting in the peer being marked as.suspect.
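The probe round described above can be sketched as a small in-memory simulation. This is only an illustration: `ProbeOutcome`, `ToyCluster`, and `canReach` are hypothetical names made up for this sketch, they are not part of the library, and timeouts and gossip payloads are left out entirely.

```swift
/// What the origin concludes about the target after one probe round.
enum ProbeOutcome { case alive, suspect }

/// Toy stand-in for a network (hypothetical, not the library's API).
struct ToyCluster {
    /// Returns true when a message sent from the first node reaches the
    /// second node and the reply makes it back.
    let canReach: (String, String) -> Bool

    /// One probe round as seen by `origin`.
    func probe(from origin: String, target: String, helpers: [String]) -> ProbeOutcome {
        // 1. Direct ping: an ack means the target is alive.
        if canReach(origin, target) { return .alive }

        // 2. No ack: ask reachable helpers to ping the target on our behalf.
        for helper in helpers where canReach(origin, helper) {
            // A helper that reaches the target relays an ack back to us;
            // otherwise it would answer with a nack.
            if canReach(helper, target) { return .alive }
        }

        // 3. No ack arrived by any route: the target becomes a suspect.
        return .suspect
    }
}

// Node E is down: nobody can reach it.
let cluster = ToyCluster(canReach: { _, to in to != "E" })
let statusOfB = cluster.probe(from: "A", target: "B", helpers: ["C", "D"])
let statusOfE = cluster.probe(from: "A", target: "E", helpers: ["B", "C", "D"])

// Only the direct link from A to E is broken; C can still reach E.
let partial = ToyCluster(canReach: { from, to in !(from == "A" && to == "E") })
let viaHelper = partial.probe(from: "A", target: "E", helpers: ["C"])

print(statusOfB, statusOfE, viaHelper) // alive suspect alive
```

The last case shows why the indirect probes matter: a single broken link between two nodes does not by itself make the target a suspect.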

The above mechanism serves not only as a failure detection mechanism, but also as a gossip mechanism, which carries information about known members of the cluster. This way members eventually learn about the status of their peers, even without having them all listed upfront. It is worth pointing out, however, that this membership view is weakly consistent, which means there is no guarantee (or way to know, without additional information) that all members have the exact same view of the membership at any given point in time. However, it is an excellent building block for higher-level tools and systems to build their stronger guarantees on top.

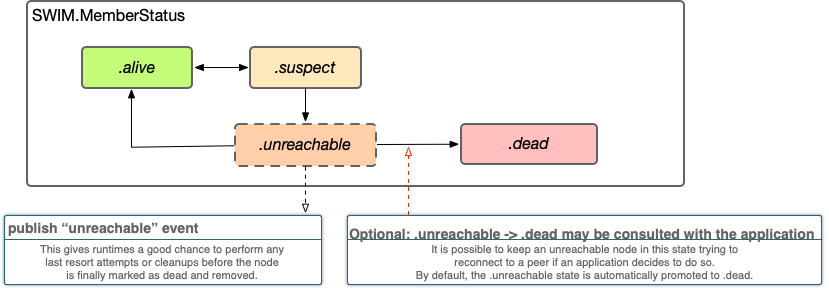

Once the failure detection mechanism detects an unresponsive node, it eventually is marked as .dead resulting in its irrevocable removal from the cluster. Our implementation offers an optional extension, adding an .unreachable state to the possible states, however most users will not find it necessary and it is disabled by default. For details and rules about legal status transitions refer to SWIM.Status or the following diagram:
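To make the idea of status precedence concrete, here is a simplified sketch of how a member might merge a gossiped status into its local view. It follows the ordering rules from the original SWIM paper, which rely on per-member incarnation numbers (a detail not covered in this post), and it leaves out the optional .unreachable state. `ToyStatus` and `merge` are hypothetical names; the authoritative rules for this library are the ones in SWIM.Status.

```swift
/// Simplified member status (hypothetical; the optional unreachable state is omitted).
enum ToyStatus: Equatable {
    case alive(incarnation: Int)
    case suspect(incarnation: Int)
    case dead
}

/// Returns the status to keep after hearing `incoming` gossip about a member
/// that we currently believe to be `current`.
func merge(current: ToyStatus, incoming: ToyStatus) -> ToyStatus {
    switch (current, incoming) {
    case (.dead, _):
        return .dead // dead is final, nothing revives the member
    case (_, .dead):
        return .dead // a death confirmation overrides alive and suspect
    case let (.alive(old), .alive(new)):
        return new > old ? incoming : current
    case let (.alive(old), .suspect(new)):
        return new >= old ? incoming : current // suspicion wins ties
    case let (.suspect(old), .alive(new)):
        return new > old ? incoming : current // only a newer incarnation refutes suspicion
    case let (.suspect(old), .suspect(new)):
        return new > old ? incoming : current
    }
}

// A suspected member refutes the suspicion by announcing a newer incarnation.
let refuted = merge(current: .suspect(incarnation: 1), incoming: .alive(incarnation: 2))
// Stale "alive" gossip from the same incarnation does not clear the suspicion.
let stale = merge(current: .suspect(incarnation: 1), incoming: .alive(incarnation: 1))
// Once dead, always dead.
let stillDead = merge(current: .dead, incoming: .alive(incarnation: 9))
```

Because every member applies the same deterministic rule, members that have received the same gossip end up with the same status for a peer, regardless of the order in which the messages arrived.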

Swift Cluster Membership implements protocols by offering “Instances” of them. For example, the SWIM implementation is encapsulated in the runtime-agnostic SWIM.Instance, which needs to be “driven” or “interpreted” by some glue code between a networking runtime and the instance itself. We call those glue pieces of an implementation “Shells”, and the library ships with a SWIMNIOShell implemented using SwiftNIO’s DatagramChannel that performs all messaging asynchronously over UDP. Alternative implementations can use completely different transports, or piggyback SWIM messages on some other existing gossip system, etc.

The SWIM instance also has built-in support for emitting metrics (using swift-metrics) and can be configured to log internal details by passing a swift-log Logger.

Example: Reusing the SWIM protocol logic implementation

The primary purpose of this library is to share the SWIM.Instance implementation across various implementations which need some form of in-process membership service. Implementing a custom runtime is documented in depth in the project’s README, so please have a look there if you are interested in implementing SWIM over some different transport.

Implementing a new transport boils down to a “fill in the blanks” exercise:

First, one has to implement the Peer protocols using one’s target transport:

```swift
public protocol SWIMPeer: SWIMAddressablePeer {
    func ping(
        payload: SWIM.GossipPayload,
        from origin: SWIMAddressablePeer,
        timeout: DispatchTimeInterval,
        sequenceNumber: SWIM.SequenceNumber,
        onComplete: @escaping (Result<SWIM.PingResponse, Error>) -> Void
    )
    // ...
}
```

This usually means wrapping some connection, channel, or other identity with the ability to send messages and invoke the appropriate callbacks when applicable.
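As a rough illustration of that wrapping, the sketch below models a peer around a fake, synchronous "connection". Everything in it (`ToyConnection`, `ToyPeer`, `ToyTimeout`, the string-based wire format) is hypothetical and much simpler than the real peer protocols, which are asynchronous and carry typed payloads and timeouts.

```swift
/// Error reported when no reply comes back (hypothetical).
struct ToyTimeout: Error {}

/// Stand-in for a transport connection: takes an outgoing message and
/// returns the raw reply, or nil if nothing came back.
typealias ToyConnection = (String) -> String?

/// A peer wraps a connection and turns replies into callback invocations.
struct ToyPeer {
    let name: String
    let connection: ToyConnection

    func ping(
        payload: String,
        sequenceNumber: Int,
        onComplete: (Result<String, Error>) -> Void
    ) {
        // Encode the ping for the (pretend) wire and send it.
        let message = "ping#\(sequenceNumber):\(payload)"
        if let reply = connection(message) {
            onComplete(.success(reply))
        } else {
            onComplete(.failure(ToyTimeout()))
        }
    }
}

let healthy = ToyPeer(name: "B", connection: { message in "ack for \(message)" })
let silent = ToyPeer(name: "E", connection: { _ in nil })

var outcomes: [String] = []
for peer in [healthy, silent] {
    peer.ping(payload: "gossip", sequenceNumber: 1) { result in
        switch result {
        case .success(let reply): outcomes.append("\(peer.name): \(reply)")
        case .failure: outcomes.append("\(peer.name): no ack")
        }
    }
}
print(outcomes) // ["B: ack for ping#1:gossip", "E: no ack"]
```

A real transport would complete the callback later, from a network event or a timer, which is why the actual protocol marks `onComplete` as `@escaping`.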

Then, on the receiving end of a peer, one has to implement receiving those messages and invoke all the corresponding on<SomeMessage> callbacks defined on the SWIM.Instance (grouped under SWIMProtocol). These calls perform all SWIM protocol-specific tasks internally and return directives: simple-to-interpret “commands” that tell an implementation how it should react to the message. For example, upon receiving a PingRequest message, the returned directive may instruct a shell to .sendPing(target:pingRequestOrigin:timeout:sequenceNumber:), which can be implemented simply by invoking this ping on the target peer.
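The split between protocol logic and glue code can be sketched with a toy instance that only returns directives and a toy shell that interprets them. The names below (`ToyDirective`, `ToyInstance`, `ToyShell`) are hypothetical and only loosely mirror the shape described above; the real directives and callbacks are defined by SWIM.Instance.

```swift
/// Plain values describing what the shell should do next (hypothetical).
enum ToyDirective: Equatable {
    case sendPing(target: String, pingRequestOrigin: String, sequenceNumber: Int)
    case sendAck(to: String, sequenceNumber: Int)
    case ignore
}

/// Runtime-agnostic logic: no sockets and no timers, only decisions.
struct ToyInstance {
    let myself: String
    var nextSequenceNumber = 1

    /// Someone pinged us: acknowledge using the same sequence number.
    func onPing(from origin: String, sequenceNumber: Int) -> ToyDirective {
        .sendAck(to: origin, sequenceNumber: sequenceNumber)
    }

    /// Someone asked us to ping `target` on their behalf.
    mutating func onPingRequest(target: String, from origin: String) -> ToyDirective {
        guard target != myself else { return .ignore }
        let sequenceNumber = nextSequenceNumber
        nextSequenceNumber += 1
        return .sendPing(target: target, pingRequestOrigin: origin, sequenceNumber: sequenceNumber)
    }
}

/// The "shell" owns the transport; here it is just a log of outgoing lines.
struct ToyShell {
    var instance: ToyInstance
    var sent: [String] = []

    mutating func interpret(_ directive: ToyDirective) {
        switch directive {
        case let .sendPing(target, origin, sequenceNumber):
            sent.append("ping #\(sequenceNumber) -> \(target) (on behalf of \(origin))")
        case let .sendAck(to, sequenceNumber):
            sent.append("ack #\(sequenceNumber) -> \(to)")
        case .ignore:
            break
        }
    }
}

var shell = ToyShell(instance: ToyInstance(myself: "C"))
let directive = shell.instance.onPingRequest(target: "E", from: "A")
shell.interpret(directive)
print(shell.sent) // ["ping #1 -> E (on behalf of A)"]
```

Because the instance never touches the network, the same logic can be unit tested by asserting on the returned directives, and reused under a different shell without modification.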

Example: SWIMming with SwiftNIO

The repository contains an end-to-end example and an example implementation called SWIMNIOExample, which makes use of the SWIM.Instance to enable a simple UDP-based peer monitoring system. This allows peers to gossip and notify each other about node failures using the SWIM protocol by sending datagrams driven by SwiftNIO.

The SWIMNIOExample implementation is offered only as an example and has not been implemented with production use in mind. With some effort, however, it could do well for some use-cases. If you are interested in learning more about cluster membership algorithms, scalability benchmarking, and SwiftNIO itself, this is a great module to get your feet wet. Once the module is mature enough, we could consider making it not only an example but also a reusable component for SwiftNIO-based clustered applications.

In its simplest form, combining the provided SWIM instance and SwiftNIO shell to build a simple server, one can embed the provided handlers in a typical SwiftNIO channel pipeline, as shown below:

```swift
import NIO
import SWIMNIOExample

// `group`, `settings`, `host`, and `port` are expected to be defined by the surrounding application.
let bootstrap = DatagramBootstrap(group: group)
    .channelInitializer { channel in
        channel.pipeline
            // first install the SWIM handler, which contains the SWIMNIOShell:
            .addHandler(SWIMNIOHandler(settings: settings)).flatMap {
                // then install some user handler, it will receive SWIM events:
                channel.pipeline.addHandler(SWIMNIOExampleHandler())
            }
    }

bootstrap.bind(host: host, port: port)
```

The example handler can then receive and handle SWIM cluster membership change events:

```swift
import Logging
import NIO
import SWIM

final class SWIMNIOExampleHandler: ChannelInboundHandler {
    public typealias InboundIn = SWIM.MemberStatusChangedEvent

    let log = Logger(label: "SWIMNIOExampleHandler")

    public func channelRead(context: ChannelHandlerContext, data: NIOAny) {
        // Events emitted by the SWIM handler arrive here as regular inbound reads.
        let change = self.unwrapInboundIn(data)

        self.log.info(
            """
            Membership status changed: [\(change.member.node)] \
            is now [\(change.status)]
            """,
            metadata: [
                "swim/member": "\(change.member.node)",
                "swim/member/status": "\(change.status)",
            ]
        )
    }
}
```
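Beyond logging, an application will often want to keep its own view of the current peers up to date from these events. The following is a minimal sketch of that idea using hypothetical stand-in types (`ToyMemberStatus`, `ToyStatusChange`, `LiveMembers`) rather than the library's event type. Treating unreachable members as not live is an application-level choice made for this example, not something the library prescribes.

```swift
/// Stand-in for the statuses discussed in this post (hypothetical type).
enum ToyMemberStatus { case alive, suspect, unreachable, dead }

/// Stand-in for a membership change event (hypothetical type).
struct ToyStatusChange {
    let node: String
    let status: ToyMemberStatus
}

/// Keeps track of which nodes the application currently treats as live.
struct LiveMembers {
    var nodes: Set<String> = []

    mutating func apply(_ change: ToyStatusChange) {
        switch change.status {
        case .alive, .suspect:
            // A suspect is still a member until it is confirmed dead.
            nodes.insert(change.node)
        case .unreachable, .dead:
            nodes.remove(change.node)
        }
    }
}

var live = LiveMembers()
live.apply(ToyStatusChange(node: "B", status: .alive))
live.apply(ToyStatusChange(node: "E", status: .alive))
live.apply(ToyStatusChange(node: "E", status: .suspect))
live.apply(ToyStatusChange(node: "E", status: .dead))
print(live.nodes.sorted()) // ["B"]
```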

What’s next?

This project is currently in a pre-release state, and we’d like to give it some time to bake before tagging a stable release. We are mostly focused on the quality and correctness of the SWIM.Instance; however, there also remain small cleanups to be done in the example SwiftNIO implementation. Once we have confirmed with a few use-cases that the SWIM implementation’s API is sound, we’d like to tag a 1.0 release.

From there onwards, we would like to continue investigating additional membership implementations as well as minimizing the overheads of the existing implementation.

Additional Resources

Additional documentation and examples can be found on GitHub.

Getting Involved

If you are interested in cluster membership protocols, please get involved! Swift Cluster Membership is a fully open-source project, developed on GitHub. Contributions from the open source community are welcome at all times. We encourage discussion on the Swift forums. For bug reports, feature requests, and pull requests, please use the GitHub repository.

We’re very excited to see what amazing things you do with this library!
