Hi everyone!

I spent my summer as a Developer Relations Engineer Intern on Google’s Android DevRel team. Joining Google, I was ecstatic about the opportunity to have an impact in the developer community. In this post, I discuss modern approaches to building Android media apps, drawing on my experience converting the Universal Android Media Player (UAMP) sample app to Compose and updating it to use modern libraries such as Media3.

Starting From Scratch

As someone completely new to Android development with a Java background, learning Kotlin was pleasantly trivial. Likewise, I was able to rely on my knowledge of declarative frameworks such as React to learn Jetpack Compose, Android’s modern UI toolkit. Here are some similarities I noticed:

- Just like React, Compose allows developers to construct UIs and simultaneously see what they are creating using Compose’s preview feature.

- The syntax of Compose is similar to React, but much cleaner given that all of the markup, such as strings for different languages, is hidden away in an app’s resource files and is referenced using the appropriate resource functions.

    // React: a button component with a click handler
    class ExampleButton extends React.Component {
      handleClick() {
        // your onclick code here
      }

      render() {
        return (
          <button onClick={() => this.handleClick()}>
            Click me
          </button>
        );
      }
    }

    // Jetpack Compose: the equivalent button
    @Composable
    fun ExampleButton() {
        Button(onClick = {
            // your onclick code here
        }) {
            // button_text would be defined in the app's res/values/strings.xml
            Text(text = stringResource(R.string.button_text))
        }
    }

Ramping Up On UAMP

My goal was to transform UAMP to incorporate Modern Android Development (MAD) principles. To get started, I needed to understand the architecture of Android media apps to become familiar with how UAMP is implemented.

Here is the overall architecture of UAMP:

MusicService:

Recently, UAMP was migrated to the new Jetpack Media3 library, which enables Android apps to create rich audio and visual experiences. Media3 consolidates legacy APIs such as Jetpack Media (a.k.a. MediaCompat), Jetpack Media2, and ExoPlayer2. It introduces a simpler architecture that is familiar to current developers and makes apps easier to build and maintain.

MusicService represents the “server” aspect or the “back-end” of UAMP.

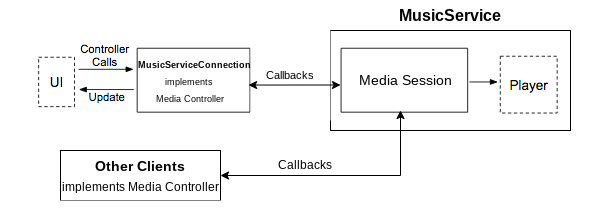

Here is a diagram that describes MusicService:

MusicService is made up of two components: a [MediaSession](https://github.com/androidx/media/blob/50475814f700d08519c88585c9583f2aba5d702e/libraries/session/src/main/java/androidx/media3/session/MediaSession.java) and the Player. A MediaSession represents an ongoing media playback session. It provides various mechanisms for controlling playback, receiving status updates, and retrieving metadata about the current media. UAMP uses ExoPlayer, Media3’s default Player implementation, for playback.

A [MediaController](https://github.com/androidx/media/blob/release/libraries/session/src/main/java/androidx/media3/session/MediaController.java) is used to communicate with the MediaSession. In UAMP, the MediaController is implemented by MusicServiceConnection; other clients may provide their own.
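To make the split between controller, session, and player concrete, here is a toy model in plain Kotlin. It is not the Media3 API: ToyPlayer, ToySession, and ToyController are hypothetical names invented for this sketch, and the real classes handle much more (threading, cross-process connections, permissions). It only illustrates that a controller never touches the player directly; it sends commands to the session, which forwards them and reports state back to every connected client.

```kotlin
// Toy model only: these classes are hypothetical stand-ins, not Media3 types.

/** Owns playback state, like the Player inside MusicService. */
class ToyPlayer {
    var isPlaying: Boolean = false
        private set
    var currentMediaId: String? = null
        private set

    fun play(mediaId: String) {
        currentMediaId = mediaId
        isPlaying = true
    }

    fun pause() {
        isPlaying = false
    }
}

/** Wraps the player and is the only entry point for clients, like a MediaSession. */
class ToySession(private val player: ToyPlayer) {
    private val listeners = mutableListOf<(Boolean, String?) -> Unit>()

    fun addListener(listener: (isPlaying: Boolean, mediaId: String?) -> Unit) {
        listeners += listener
    }

    fun handlePlay(mediaId: String) {
        player.play(mediaId)
        notifyListeners()
    }

    fun handlePause() {
        player.pause()
        notifyListeners()
    }

    // Push the player's current state to every connected client.
    private fun notifyListeners() {
        listeners.forEach { it(player.isPlaying, player.currentMediaId) }
    }
}

/** Client-side handle, like a MediaController: sends commands and mirrors state. */
class ToyController(private val session: ToySession) {
    var isPlaying: Boolean = false
        private set
    var nowPlaying: String? = null
        private set

    init {
        session.addListener { playing, mediaId ->
            isPlaying = playing
            nowPlaying = mediaId
        }
    }

    fun play(mediaId: String) = session.handlePlay(mediaId)
    fun pause() = session.handlePause()
}
```

Two ToyController instances attached to the same ToySession stay in sync, which mirrors how several clients can observe and control one playback session.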

To learn more about Media3, check out the Media3 Documentation.

UI:

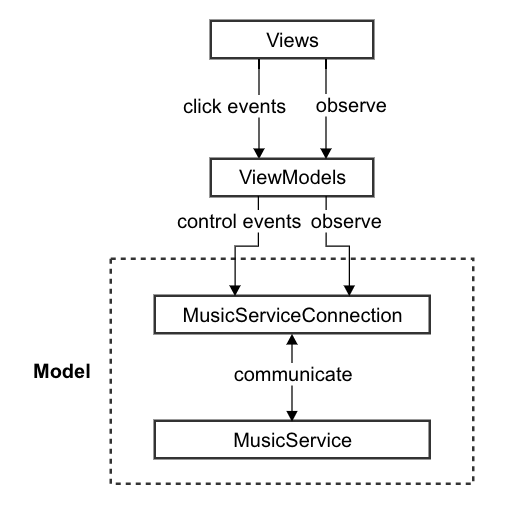

UAMP uses the Model-View-ViewModel Architecture to allow for abstraction and a division of responsibility between each layer.

My primary task in converting UAMP to Compose was to rewrite the Views as Compose screens, connecting them to their respective ViewModels.
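As a rough illustration of that division of responsibility, the sketch below models the three layers in plain Kotlin. All names here (Song, SongListViewModel, SongListView) are hypothetical, and the standard library's Delegates.observable stands in for the LiveData that UAMP's real ViewModels expose.

```kotlin
import kotlin.properties.Delegates

// Hypothetical names for illustration; UAMP's real ViewModels expose LiveData.

/** Model: plain data describing one song. */
data class Song(val id: String, val title: String)

/** ViewModel: holds UI state and notifies whoever is rendering it. */
class SongListViewModel {
    private var onChange: (List<Song>) -> Unit = {}

    // Every assignment to songs triggers the registered observer.
    var songs: List<Song> by Delegates.observable(emptyList<Song>()) { _, _, newValue ->
        onChange(newValue)
    }
        private set

    fun observe(observer: (List<Song>) -> Unit) {
        onChange = observer
        observer(songs) // deliver the current value immediately
    }

    fun load(catalog: List<Song>) {
        songs = catalog
    }
}

/** View: turns state into something displayable and holds no business logic. */
class SongListView(viewModel: SongListViewModel) {
    var rendered: String = ""
        private set

    init {
        viewModel.observe { songs ->
            rendered = if (songs.isEmpty()) "Loading..." else songs.joinToString { it.title }
        }
    }
}
```

Because the view only reacts to state, swapping it for a different renderer (in UAMP's case, a Compose screen instead of an XML layout) leaves the ViewModel untouched.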

UAMP has three main UI classes — one Activity and two Fragments:

- [MainActivity](https://github.com/android/uamp/blob/2cb3b89c62b9b3b882468ef903d101280d06f5aa/app/src/main/java/com/example/android/uamp/MainActivity.kt) is responsible for swapping between the two fragments.

- [MediaItemFragment](https://github.com/android/uamp/blob/2cb3b89c62b9b3b882468ef903d101280d06f5aa/app/src/main/java/com/example/android/uamp/fragments/MediaItemFragment.kt) is responsible for browsing the music catalog. It displays a list of media items, which can be either albums or songs. Tapping an album will display a new MediaItemFragment containing the songs within that album. Tapping a song will start playing that song and display the NowPlayingFragment.

- [NowPlayingFragment](https://github.com/android/uamp/blob/2cb3b89c62b9b3b882468ef903d101280d06f5aa/app/src/main/java/com/example/android/uamp/fragments/NowPlayingFragment.kt) displays the song that is currently playing.
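The browsing behaviour described above boils down to one decision per tap. A minimal sketch of that decision, using hypothetical types that are not taken from UAMP's code:

```kotlin
// Hypothetical model of the browse flow; names are not taken from UAMP's code.

sealed interface BrowseItem {
    val id: String
}

data class Album(override val id: String, val songIds: List<String>) : BrowseItem
data class Track(override val id: String) : BrowseItem

sealed interface Destination
data class BrowseList(val parentId: String) : Destination
data class NowPlaying(val mediaId: String) : Destination

/** Decides which screen a tap on a media item leads to. */
fun destinationFor(item: BrowseItem): Destination = when (item) {
    is Album -> BrowseList(parentId = item.id) // show the songs within the album
    is Track -> NowPlaying(mediaId = item.id)  // start playback and show the player
}
```

Modelling the two outcomes as a sealed type means the compiler flags any item kind that has no destination yet.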

The two fragments will be converted to Compose while keeping MainActivity more or less untouched for purposes of the conversion.

Composing a new UI

UAMP uses both view binding and data binding to interact with Views.

View binding allows you to more easily write code that interacts with views, and is not needed in Compose. Data binding allows you to bind UI components in your layouts to data sources in your app using a declarative (rather than programmatic) format.

Here are three approaches to converting an app that uses view binding to Compose:

- Delete the adapters (e.g. MediaItemAdapter in UAMP) used to set up the binding and use those values to populate Composables instead. This approach is efficient in that you don’t have to change the underlying framework set up by the respective Fragment and the code can be redirected to use Composables.

- If your app uses Fragments, deleting the Fragments and creating the screens from scratch is another approach that would work, although the approach may be a bit more cumbersome given that the Fragment implementation may contain some logic which will need to be reimplemented in Composable functions.

- To keep the view bindings, you can implement only some parts of the UI in Compose. Views would continue to be accessed through their respective View functions, such as binding.view_id_name.setText(), while the Composables can be accessed through binding.composeview_id_name.apply { /* All the Compose code here */ }.

I chose to delete the MediaItemAdapter and keep the fragments so that I could re-use the business logic already implemented in UAMP. This was possible because reactive frameworks such as Compose eliminate the need to bind views.

For data binding, I used the observeAsState function to bind composables to LiveData. Whenever there is a change in the associated LiveData, the Compose screen is recomposed and the changes are propagated.

    @Composable
    private fun NowPlaying(...) {
        // 0L so that the initial value matches the declared Long type
        val position: Long by nowPlayingFragmentViewModel.mediaPositionSeconds.observeAsState(0L)
        ...
        // Updates posted to mediaPositionSeconds will result in the screen being recomposed
        Text(text = position.toString(), …)
        ...
    }

With this done, I started converting components of the UI to Compose. For example, I changed the RecyclerView to a LazyColumn.

    <!-- Before: the RecyclerView declared in the fragment's XML layout -->
    <androidx.recyclerview.widget.RecyclerView
        android:id="@+id/list"
        android:name="com.example.android.uamp.MediaItemFragment"
        style="@style/MediaItemList"
        android:layout_width="match_parent"
        android:layout_height="match_parent"
        android:orientation="vertical"
        app:layoutManager="androidx.recyclerview.widget.LinearLayoutManager"
        tools:context="com.example.android.uamp.fragments.MediaItemFragment"
        tools:listitem="@layout/fragment_mediaitem" />

    // Before: the fragment observes the list and feeds it to the adapter
    class MediaItemFragment : Fragment() {
        override fun onActivityCreated(savedInstanceState: Bundle?) {
            ...
            mediaItemFragmentViewModel.mediaItems.observe(viewLifecycleOwner,
                Observer { list ->
                    binding.loadingSpinner.visibility =
                        if (list?.isNotEmpty() == true) View.GONE else View.VISIBLE
                    listAdapter.submitList(list)
                })
            ...
            binding.list.adapter = listAdapter
        }
    }

    // After: the same list as a LazyColumn
    LazyColumn(modifier = Modifier.fillMaxSize()) {
        for (mediaItem in mediaItems) {
            item { MediaItem(mediaItem, mainActivityViewModel) }
        }
    }

The last step was to return a ComposeView from each fragment’s onCreateView function (for example, MediaItemFragment’s onCreateView) to display the relevant screen. The screens contain the Composable functions that define the UI, along with handlers that connect the screens to the business logic in the view models.

    Icon(
        painter = if (isPlaying) pause else play,
        contentDescription = contentDesc,
        // Define an onClick handler that calls playMedia to play/pause the now-playing media item
        modifier = Modifier
            .clickable { mainActivityViewModel.playMedia(mediaItem) }
            .width(buttonWidth),
        tint = MaterialTheme.colors.buttonColor
    )

I also created a Compose screen, [NowPlayingScreen](https://github.com/android/uamp/blob/2cb3b89c62b9b3b882468ef903d101280d06f5aa/app/src/main/java/com/example/android/uamp/fragments/NowPlayingScreen.kt), which uses Composables that individually display relevant metadata about the currently playing item, such as album art, title, and duration.

Surrounded By New Features:

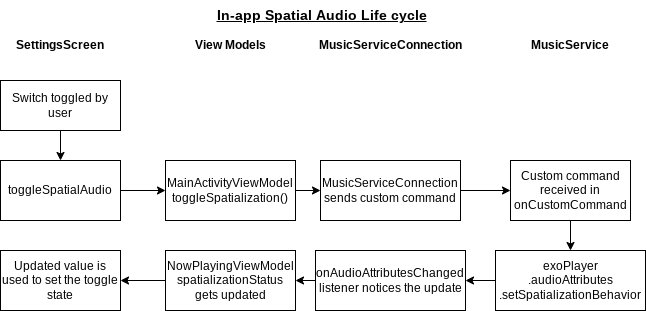

Media3 makes it easy to adopt new features like spatial audio, which is a feature that makes your app play sound as though it is coming from virtual speakers placed around the listener for a more realistic experience. With the recent release of Media3 1.0.0-beta02, ExoPlayer is updated to configure the platform for multi-channel spatial audio.

To incorporate spatial audio I created a settings screen, which would allow the user to toggle in-app spatial audio and retrieve information from the Spatializer API for the currently playing media item. Here is how the in-app toggle control was implemented:

The following five code snippets describe the process.

    // Settings screen: a Switch bound to the spatialization status
    @Composable
    fun SettingsScreenDescription(
        nowPlayingFragmentViewModel: NowPlayingFragmentViewModel,
        mainActivityViewModel: MainActivityViewModel,
        navController: NavController
    ) {
        val spatializationStatus: Boolean by nowPlayingFragmentViewModel.spatializationStatus
            .observeAsState(true)

        Column(...) {
            // composable
            Column(...) {
                Row(...) {
                    // composable
                    Switch(
                        checked = spatializationStatus,
                        onCheckedChange = {
                            toggleSpatialAudio(mainActivityViewModel, it)
                        },
                        // ...
                    )
                }
            }
        }
    }

    fun toggleSpatialAudio(mainActivityViewModel: MainActivityViewModel, enable: Boolean) {
        mainActivityViewModel.viewModelScope.launch {
            mainActivityViewModel.toggleSpatialization(enable)
        }
    }

    // MainActivityViewModel: forward the toggle to the service as a custom command
    suspend fun toggleSpatialization(enable: Boolean) {
        val bundle = Bundle().apply {
            putBoolean(EXTRAS_TOGGLE_SPATIALIZATION, enable)
        }
        musicServiceConnection.sendCommand(ACTION_TOGGLE_SPATIALIZATION, bundle)
    }

    // MusicService session callback: apply the requested behavior to ExoPlayer
    override fun onCustomCommand(
        session: MediaSession,
        controller: MediaSession.ControllerInfo,
        customCommand: SessionCommand,
        args: Bundle
    ): ListenableFuture<SessionResult> {
        // Toggle spatial audio
        if (customCommand.customAction == ACTION_TOGGLE_SPATIALIZATION) {
            val enable = customCommand.customExtras.getBoolean(EXTRAS_TOGGLE_SPATIALIZATION)
            uAmpAudioAttributesBuilder.setSpatializationBehavior(
                if (enable) {
                    C.SPATIALIZATION_BEHAVIOR_AUTO
                } else {
                    C.SPATIALIZATION_BEHAVIOR_NEVER
                }
            )
            exoPlayer.setAudioAttributes(uAmpAudioAttributesBuilder.build(), true)
            return Futures.immediateFuture(SessionResult(SessionResult.RESULT_SUCCESS))
        }
        ...
    }

The spatializationBehavior audio attribute is set on the ExoPlayer instance.

    // MusicServiceConnection: mirror the player's audio attributes as an on/off status
    val isAppSpatializationEnabled = MutableLiveData<Boolean>(true)

    private fun updateAppSpatializationStatus(audioAttributes: AudioAttributes) {
        isAppSpatializationEnabled
            .postValue(
                audioAttributes.spatializationBehavior
                    == C.SPATIALIZATION_BEHAVIOR_AUTO
            )
    }

    private inner class PlayerListener : Player.Listener {
        override fun onAudioAttributesChanged(audioAttributes: AudioAttributes) {
            super.onAudioAttributesChanged(audioAttributes)
            updateAppSpatializationStatus(audioAttributes)
        }
    }

    // NowPlayingFragmentViewModel: expose the status that the settings Switch observes
    // Boolean value to indicate the current status of spatialization
    val spatializationStatus = MutableLiveData<Boolean>(true)

    private val isAppSpatializationEnabledObserver = Observer<Boolean> {
        updateState(
            musicServiceConnection.playbackState.value!!,
            musicServiceConnection.nowPlaying.value!!, it
        )
    }

    private val musicServiceConnection = musicServiceConnection.also {
        ...
        it.isAppSpatializationEnabled.observeForever(isAppSpatializationEnabledObserver)
        ...
    }

    private fun updateState(
        playbackState: PlaybackState,
        mediaItem: MediaItem,
        isAppSpatializationEnabled: Boolean
    ) {
        ...
        spatializationStatus.postValue(isAppSpatializationEnabled)
    }
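Taken together, the snippets form a round trip: the switch sends a command, the service applies it to the player, and the resulting attribute change flows back to the state the switch reads. The sketch below models that loop in plain Kotlin so it can be followed without Android dependencies. Every name in it is a hypothetical stand-in: a Map replaces the Bundle, a Boolean return replaces the SessionResult, and a plain callback replaces Player.Listener and LiveData.

```kotlin
// Plain-Kotlin model of the toggle round trip. All names are hypothetical stand-ins;
// the real flow uses Media3's SessionCommand, AudioAttributes, and LiveData.

enum class SpatializationBehavior { AUTO, NEVER }

object ToggleCommand {
    const val ACTION = "toggle_spatialization"
    const val EXTRA_ENABLE = "enable"
}

/** Service side: applies the command to the player and reports the new attribute. */
class FakeMusicService {
    var behavior = SpatializationBehavior.AUTO
        private set
    var onAttributesChanged: (SpatializationBehavior) -> Unit = {}

    /** Returns true if the command was recognised, standing in for a success result. */
    fun onCustomCommand(action: String, args: Map<String, Boolean>): Boolean {
        if (action != ToggleCommand.ACTION) return false
        val enable = args[ToggleCommand.EXTRA_ENABLE] ?: return false
        behavior = if (enable) SpatializationBehavior.AUTO else SpatializationBehavior.NEVER
        onAttributesChanged(behavior)
        return true
    }
}

/** Connection side: sends commands and exposes the derived on/off status. */
class FakeServiceConnection(private val service: FakeMusicService) {
    var isAppSpatializationEnabled = true
        private set

    init {
        service.onAttributesChanged = { newBehavior ->
            isAppSpatializationEnabled = newBehavior == SpatializationBehavior.AUTO
        }
    }

    fun sendCommand(action: String, args: Map<String, Boolean>): Boolean =
        service.onCustomCommand(action, args)
}

/** ViewModel side: what the settings switch reads and writes. */
class FakeSettingsViewModel(private val connection: FakeServiceConnection) {
    val spatializationStatus: Boolean
        get() = connection.isAppSpatializationEnabled

    fun toggleSpatialization(enable: Boolean): Boolean =
        connection.sendCommand(ToggleCommand.ACTION, mapOf(ToggleCommand.EXTRA_ENABLE to enable))
}
```

Note that the switch never flips its own state: spatializationStatus only changes once the service has applied the command and reported back, so the UI always reflects what the player is actually doing.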

Reflecting on Compose and Media3:

Compose was a great way for me to get into Android development because I was able to rely on my experience with other declarative frameworks and simplify the code I wrote. Because Compose interoperates with XML, converting UAMP fragments to Compose screens was seamless: I could take advantage of the underlying business logic already being used for Views. Compose also allowed me to “see what I coded” by using the @Preview annotation to visualize changes in composables and attributes in a real-time UI preview. Since Compose took care of state management such as view bindings, I was able to focus solely on making UAMP’s UI beautiful and interactive.

New libraries are actively being introduced to Compose to improve current functionality and add new features. To get involved, you can join the Compose community and share your feedback. With a thriving developer community and frequent updates, it’s easy to find solutions to problems you may encounter. The community has already made high-quality libraries to address popular use cases, such as dynamic image fetching. I’d highly encourage any developer, new or old, to at least try out Compose, whether you are creating a new app, adding new screens to an existing app, or migrating an app to Compose.

Coming back to Media3: by acting as a bridge between several media libraries, it offers a simplicity and compatibility that speak to its efficiency and effectiveness. The Media3 library makes it simpler for developers to incorporate modern features such as spatial audio into their apps, all while requiring less code and having a much simpler architecture. From a developer standpoint, Media3 is the one-stop shop for all the media functionality you may need.

My Growth:

In working on this project, I learned a great deal, not just technically but also psychologically as a developer. Right off the bat, learning about Kotlin and the Jetpack libraries allowed me to expand my knowledge and skill set. Delving a bit deeper into my role as a Developer Relations Engineer Intern, I saw the value in Roman Nurik’s “Walk then Talk” philosophy on DevRel, which means not only thinking critically about best practices, but also putting myself in the shoes of other developers, whose multitude of use cases these libraries aim to satisfy. Hopefully I have convinced you of the benefits of adopting modern Android development techniques for your media app.

Modern libraries, such as Compose and Media3, are regularly being released and updated, making it easier for developers to take advantage of new tools and features. While MAD raises the standard, it also reduces the learning curve for new developers to get started in Android development given its simplicity.

Further Reading:

To learn more about UAMP:

https://github.com/android/uamp

To learn more about Compose:

https://developer.android.com/jetpack/compose/

To learn more about Media3:

https://github.com/androidx/media

To learn how to migrate an app to Compose:

https://developer.android.com/codelabs/jetpack-compose-migration#0

By Avish Parmar, Android DevRel Engineer intern

Source Medium
