- Execution time: 10x. On average, queries that use the BigQuery SEARCH function backed by a search index are 10 times faster than the alternative queries for the common search use cases.

- Processed bytes: 2682x. On average, queries that use the BigQuery SEARCH function backed by a search index process 2682 times fewer bytes than the alternative queries for the common search use cases.

- Slot usage (BigQuery compute units): 1271x. On average, queries that use the BigQuery SEARCH function backed by a search index use 1271 times less slot time than the alternative queries for the common search use cases.

Let’s put these numbers into perspective by discussing the common ways search indexes are used in BigQuery. Please note that all improvement numbers provided were derived from a Google engineering team analysis of common use cases and queries on a Google internal test project’s log data. The results may not map directly to customer queries and we would encourage you to test this on your own data set.


Rare term search for analytics on logs

Log analytics is a key industry use case enabled by Google’s Data Cloud. In a recent Google Cloud Next ’22 talk on Operational Data Lakes, The Home Depot discussed how they sunset their existing enterprise log analytics solution and moved 1,400+ active users to BigQuery and Looker to reduce costs and improve log retention. Goldman Sachs used BigQuery to solve their multi-cloud and scaling problems for logging data. They moved from existing logging solutions to BigQuery to improve long-term retention, detect PII in their logs with Google DLP, and implement new cost controls and allocations.

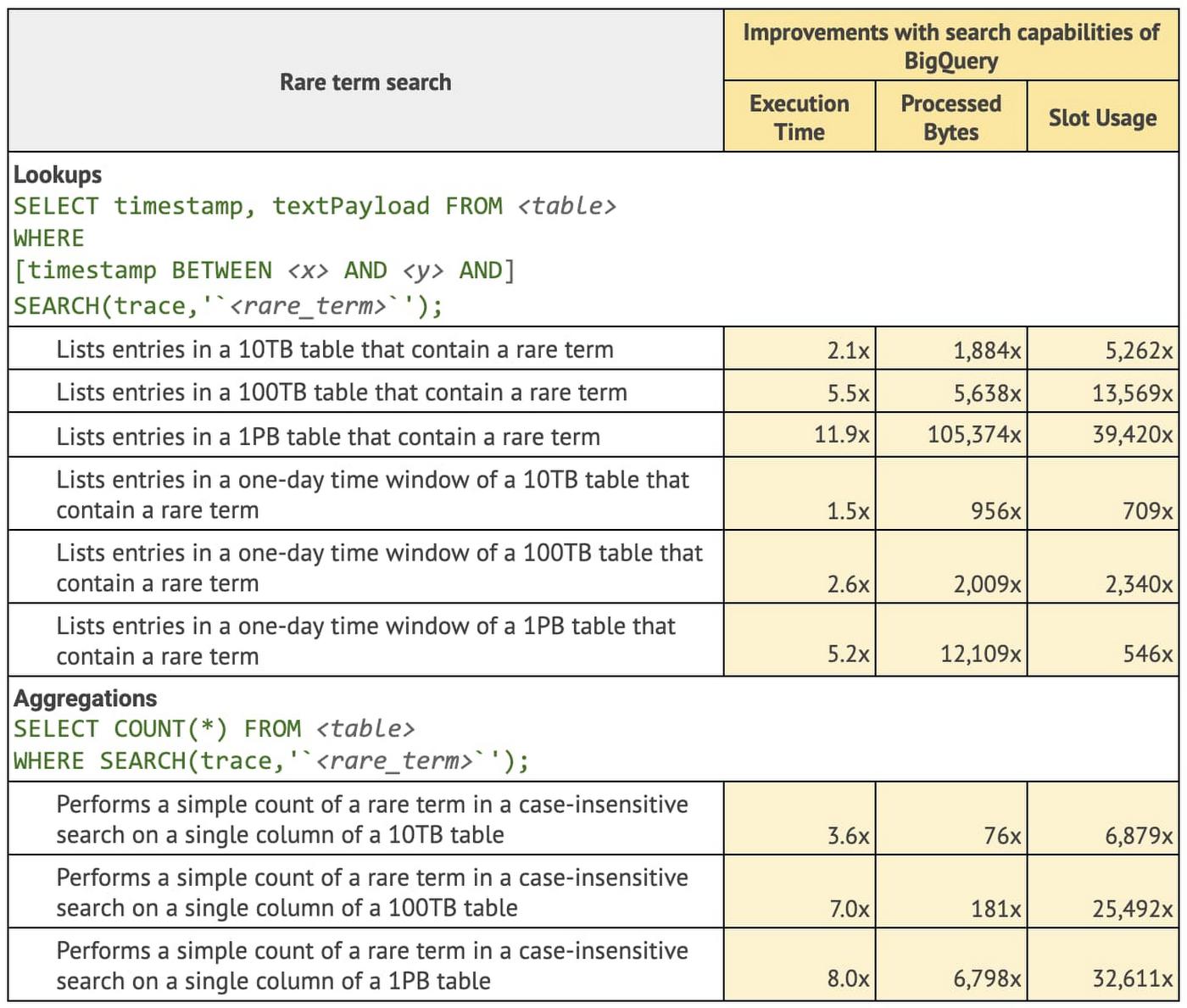

A very common query pattern in analytics on logs is rare-term search, or colloquially, “finding a needle in the haystack.” That means quickly searching through millions or billions of rows to find an exact match for a specific network ID, error code, or user name in order to troubleshoot an issue or perform a security audit. This is also a quintessential use case for search indexes in a data warehouse. A search index on a table of text data lets the BigQuery optimizer avoid large scanning operations and pinpoint exactly the data required to answer the query.
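To make the rare-term pattern concrete, here is a minimal sketch that composes the two statements involved: the DDL that creates a search index over all columns, and a query that uses the SEARCH function to look for one exact term. The table name `my_dataset.logs`, the index name `logs_index`, the error code, and the helper function names are all hypothetical and do not come from the engineering team’s analysis. The sketch also assumes the term itself contains no backtick characters.

```python
def sql_string_literal(value: str) -> str:
    """Quote a Python string as a single-quoted GoogleSQL string literal."""
    escaped = value.replace("\\", "\\\\").replace("'", "\\'")
    return f"'{escaped}'"


def create_search_index_sql(table: str, index_name: str) -> str:
    """Build DDL that creates a search index over all columns of the table."""
    return f"CREATE SEARCH INDEX {index_name} ON `{table}` (ALL COLUMNS)"


def rare_term_query_sql(table: str, term: str) -> str:
    """Build a query that returns rows containing the term in any column."""
    # Backticks inside the search query ask the default analyzer to match
    # the enclosed text exactly instead of splitting it into smaller tokens.
    search_query = sql_string_literal(f"`{term}`")
    return f"SELECT * FROM `{table}` AS t WHERE SEARCH(t, {search_query})"


if __name__ == "__main__":
    # Hypothetical names, for illustration only.
    print(create_search_index_sql("my_dataset.logs", "logs_index"))
    print(rare_term_query_sql("my_dataset.logs", "ERR-4031"))
```

The helpers only build SQL text; you would still submit the statements through the BigQuery console or a client library of your choice.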

Let’s review what the Google engineering team found when they reviewed queries that looked for rare terms with and without a search index.

IP address search in Cloud Logging

The Home Depot and Goldman Sachs used BigQuery’s basic building blocks to develop their own customized log analytics applications. However, other customers may choose to use log analytics on Google’s Data Cloud as a pre-built integration within Cloud Logging.

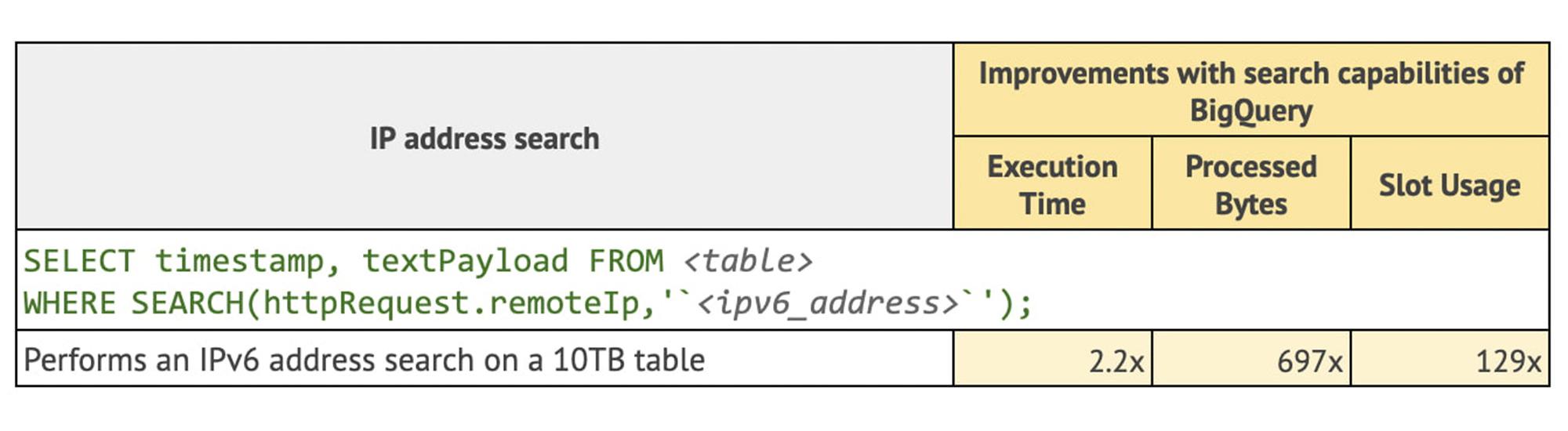

Log Analytics, powered by BigQuery (Preview), gives customers a managed log-analytics-as-a-service solution with a specialized interface for log analysis. It builds on BigQuery’s SEARCH function, which provides specialized ways to look up common logging data elements such as IP addresses, URLs, and e-mail addresses. Let’s take a look at what the Google engineering team found when looking up IP addresses using the SEARCH function.
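As an illustration of an IP address lookup, the sketch below validates an address with Python’s standard `ipaddress` module and then composes a SEARCH query that matches it exactly. The table `my_dataset.logs`, the column `payload`, and the function name `ip_lookup_sql` are hypothetical. Restricting the sketch to IPv4 is a simplification made here, not a limitation described in the article.

```python
import ipaddress


def ip_lookup_sql(table: str, column: str, ip: str) -> str:
    """Build a query that finds rows whose column contains the given IPv4 address."""
    # IPv4Address raises ValueError for malformed input, so only digits and
    # dots can end up inside the SQL text.
    address = ipaddress.IPv4Address(ip)
    # Backticks request an exact match of the whole address rather than a
    # match on its individual parts.
    return f"SELECT * FROM `{table}` WHERE SEARCH({column}, '`{address}`')"


if __name__ == "__main__":
    # Hypothetical table and column names, for illustration only.
    print(ip_lookup_sql("my_dataset.logs", "payload", "192.0.2.10"))
```

Validating before composing the query keeps a typo such as `999.1.1.1` from silently turning into a search that can never match.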

Common term search on recent data for security operations

Exabeam, an industry leader in security analytics and SIEM MQ, leverages BigQuery search functions and search indexes in their latest Security Operation Platform built on Google’s Data Cloud to search multi-year data in seconds (learn more in the data journey interview).

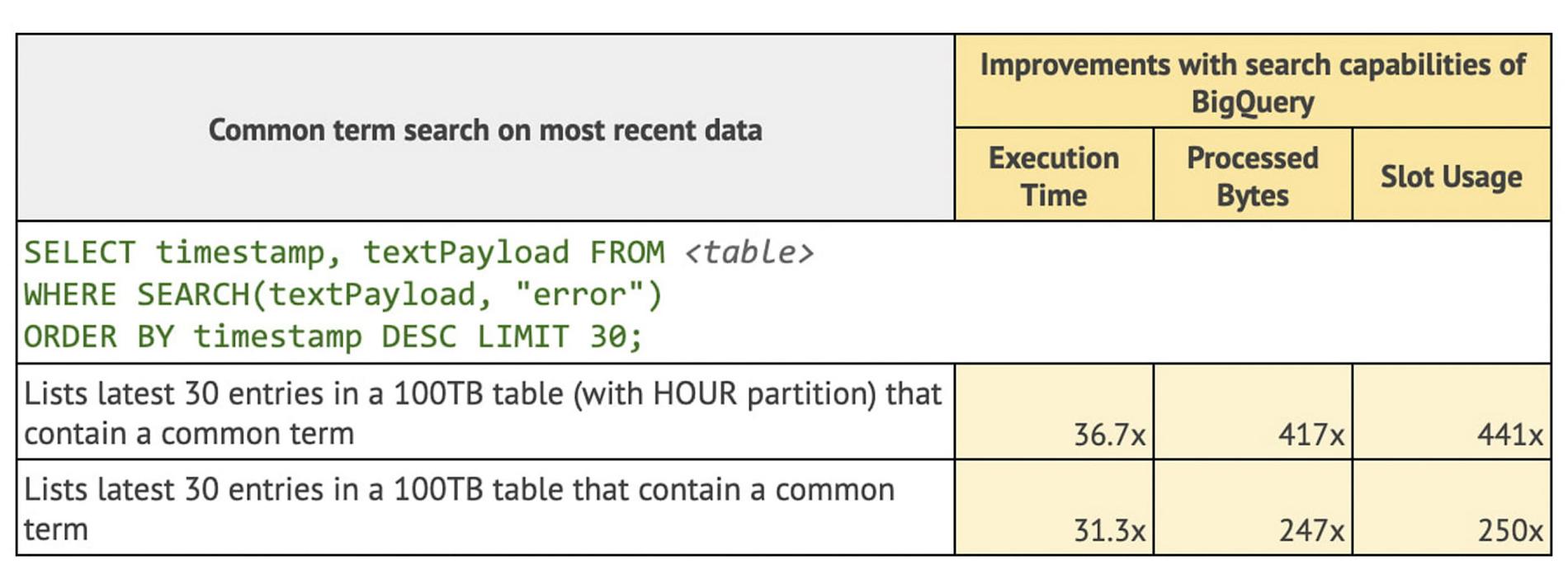

Many security use cases can take advantage of a search optimization for queries on recent data, which lets you look up even common terms efficiently by combining SEARCH with ORDER BY and LIMIT clauses. Let’s take a look at what the Google engineers found for queries on recent data that use ORDER BY and LIMIT clauses.
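The query shape described above can be sketched as follows: a SEARCH predicate for a common term, ordered by a timestamp column so the newest rows come first, and capped with LIMIT. The table `my_dataset.logs`, the column `event_time`, and the function name are hypothetical. Whether the optimization applies to a given table is likely to depend on how the table is laid out (for example, its partitioning); that is an assumption and is not detailed in the article.

```python
def recent_matches_sql(table: str, term: str, timestamp_column: str, limit: int) -> str:
    """Build a query for the newest rows that contain a common term."""
    # Keep the sketch simple: accept only alphanumeric terms so that no
    # quoting or escaping is needed inside the SQL string literal.
    if not term.isalnum():
        raise ValueError("this sketch only accepts alphanumeric search terms")
    if limit <= 0:
        raise ValueError("limit must be positive")
    return (
        f"SELECT * FROM `{table}` AS t "
        f"WHERE SEARCH(t, '{term}') "
        f"ORDER BY {timestamp_column} DESC "
        f"LIMIT {limit}"
    )


if __name__ == "__main__":
    # Hypothetical names, for illustration only.
    print(recent_matches_sql("my_dataset.logs", "login", "event_time", 20))
```

A small LIMIT combined with a descending sort on time is what lets a query for a frequently occurring term stop early instead of collecting every match.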

Search in JSON objects for Elasticsearch compatibility

Google technical partner Mach5 Software offers its customers an Elasticsearch and OpenSearch-compatible platform powered by BigQuery’s search optimizations and JSON functionality. Using Mach5, customers can migrate familiar tools like Kibana, OpenSearch Dashboards, and pre-built applications seamlessly to BigQuery while enjoying a significant reduction in cost and management overhead. Mach5 takes advantage of the search index’s ability to comb through deeply nested data stored in BigQuery’s native JSON data type. Mach5 Community Edition is freely available for you to deploy and use within your Google Cloud Platform environment.

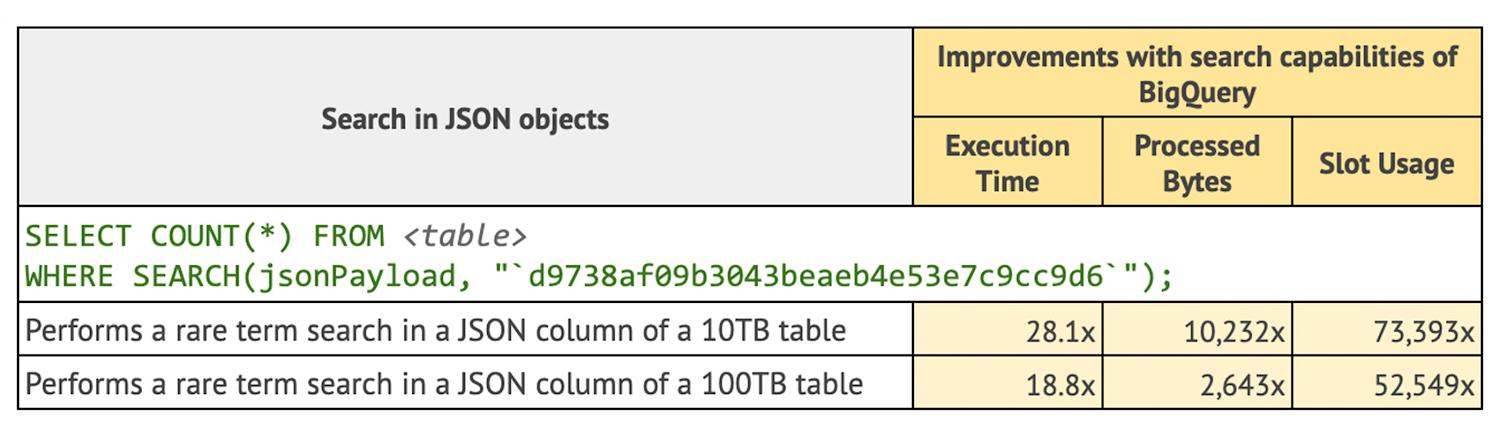

BigQuery’s SEARCH function operates directly on BigQuery’s native JSON type. Let’s look at some improvements the Google engineering team found when using search with indexing on JSON data.
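For a sense of what a search over a JSON column can look like, the sketch below composes a SEARCH call with the `json_scope` argument, which selects whether JSON values, keys, or both are searched. The table `my_dataset.events`, the column `payload`, the search term, and the function name are hypothetical and are not taken from the Mach5 integration or the engineering team’s tests.

```python
JSON_SCOPES = ("JSON_VALUES", "JSON_KEYS", "JSON_KEYS_AND_VALUES")


def json_search_sql(table: str, json_column: str, term: str, scope: str = "JSON_VALUES") -> str:
    """Build a query that searches a JSON column for a term."""
    if scope not in JSON_SCOPES:
        raise ValueError(f"scope must be one of {JSON_SCOPES}")
    # Keep the sketch simple: accept only alphanumeric terms so that no
    # quoting or escaping is needed inside the SQL string literal.
    if not term.isalnum():
        raise ValueError("this sketch only accepts alphanumeric search terms")
    return (
        f"SELECT * FROM `{table}` "
        f"WHERE SEARCH({json_column}, '{term}', json_scope => '{scope}')"
    )


if __name__ == "__main__":
    # Hypothetical names, for illustration only.
    print(json_search_sql("my_dataset.events", "payload", "checkout"))
    print(json_search_sql("my_dataset.events", "payload", "userId", scope="JSON_KEYS"))
```

Searching keys as well as values can be useful when the structure of the nested documents varies from row to row.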

Learning more

As you can see in the comparisons, there are already significant cost and performance improvements with BigQuery search functions and indexes, even at the petabyte level. Generally speaking, the larger the dataset, the more BigQuery can optimize. This means you can bring petabytes of data to BigQuery and still have it operate effectively.

Many customers also combine BigQuery search features with large-scale streaming pipelines built with BigQuery’s Storage Write API. The Storage Write API has a default ingestion rate of 3 GB per second, with additional quota available upon request. It also costs 50% less per GB than the previous streaming APIs offered by BigQuery. These streaming pipelines are fully managed by BigQuery and take care of all the operations from stream to index. Once data is available on the stream, any query you run with the SEARCH function will see accurate, available data.

To learn more about how BigQuery search features can help you build an operational data lake, check out this talk on Modern Security Analytics platforms. To see search in action, you can also watch this demo where a search index is built to improve simple searches of label and object data that is generated from running machine learning on vision data.

You can get started with the BigQuery sandbox and explore these search capabilities at no cost to confirm whether BigQuery fits your needs. The sandbox lets you experience BigQuery and the Google Cloud console without providing a credit card, creating a billing account, or enabling billing for your project.

By: Christopher Crosbie (Product Manager, Data Analytics) and Huong Phan (Software Engineer, Google)

Source: Google Cloud Blog
